Note 1: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Ctz

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Scheduling overhead is the extra time spent by the system or the algorithm to distribute work on multiple threads/tasks.

Back

ETH::2._Semester::PProg::Terminology

Scheduling overhead is the extra time spent by the system or the algorithm to distribute work on multiple threads/tasks.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Scheduling overhead}} is the {{c2::extra time spent by the system or the algorithm}} to distribute work on {{c3::multiple threads/tasks}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 2: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: DLk>&IUdG/

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Concurrency \(\Leftarrow\) Parallelism

Back

ETH::2._Semester::PProg::Terminology

Concurrency \(\Leftarrow\) Parallelism

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Concurrency {{c1::\(\Leftarrow\)}} Parallelism |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 3: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: DR#[(B?d4d

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A functional unit is a component of a CPU (or core) that performs a certain task; an execution unit is one such example.

Back

ETH::2._Semester::PProg::Terminology

A functional unit is a component of a CPU (or core) that performs a certain task; an execution unit is one such example.

performing a task - e.g. executing integer arithmetic operations

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::functional unit}} is a component of a CPU (or core) that {{c2::performs a certain task}}; an {{c3::execution unit}} is one such example. |

|

| Extra |

performing a task - e.g. executing integer arithmetic operations |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 4: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: DiKJq81cx0

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology PlsFix::DELETE

Events here are typically scheduler interactions causing different interleavings, but could also be, e.g. changing network latency.

Back

ETH::2._Semester::PProg::Terminology PlsFix::DELETE

Events here are typically scheduler interactions causing different interleavings, but could also be, e.g. changing network latency.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Events here are typically {{c1::scheduler interactions causing different interleavings}}, but could also be, e.g. changing network latency. |

|

Tags:

ETH::2._Semester::PProg::Terminology

PlsFix::DELETE

Note 5: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Dv[#nR{k`*

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A statement or instruction is (truly) atomic if it is executed by the CPU in a single, non-interruptible step.

Back

ETH::2._Semester::PProg::Terminology

A statement or instruction is (truly) atomic if it is executed by the CPU in a single, non-interruptible step.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A statement or instruction is {{c1::(truly) atomic}} if it is executed by the CPU in a {{c2::single, non-interruptible step}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 6: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: F@`C!Z*]zr

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Context switch overhead refers to resources required to set up an operation.

Back

ETH::2._Semester::PProg::Terminology

Context switch overhead refers to resources required to set up an operation.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Context switch overhead}} refers to {{c2::resources required to set up an operation}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 7: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: FKu}=}:F8|

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Given multiple threads, each executing a sequence of instructions, an interleaving is a sequence of instructions obtained from merging the individual sequences.

Back

ETH::2._Semester::PProg::Terminology

Given multiple threads, each executing a sequence of instructions, an interleaving is a sequence of instructions obtained from merging the individual sequences.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Given multiple threads, each executing a sequence of instructions, an {{c1::interleaving}} is {{c2::a sequence of instructions obtained from merging the individual sequences}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology
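
The interleaving definition above can be made concrete with a small sketch. The class `Interleavings` and its method names are hypothetical, not part of the deck; the code enumerates every merge of two instruction sequences that keeps each thread's own order.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: enumerates all interleavings of two instruction sequences.
class Interleavings {
    // Returns every merge of a and b that preserves the order within a and within b.
    static List<List<String>> all(List<String> a, List<String> b) {
        List<List<String>> result = new ArrayList<>();
        merge(a, 0, b, 0, new ArrayList<>(), result);
        return result;
    }

    private static void merge(List<String> a, int i, List<String> b, int j,
                              List<String> prefix, List<List<String>> out) {
        if (i == a.size() && j == b.size()) {
            out.add(new ArrayList<>(prefix)); // one complete interleaving
            return;
        }
        if (i < a.size()) {                   // next step is taken by thread A
            prefix.add(a.get(i));
            merge(a, i + 1, b, j, prefix, out);
            prefix.remove(prefix.size() - 1);
        }
        if (j < b.size()) {                   // next step is taken by thread B
            prefix.add(b.get(j));
            merge(a, i, b, j + 1, prefix, out);
            prefix.remove(prefix.size() - 1);
        }
    }
}
```

Two threads with two instructions each already yield six interleavings, which is why reasoning about all of them by hand quickly becomes impractical.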

Note 8: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Fn?QX?wZyt

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A context switch denotes the action of switching a computation unit from one computation to another.

Back

ETH::2._Semester::PProg::Terminology

A context switch denotes the action of switching a computation unit from one computation to another.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::context switch}} denotes the action of {{c2::switching a computation unit from one computation to another}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 9: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: GkQ^u.{q`{

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Scalability in our context means: by how much can a program be parallelized? What is the maximum speedup that can be achieved, given an infinite number of processors?

Back

ETH::2._Semester::PProg::Terminology

Scalability in our context means: by how much can a program be parallelized? What is the maximum speedup that can be achieved, given an infinite number of processors?

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Scalability}} in our context means: {{c2::by how much can a program be parallelized}}? What is the {{c3::maximum speedup}} that can be achieved, given an {{c4::infinite number of processors}}? |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 10: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Gx8iRncQ~1

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Parallelism can be specified explicitly by manually assigning tasks to threads or implicitly by using a framework that distributes tasks automatically.

Back

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Parallelism can be specified explicitly by manually assigning tasks to threads or implicitly by using a framework that distributes tasks automatically.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Parallelism can be specified {{c1::explicitly by manually assigning tasks to threads}} or {{c2::implicitly by using a framework that distributes tasks automatically}}. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

ETH::2._Semester::PProg::Terminology

Note 11: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: IExk};lu~_

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A map operates on each element of a collection independently to create a new collection of the same size.

Back

ETH::2._Semester::PProg::Terminology

A map operates on each element of a collection independently to create a new collection of the same size.

For instance, vector addition: each tuple (containing the nth element of both vectors) is mapped to its sum.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::map}} operates on {{c2::each element of a collection independently}} to create a {{c3::new collection of the same size}}. |

|

| Extra |

For instance, vector addition: each tuple (containing the nth element of both vectors) is mapped to its sum. |

|

Tags:

ETH::2._Semester::PProg::Terminology
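
A minimal sketch of the vector-addition map, assuming Java parallel streams as the execution mechanism (the note itself does not prescribe one). The class name `VectorAdd` is hypothetical.

```java
import java.util.stream.IntStream;

// Hypothetical example class: vector addition expressed as a map.
class VectorAdd {
    // Output element i depends only on a[i] and b[i], so all elements
    // can be computed independently; the result has the same size as the inputs.
    static int[] add(int[] a, int[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("vectors must have the same length");
        }
        return IntStream.range(0, a.length)
                        .parallel()             // elements may be processed by different threads
                        .map(i -> a[i] + b[i])
                        .toArray();             // encounter order of the range is preserved
    }
}
```

Because no element depends on another, no synchronisation between the worker threads is needed here.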

Note 12: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: IOG>^+Y+Tt

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Lockout means needlessly preventing a thread from entering a critical section.

Back

ETH::2._Semester::PProg::Terminology

Lockout means needlessly preventing a thread from entering a critical section.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Lockout}} means {{c2::needlessly preventing}} a thread from entering a critical section. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 13: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: I]N`CBnVud

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Amdahl's Law specifies the maximum amount of speedup that can be achieved for a program with a given sequential part. The pessimistic view on scalability.

Back

ETH::2._Semester::PProg::Terminology

Amdahl's Law specifies the maximum amount of speedup that can be achieved for a program with a given sequential part. The pessimistic view on scalability.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Amdahl's Law}} specifies {{c2::the maximum amount of speedup}} that can be achieved for a program with a given sequential part. The {{c3::pessimistic}} view on scalability. |

|

Tags:

ETH::2._Semester::PProg::Terminology
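
A small sketch of the bound Amdahl's Law gives, in its usual form \(S_p \le \frac{1}{f + \frac{1-f}{p}}\), where \(f\) is the sequential fraction of the program and \(p\) the number of processors. The symbol \(f\) and the class name `Amdahl` are assumptions for illustration and do not appear in the note.

```java
// Hypothetical helper for Amdahl's Law; f is the sequential fraction (0 < f <= 1).
class Amdahl {
    // Upper bound on the speedup with p processors.
    static double maxSpeedup(double f, int p) {
        return 1.0 / (f + (1.0 - f) / p);
    }

    // Bound for an unlimited number of processors: the parallel part costs nothing,
    // only the sequential part remains.
    static double limit(double f) {
        return 1.0 / f;
    }
}
```

With a sequential fraction of 10%, ten processors give a speedup of at most about 5.26, and no number of processors can exceed 10. This is the pessimistic view the note refers to.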

Note 14: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Ig1]I+kiPO

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Efficiency is a relative value.

Back

ETH::2._Semester::PProg::Terminology

Efficiency is a relative value.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Efficiency is a {{c1::relative value}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 15: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Ii]YQh:cvR

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Vectorisation uses special machine code instructions to execute a single operation (e.g. plus) on a chunk of data (e.g. an array segment).

Back

ETH::2._Semester::PProg::Terminology

Vectorisation uses special machine code instructions to execute a single operation (e.g. plus) on a chunk of data (e.g. an array segment).

Can significantly improve performance.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Vectorisation}} uses special machine code instructions to execute a {{c2::single operation (e.g. plus)}} on a {{c3::chunk of data (e.g. an array segment)}}. |

|

| Extra |

Can significantly improve performance. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 16: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Ivkg+_~|E~

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

With fine granularity, work is split into small tasks. This can be parallelized more, but also adds more overhead.

Back

ETH::2._Semester::PProg::Terminology

With fine granularity, work is split into small tasks. This can be parallelized more, but also adds more overhead.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

With {{c1::fine granularity}}, work is split into {{c2::small tasks}}. This can be {{c3::parallelized more}}, but also {{c4::adds more overhead}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 17: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: J$-<~zgXtb

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A process is an independently running instance of a program/application, typically on the operating system level.

Back

ETH::2._Semester::PProg::Terminology

A process is an independently running instance of a program/application, typically on the operating system level.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::process}} is an independently running instance of a {{c2::program/application}}, typically on the {{c3::operating system level}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 18: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: J>(cyk?,KH

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Mutual exclusion means preventing more than one thread from being in a critical section, i.e. from executing a given piece of code, at a given moment in time.

Back

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Mutual exclusion means preventing more than one thread from being in a critical section, i.e. from executing a given piece of code, at a given moment in time.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Mutual exclusion}} means preventing {{c2::more than one thread from being in a critical section, i.e. from executing a given piece of code, at a given moment in time}}. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

ETH::2._Semester::PProg::Terminology
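
One way to obtain mutual exclusion in Java is the `synchronized` keyword. The sketch below is illustrative only; the class `SharedCounter` and the method `runDemo` are hypothetical names, not taken from the course material.

```java
// Hypothetical example: a counter whose increment is a critical section.
class SharedCounter {
    private int value = 0;

    // synchronized: at most one thread at a time executes a synchronized
    // method on the same SharedCounter instance.
    synchronized void increment() {
        value++;
    }

    synchronized int get() {
        return value;
    }

    // Starts the given number of threads, each incrementing perThread times,
    // waits for all of them and returns the final count.
    static int runDemo(int threads, int perThread) {
        SharedCounter counter = new SharedCounter();
        Thread[] workers = new Thread[threads];
        for (int t = 0; t < threads; t++) {
            workers[t] = new Thread(() -> {
                for (int i = 0; i < perThread; i++) {
                    counter.increment();
                }
            });
            workers[t].start();
        }
        for (Thread w : workers) {
            try {
                w.join();
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
        }
        return counter.get();
    }
}
```

Without `synchronized`, `value++` is a read-modify-write sequence, so some interleavings could lose updates; with it, the final count always equals `threads * perThread`.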

Note 19: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: J?RC.oYL|l

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Synchronisation is some form of orchestration via threads.

Back

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Synchronisation is some form of orchestration via threads.

Typically used to prevent bad interleavings.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Synchronisation}} is {{c2::some form of orchestration via threads}}. |

|

| Extra |

Typically used to prevent bad interleavings. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

ETH::2._Semester::PProg::Terminology

Note 20: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: Jg[_~/e65f

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

What does multithreading mean?

Back

ETH::2._Semester::PProg::Terminology

What does multithreading mean?

Threads running in parallel.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What does multithreading mean? |

|

| Back |

Threads running in parallel. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 21: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: JxOZtN8PCU

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Efficiency = \(\frac{S_p}{p}\)

Back

ETH::2._Semester::PProg::Terminology

Efficiency = \(\frac{S_p}{p}\)

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Efficiency}} = {{c2::\(\frac{S_p}{p}\)}} |

|

Tags:

ETH::2._Semester::PProg::Terminology
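
A worked sketch of the efficiency formula, assuming the usual definition of speedup \(S_p = \frac{T_1}{T_p}\) (the note itself only gives \(\frac{S_p}{p}\)). The class name `ParallelMetrics` is hypothetical.

```java
// Hypothetical helper: speedup and efficiency from measured run times.
class ParallelMetrics {
    // Speedup S_p = T_1 / T_p (assumed definition, see lead-in).
    static double speedup(double t1, double tp) {
        return t1 / tp;
    }

    // Efficiency = S_p / p: the speedup relative to the number of processors used.
    static double efficiency(double t1, double tp, int p) {
        return speedup(t1, tp) / p;
    }
}
```

For example, if a program takes 100 s on one processor and 25 s on 8 processors, the speedup is 4 and the efficiency is 0.5, i.e. on average each processor is put to use only half as well as in the sequential run.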

Note 22: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: K,fisLmPeW

deleted

Deleted Note

Front

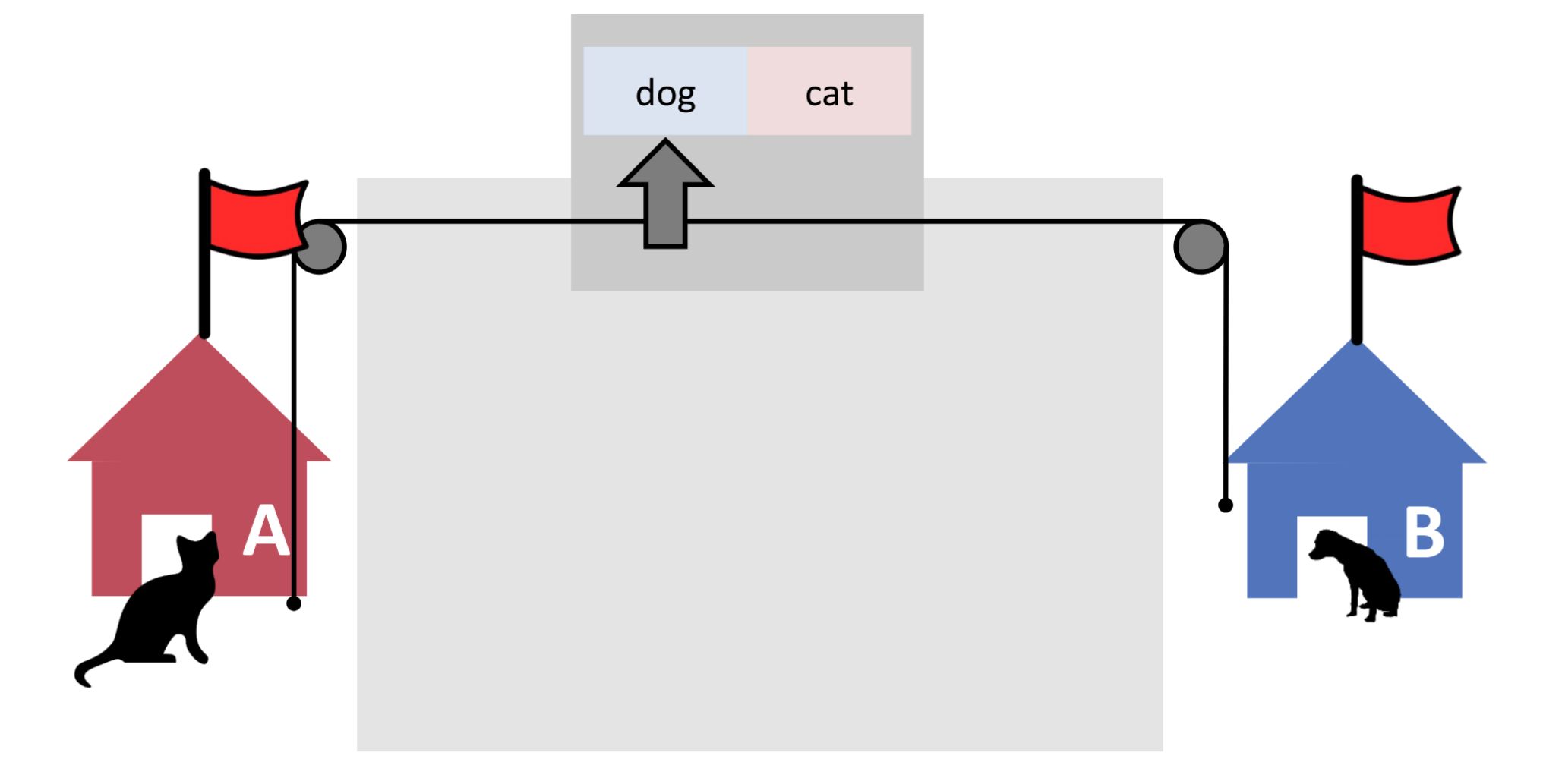

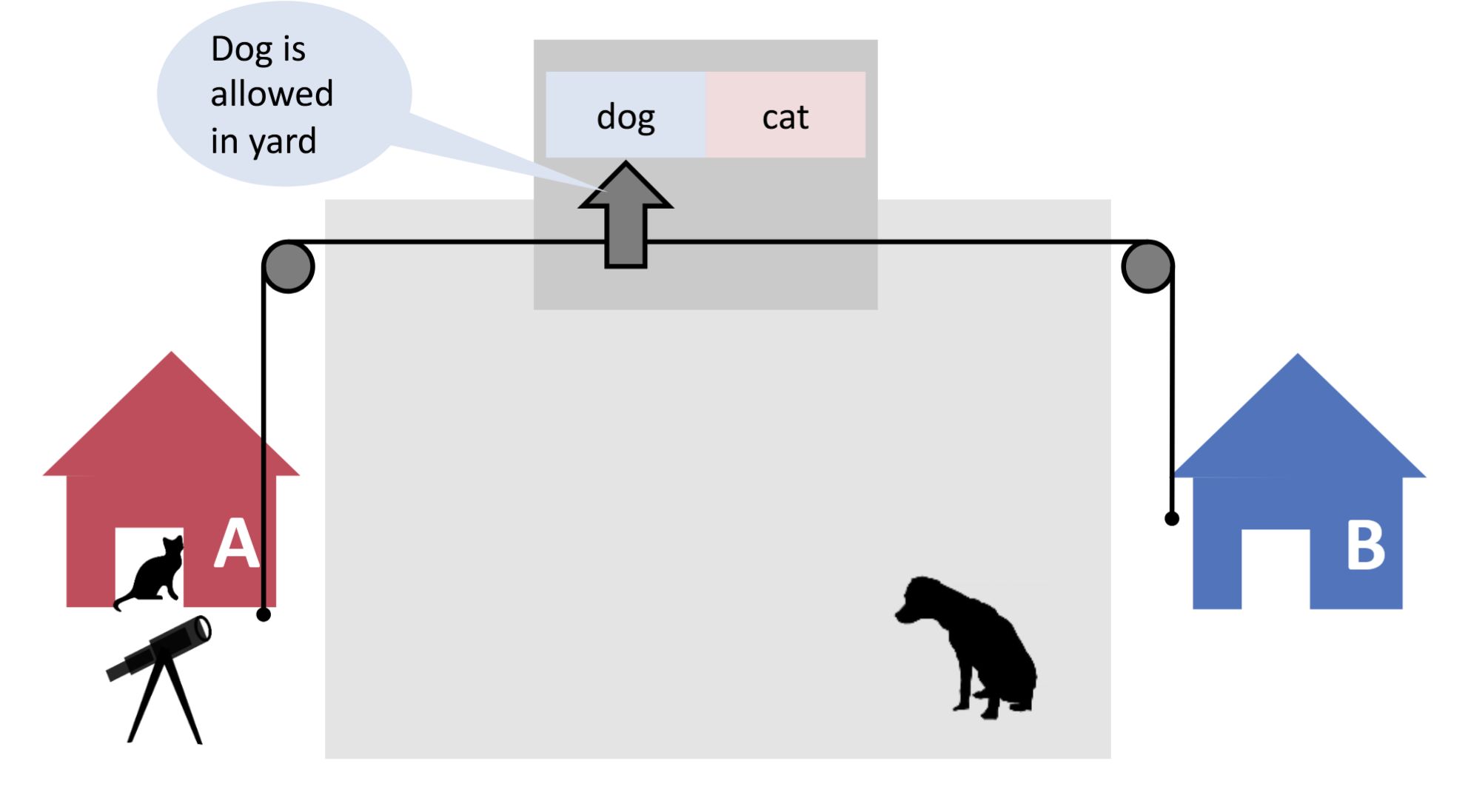

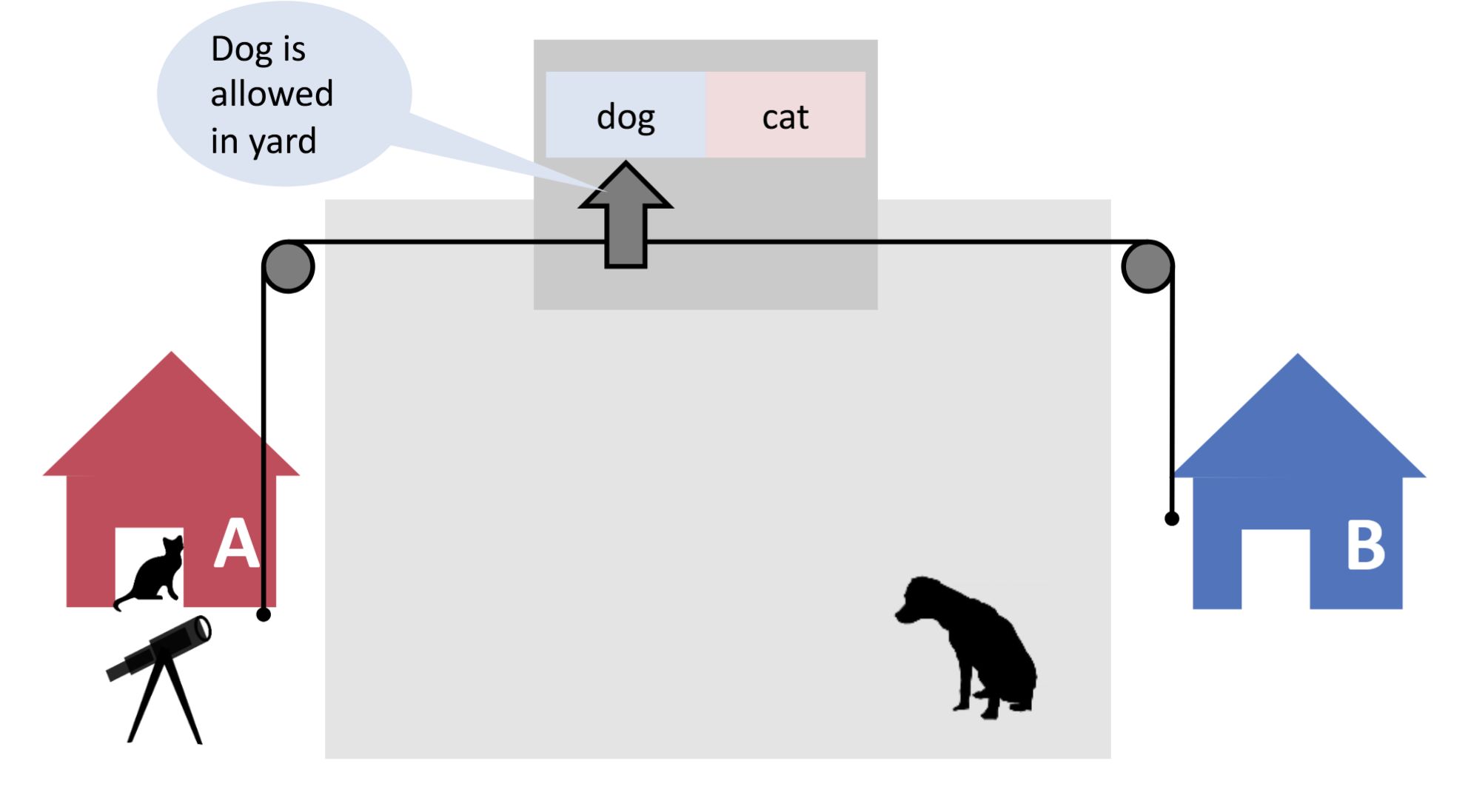

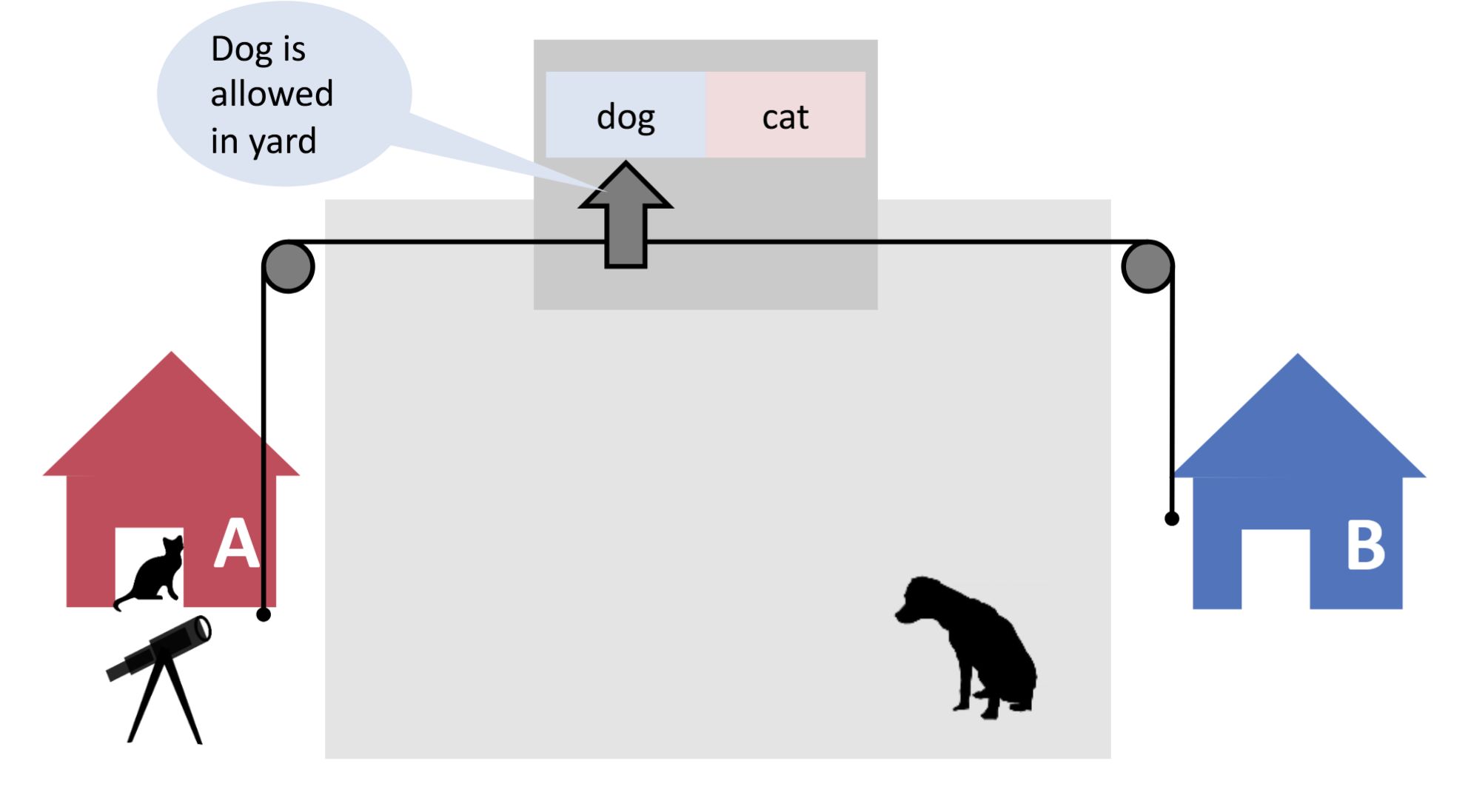

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

What's the problem with notification?

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

What's the problem with notification?

No mutual exclusion!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What's the problem with notification?<br><br><img src="paste-f2d8f3beeaf6f48f730430087921c3e21aa59aca.jpg"> |

|

| Back |

No mutual exclusion!<br><br><img src="paste-a3df6d640733f683122a81d29a0d1ccafc0371bf.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 23: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: K>ug`7HTL`

deleted

Deleted Note

Front

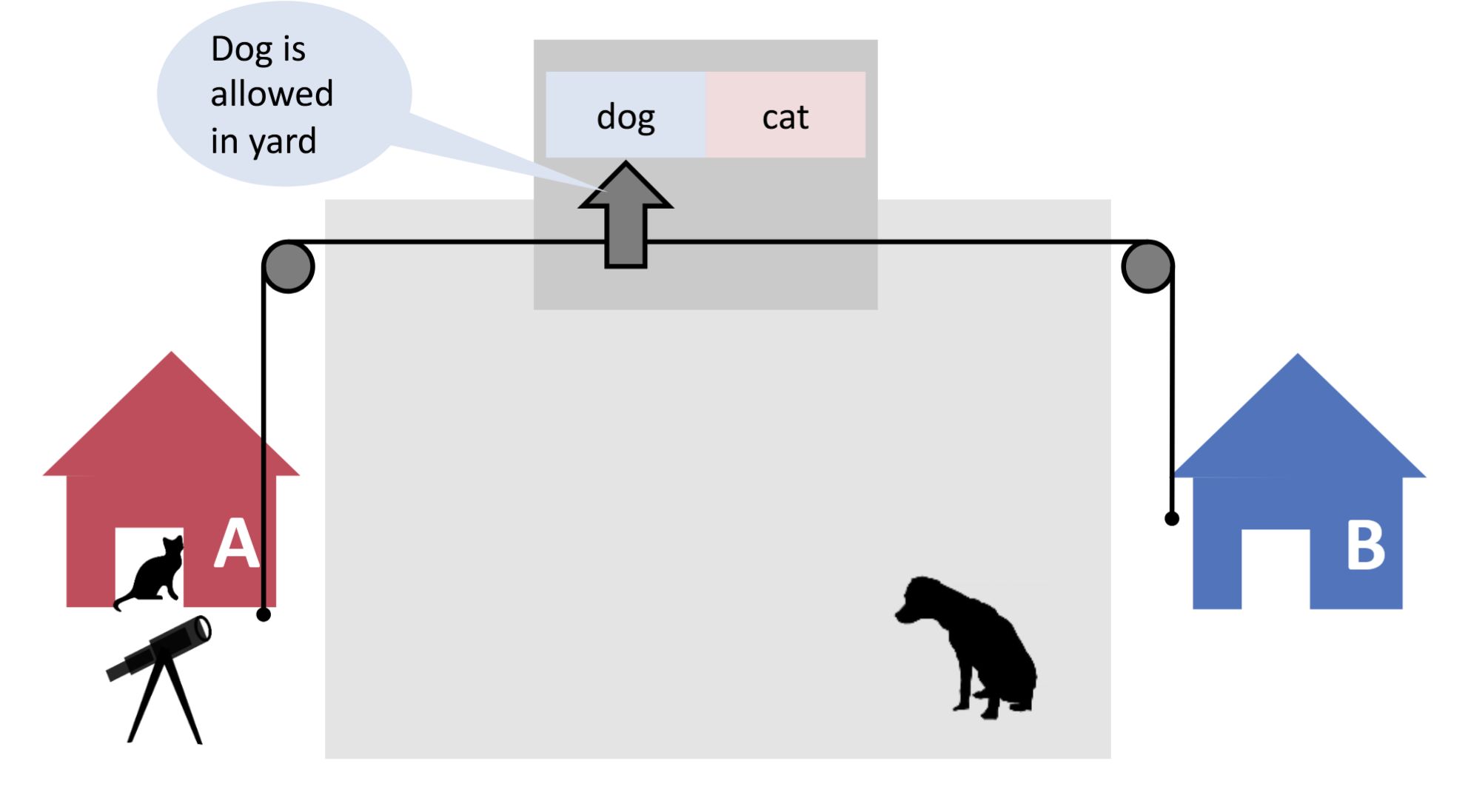

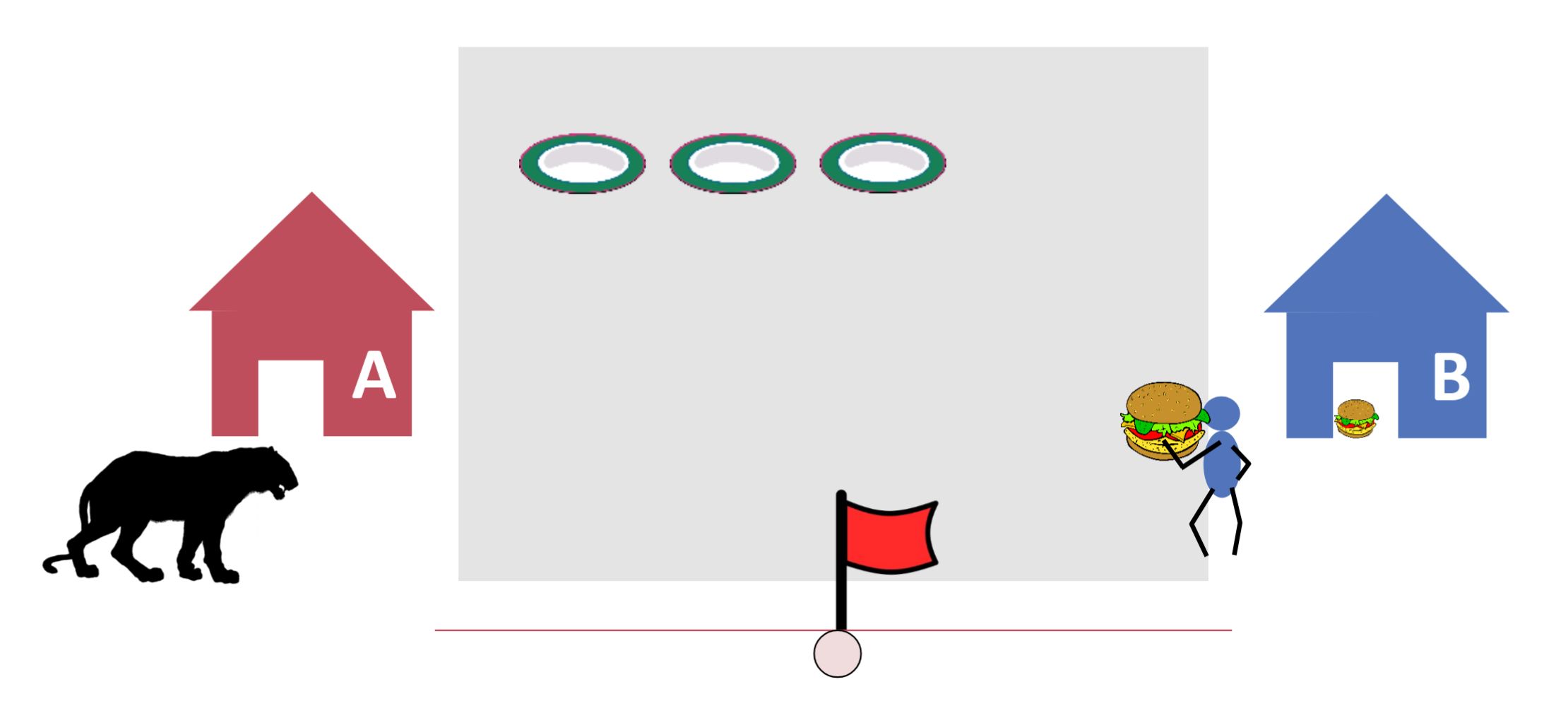

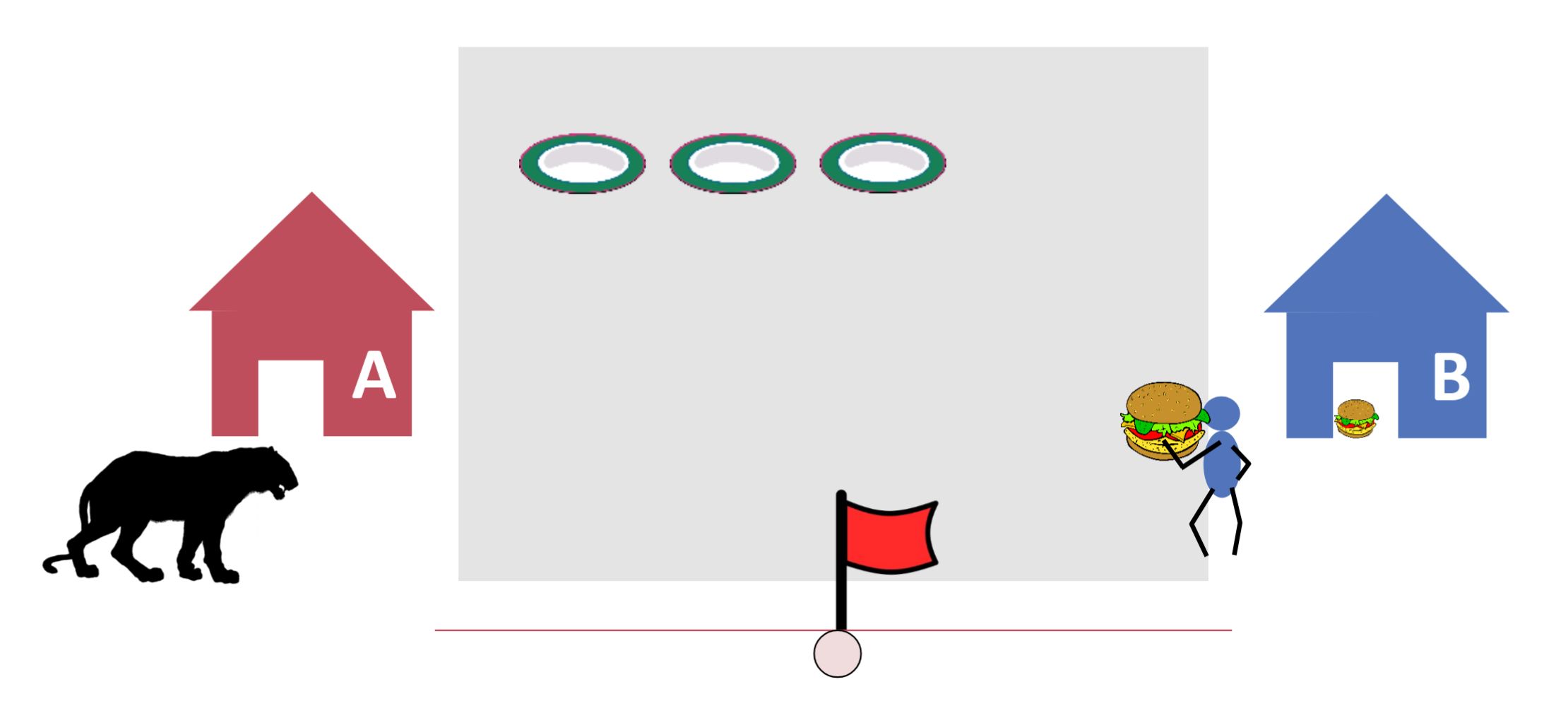

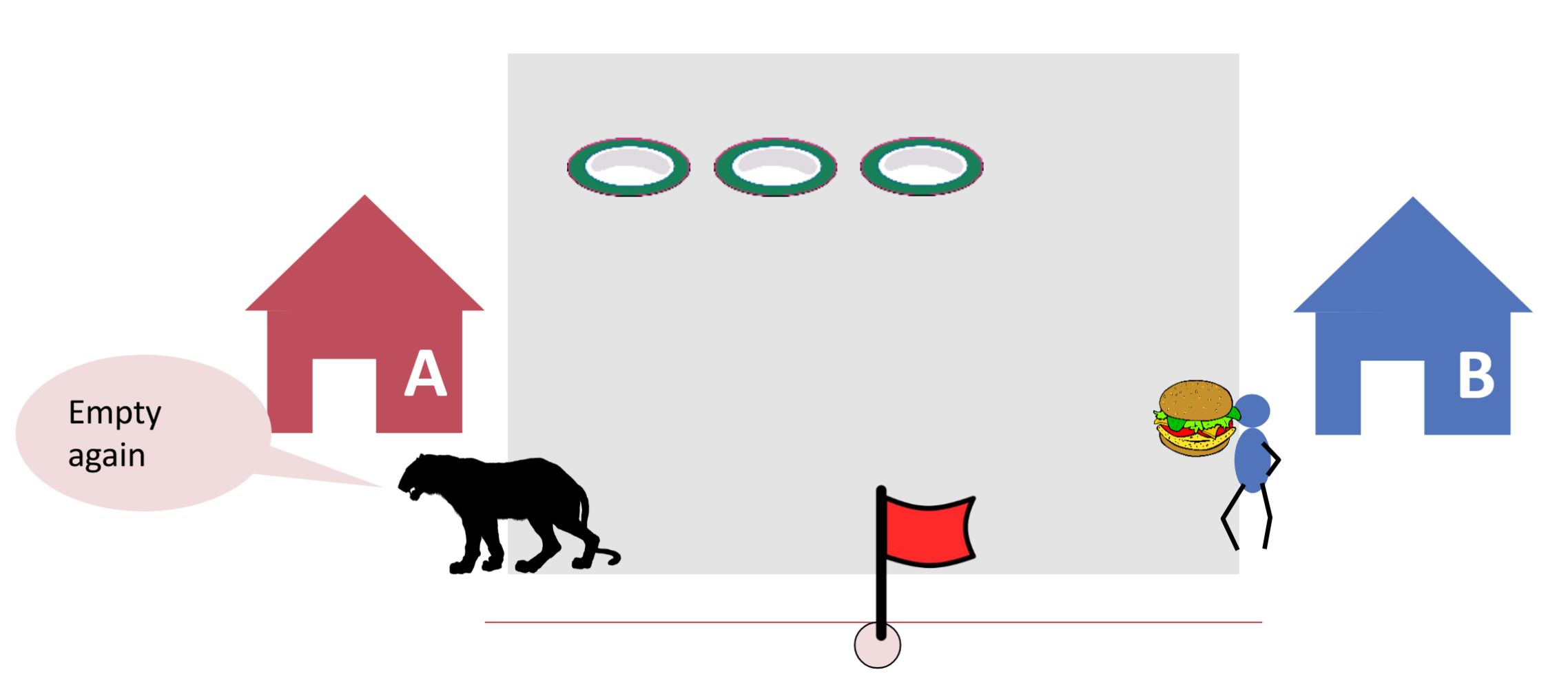

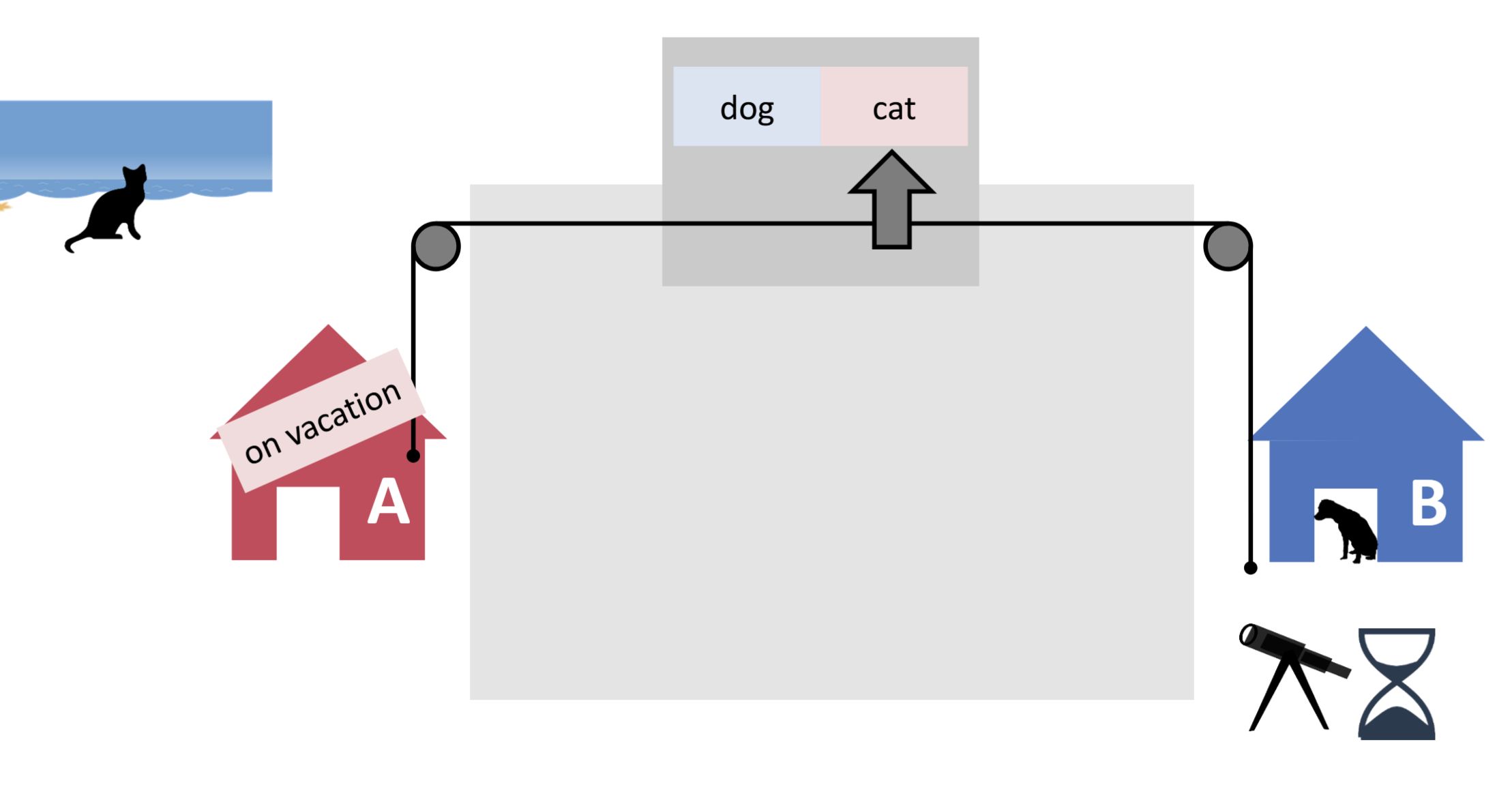

ETH::2._Semester::PProg::01._Introduction::3._Readers-Writers

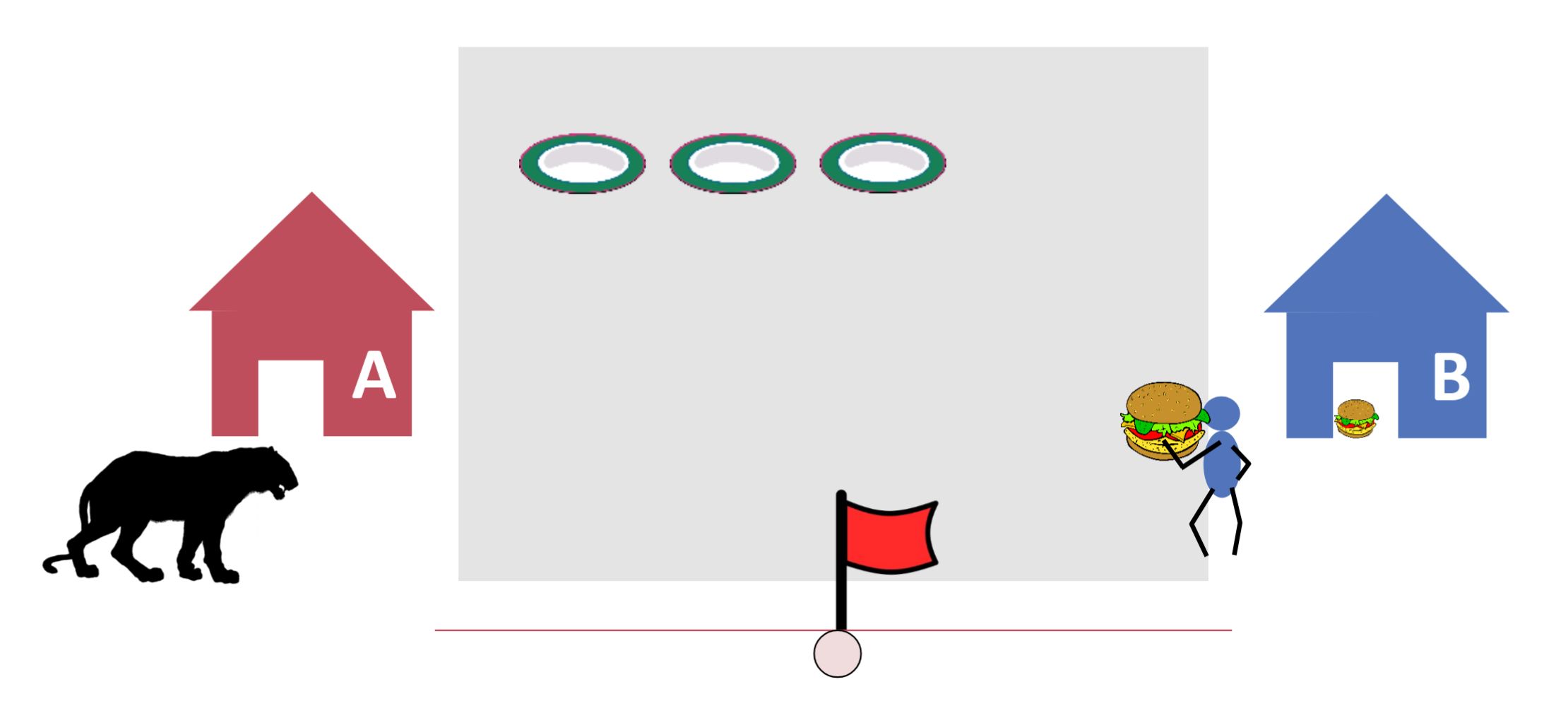

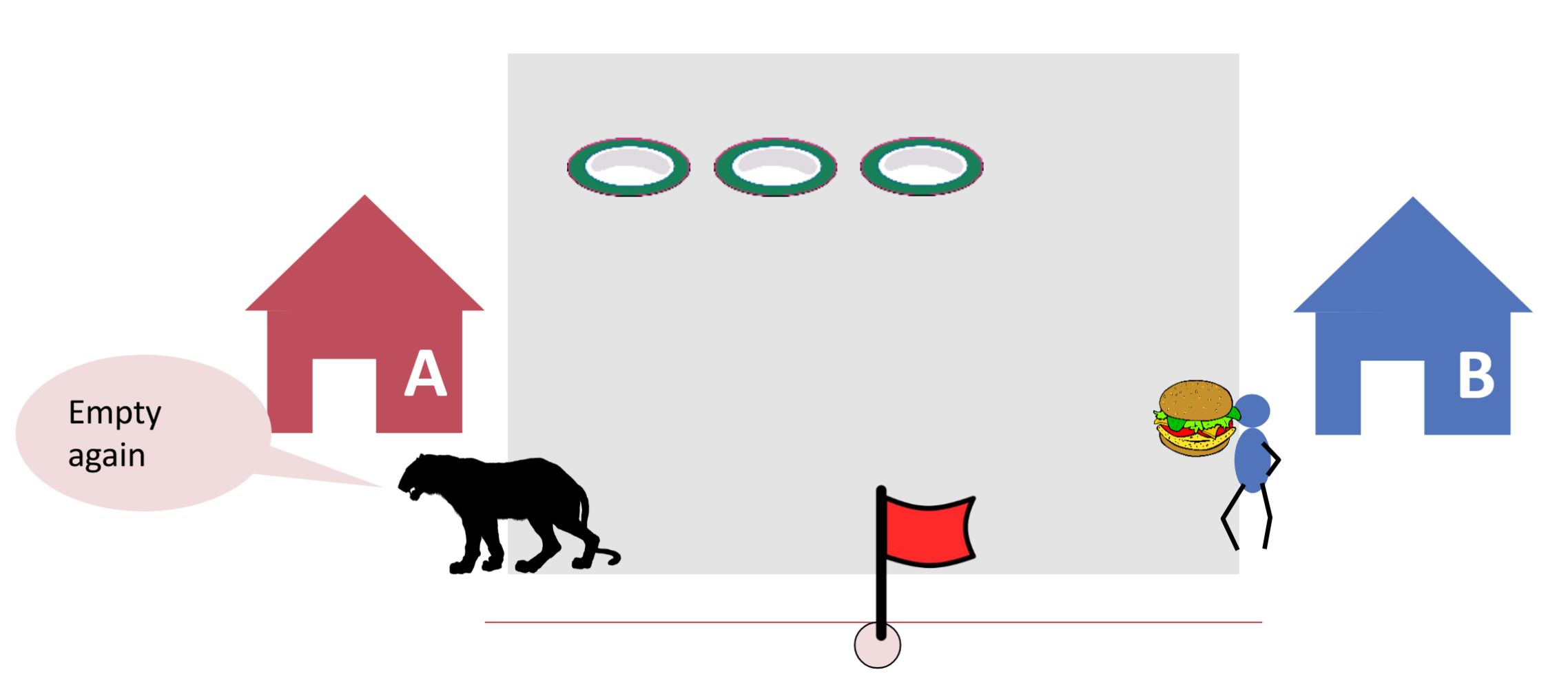

What's an issue of the Readers-Writers pattern?

Back

ETH::2._Semester::PProg::01._Introduction::3._Readers-Writers

What's an issue of the Readers-Writers pattern?

The reader may not be reading at all times, causing input loss!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What's an issue of the Readers-Writers pattern?<br><br><img src="paste-5b1101e8375a8f6016a7b86991131d792b56304f.jpg"> |

|

| Back |

The reader may not be reading at all times, causing input loss!<br><br><img src="paste-48656cf3c97513bbb32ce7e85601825b7d136132.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::3._Readers-Writers

Note 24: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: L#!rmjGpR]

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

\(T_p\) is the time required to perform work on p processors

Back

ETH::2._Semester::PProg::Terminology

\(T_p\) is the time required to perform work on p processors

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::\(T_p\)}} is the time required to perform work on {{c2::p processors}} |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 25: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: L/^V2kJI?*

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Schedulers typically do not give guarantees about when and how often they act, who gets selected next, etc.

Back

ETH::2._Semester::PProg::Terminology

Schedulers typically do not give guarantees about when and how often they act, who gets selected next, etc.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Schedulers}} typically {{c2::do not give guarantees}} about {{c3::when and how often they act}}, {{c4::who gets selected next}}, etc. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 26: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: L;tWm+I&15

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A bad interleaving is an interleaving that yields a problematic or otherwise undesirable computation. E.g. an incorrect result, a deadlock or non-deterministic output.

Back

ETH::2._Semester::PProg::Terminology

A bad interleaving is an interleaving that yields a problematic or otherwise undesirable computation. E.g. an incorrect result, a deadlock or non-deterministic output.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::bad interleaving}} is an interleaving that yields {{c2::a problematic or otherwise undesirable computation}}. E.g. {{c3::an incorrect result, a deadlock or non-deterministic output}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 27: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: L~Q8Y|:``w

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A lock can be acquired/locked by a thread, and is then held until it is released/unlocked.

Back

ETH::2._Semester::PProg::Terminology

A lock can be acquired/locked by a thread, and is then held until it is released/unlocked.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A lock can be {{c1::acquired/locked}} by a thread, and is then held until it is {{c1::released/unlocked}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology
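
The acquire/release cycle of a lock can be sketched with `java.util.concurrent.locks.ReentrantLock`. The class `Account` and its methods are hypothetical and serve only as an illustration.

```java
import java.util.concurrent.locks.ReentrantLock;

// Hypothetical example: a balance protected by an explicit lock.
class Account {
    private final ReentrantLock lock = new ReentrantLock();
    private long balance = 0;

    void deposit(long amount) {
        lock.lock();            // acquire: blocks until the lock is available
        try {
            balance += amount;  // the lock is held while this runs
        } finally {
            lock.unlock();      // release, even if the body throws
        }
    }

    long getBalance() {
        lock.lock();
        try {
            return balance;
        } finally {
            lock.unlock();
        }
    }

    // True only while the calling thread holds the lock.
    boolean heldByCaller() {
        return lock.isHeldByCurrentThread();
    }
}
```

Placing `unlock()` in a `finally` block is the common idiom: it ensures the lock is released on every path, so other threads are not blocked forever by an exception.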

Note 28: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: MX>`Kg8*SU

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Which events can influence if a race condition happens or not? (Assuming it is already present in the code)

Back

ETH::2._Semester::PProg::Terminology

Which events can influence if a race condition happens or not? (Assuming it is already present in the code)

scheduler interactions can cause different interleavings

variable network latency

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

Which events can influence if a race condition happens or not? (Assuming it is already present in the code) |

|

| Back |

scheduler interactions can cause different interleavings<br>variable network latency |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 29: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: M`z+&R0ZMn

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

What's the problem with putting up flags first and then checking the neighbor?

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

What's the problem with putting up flags first and then checking the neighbor?

We still have livelock and starvation!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What's the problem with putting up flags first and then checking the neighbor?<br><br><img src="paste-dc4d0a04c8aa3beea684219d0fbb54e515ee9fa4.jpg"> |

|

| Back |

We still have livelock and starvation!<br><br><img src="paste-4ee017b283384b29010f1bb07f97648b18e38481.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 30: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: N-`{p4Lt3o

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction

Parallel execution can introduce inefficiencies such as communication overhead, load imbalance, and idle time due to task dependencies or waiting for data exchange.

Back

ETH::2._Semester::PProg::01._Introduction

Parallel execution can introduce inefficiencies such as communication overhead, load imbalance, and idle time due to task dependencies or waiting for data exchange.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Parallel execution can introduce inefficiencies such as {{c1::communication overhead}}, {{c2::load imbalance}}, and {{c3::idle time due to task dependencies or waiting for data exchange}}. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

Note 31: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: NAGc`DO5VT

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Work in a task graph is denoted \(T_1\) and equals the sum of the cost of all nodes in the graph.

Back

ETH::2._Semester::PProg::Terminology

Work in a task graph is denoted \(T_1\) and equals the sum of the cost of all nodes in the graph.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Work}} in a task graph is denoted {{c2::\(T_1\)}} and equals the {{c3::sum of the cost of all nodes}} in the graph. |

|

Tags:

ETH::2._Semester::PProg::Terminology
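
As a small illustration of the definition of work, assume a task graph whose nodes are stored as a map from a node name to its cost. This representation and the class name `TaskGraphWork` are assumptions made for the sketch.

```java
import java.util.Map;

// Hypothetical helper: computes the work T_1 of a task graph.
class TaskGraphWork {
    // Work is the sum of the cost of all nodes; the edges (dependencies)
    // do not matter for T_1, only for how much can run in parallel.
    static long work(Map<String, Long> nodeCosts) {
        long sum = 0;
        for (long cost : nodeCosts.values()) {
            sum += cost;
        }
        return sum;
    }
}
```

For nodes with costs 2, 3 and 5 the work is 10, regardless of how the nodes depend on each other.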

Note 32: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: NAqmdxn~W[

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction

Managing shared resources in parallel programming introduces challenges such as race conditions, synchronization, deadlock, livelock etc.

Back

ETH::2._Semester::PProg::01._Introduction

Managing shared resources in parallel programming introduces challenges such as race conditions, synchronization, deadlock, livelock etc.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Managing shared resources in parallel programming introduces challenges such as {{c1::race conditions, synchronization, deadlock, livelock etc}}. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

Note 33: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: O&WdPAf/N%

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Deadlock is circular waiting/blocking (no instructions are executed/CPU time is used) between threads, so that the system (union of all threads) cannot make any progress anymore.

Back

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Deadlock is circular waiting/blocking (no instructions are executed/CPU time is used) between threads, so that the system (union of all threads) cannot make any progress anymore.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Deadlock}} is {{c2::circular waiting/blocking (no instructions are executed/CPU time is used) between threads, so that the system (union of all threads) cannot make any progress anymore}}. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

ETH::2._Semester::PProg::Terminology

Note 34: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: O?>[neGb1v

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

When decomposing work into tasks using divide and conquer, a sequential cutoff means stop splitting at a certain problem size.

Back

ETH::2._Semester::PProg::Terminology

When decomposing work into tasks using divide and conquer, a sequential cutoff means stop splitting at a certain problem size.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

When decomposing work into tasks using divide and conquer, a {{c1::sequential cutoff}} means {{c2::stop splitting at a certain problem size}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology
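
A minimal divide-and-conquer sketch with a sequential cutoff, using Java's Fork/Join framework. The class `SumTask` and the cutoff value of 1000 are assumptions for illustration; a suitable cutoff has to be found by measurement.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Hypothetical example: parallel array sum with a sequential cutoff.
class SumTask extends RecursiveTask<Long> {
    static final int CUTOFF = 1_000; // assumed threshold, not a recommended value
    private final int[] data;
    private final int lo;
    private final int hi;

    SumTask(int[] data, int lo, int hi) {
        this.data = data;
        this.lo = lo;
        this.hi = hi;
    }

    @Override
    protected Long compute() {
        if (hi - lo <= CUTOFF) {             // sequential cutoff: stop splitting
            long sum = 0;
            for (int i = lo; i < hi; i++) {
                sum += data[i];
            }
            return sum;
        }
        int mid = lo + (hi - lo) / 2;
        SumTask left = new SumTask(data, lo, mid);
        SumTask right = new SumTask(data, mid, hi);
        left.fork();                          // schedule the left half asynchronously
        long rightSum = right.compute();      // compute the right half in this thread
        return left.join() + rightSum;        // wait for the left half and combine
    }

    static long sum(int[] data) {
        return ForkJoinPool.commonPool().invoke(new SumTask(data, 0, data.length));
    }
}
```

Below the cutoff the task runs a plain loop, which avoids creating many tiny tasks whose scheduling overhead would outweigh the work they perform.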

Note 35: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: OQfr*wOjfT

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A thread starves if it can never enter a/any critical section.

Back

ETH::2._Semester::PProg::Terminology

A thread starves if it can never enter a/any critical section.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A thread {{c1::starves}} if it can {{c2::never enter a/any critical section}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 36: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

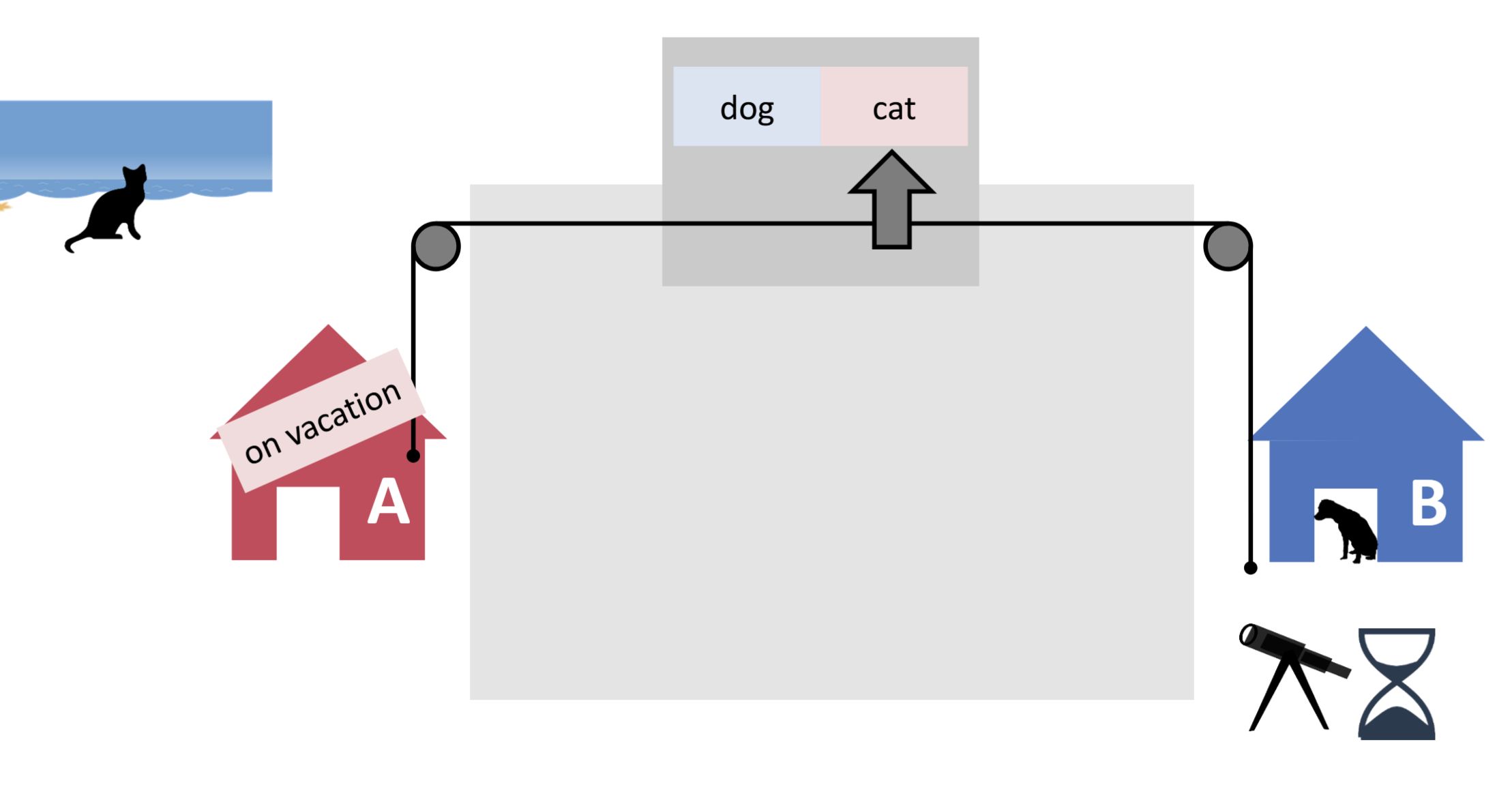

GUID: P%}^}]&H%f

deleted

Deleted Note

Front

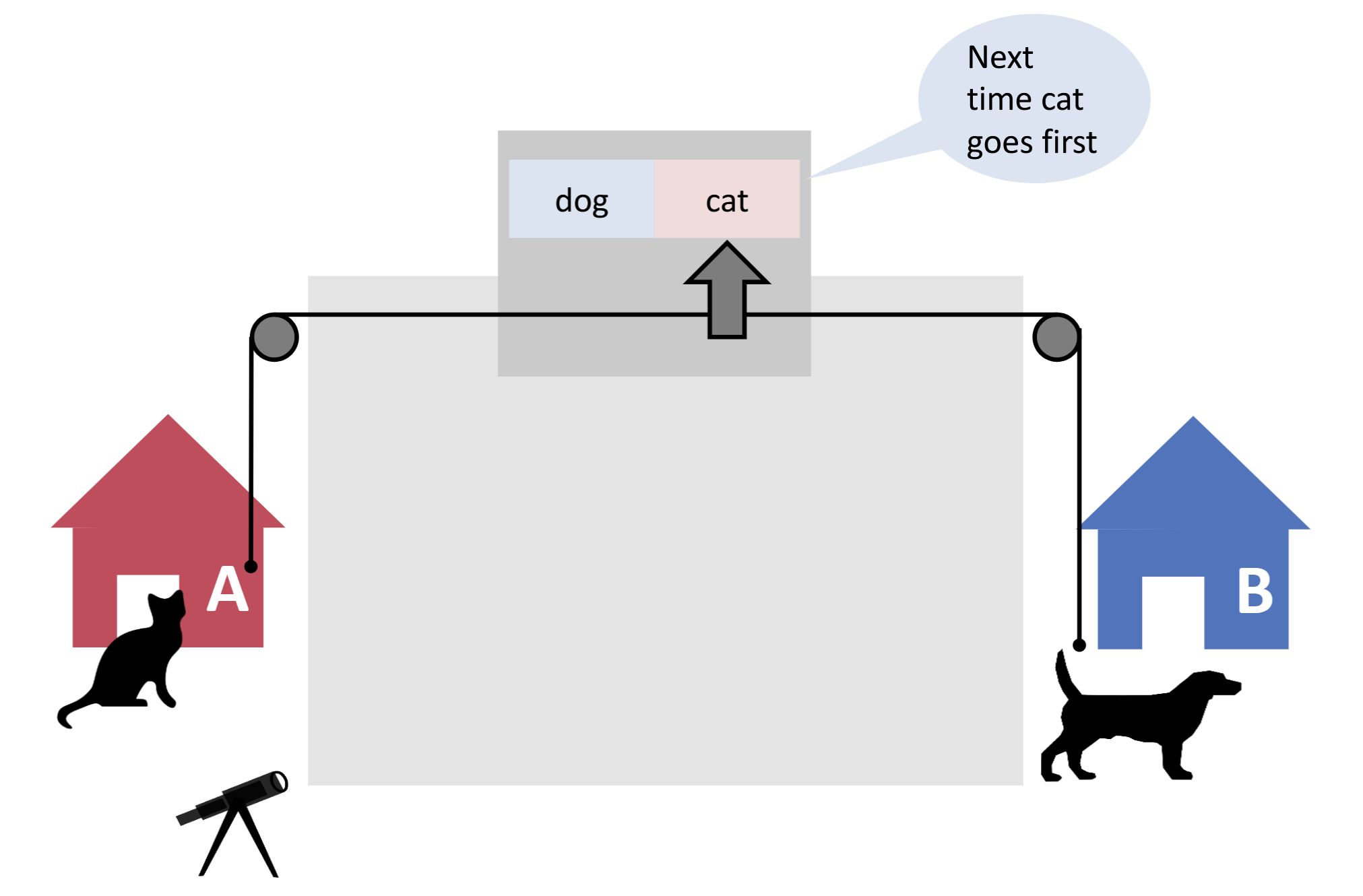

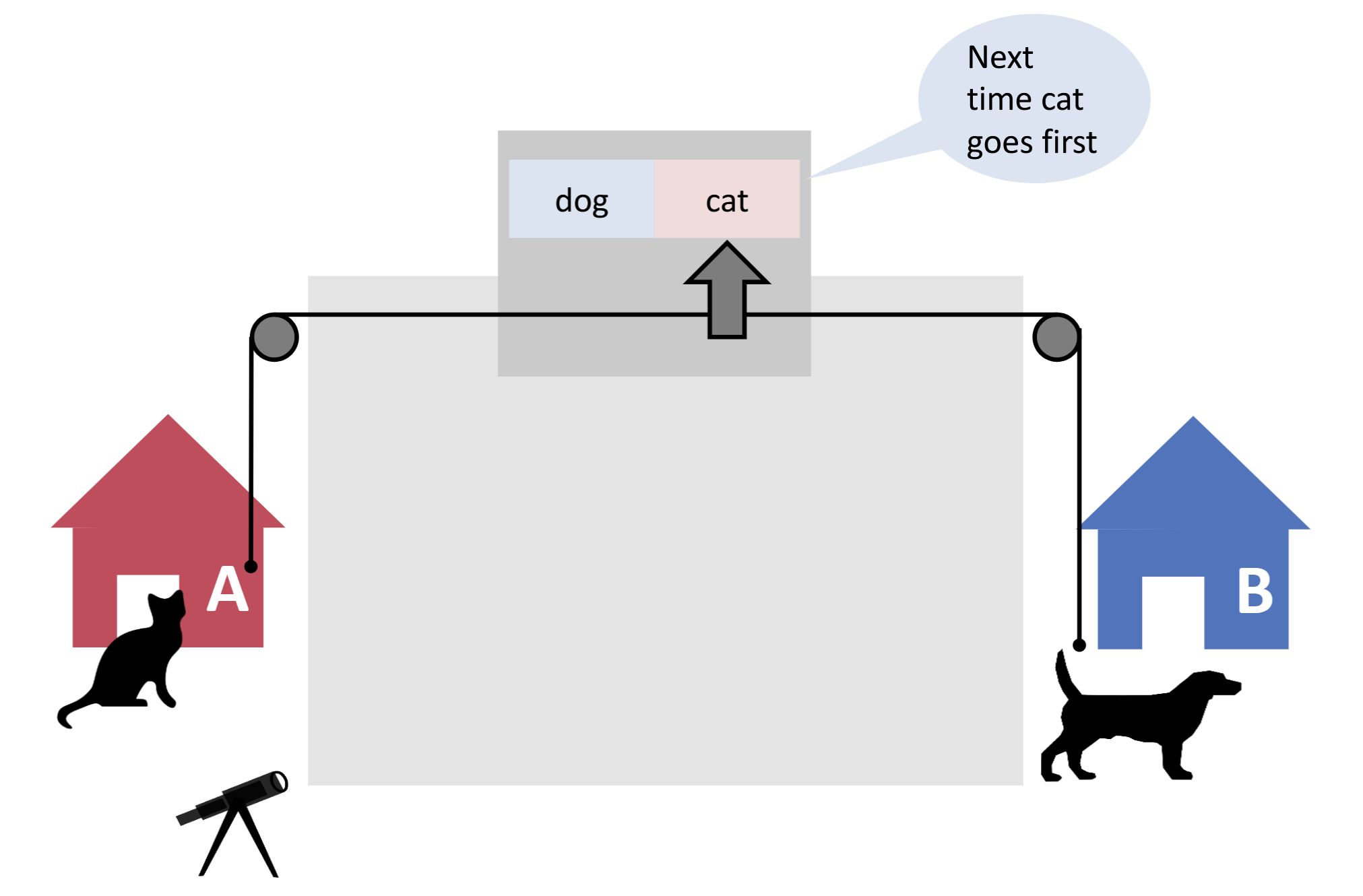

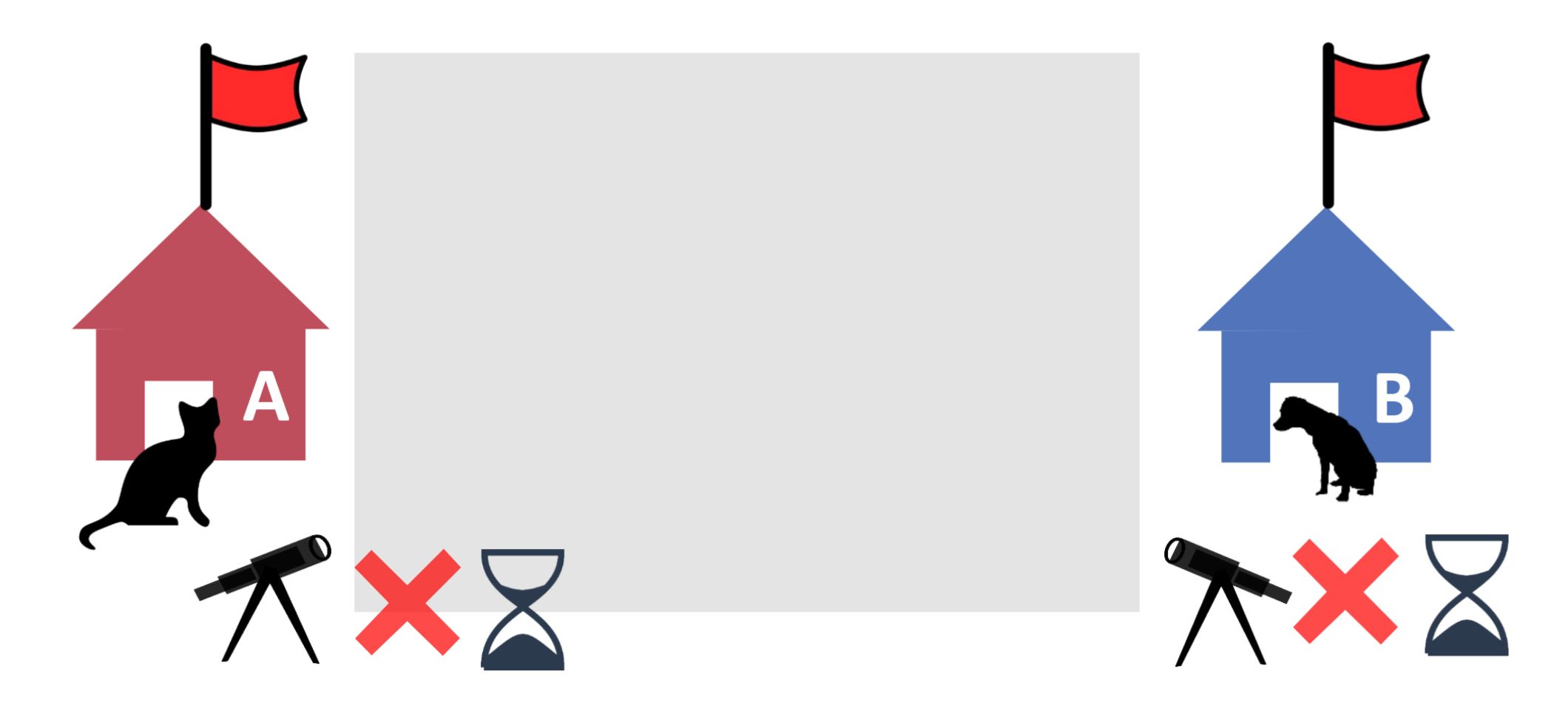

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

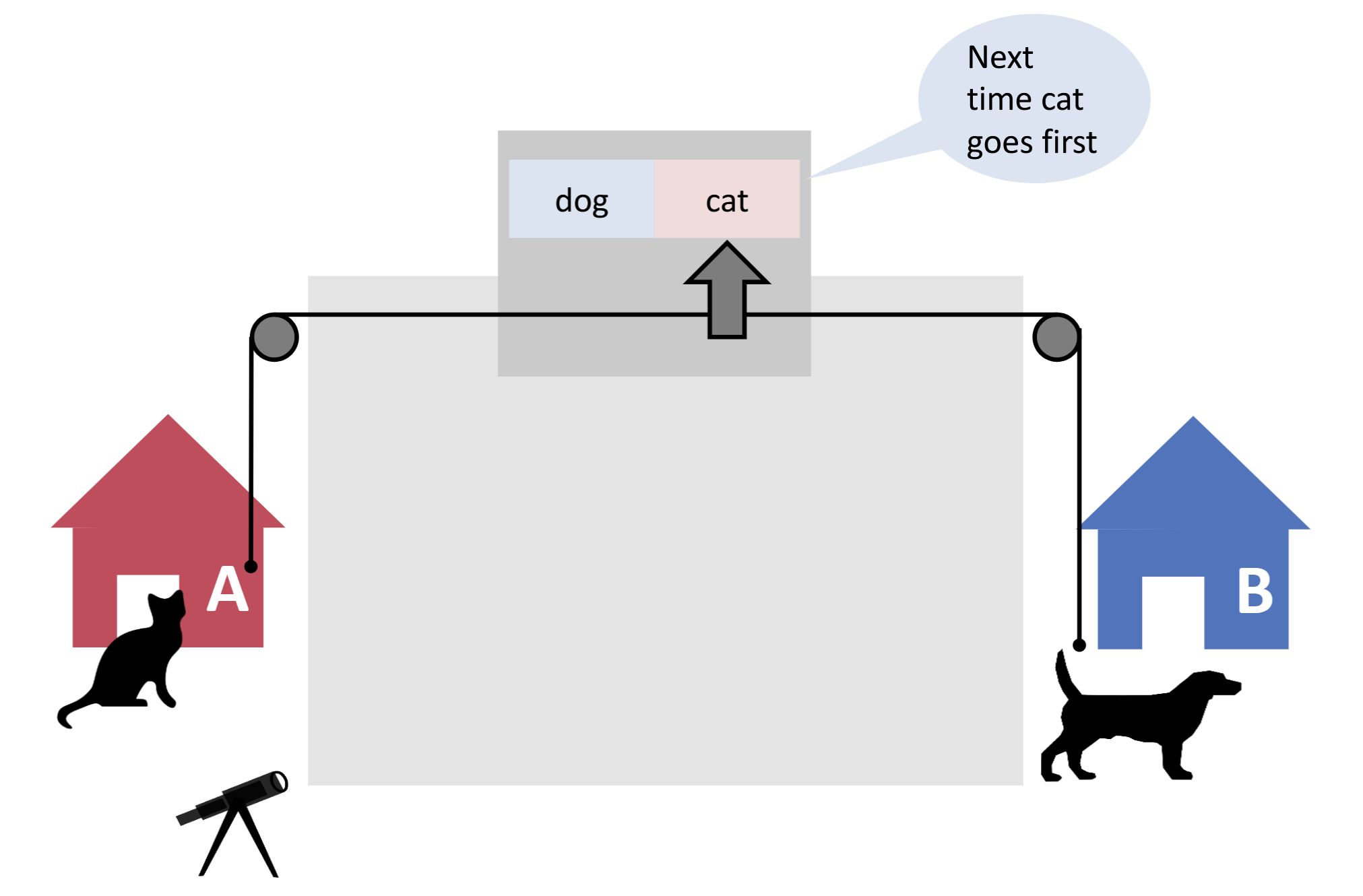

How can we solve starvation in the cat and dog problem?

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

How can we solve starvation in the cat and dog problem?

Combine flags with a turn-based determinator!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

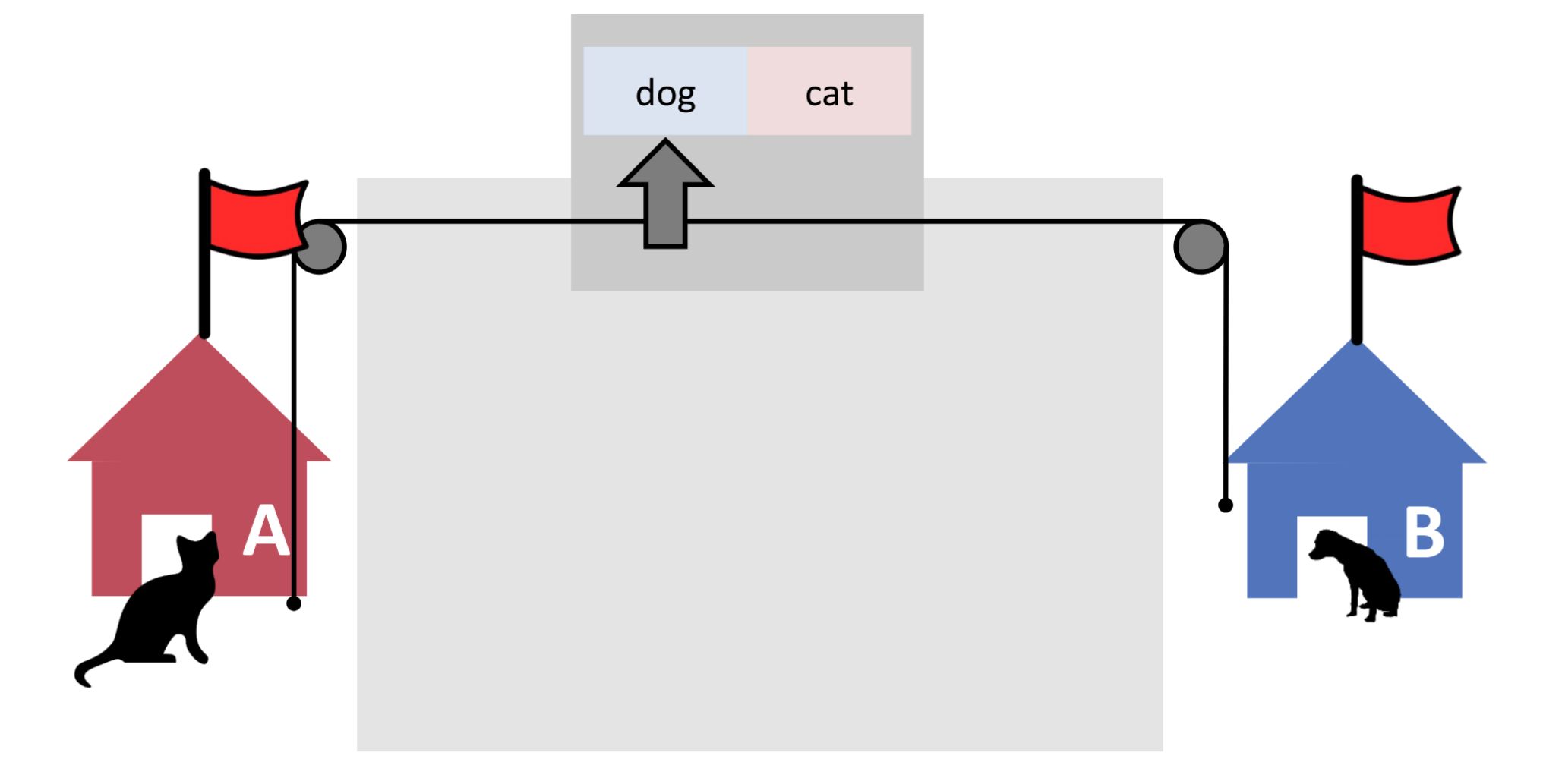

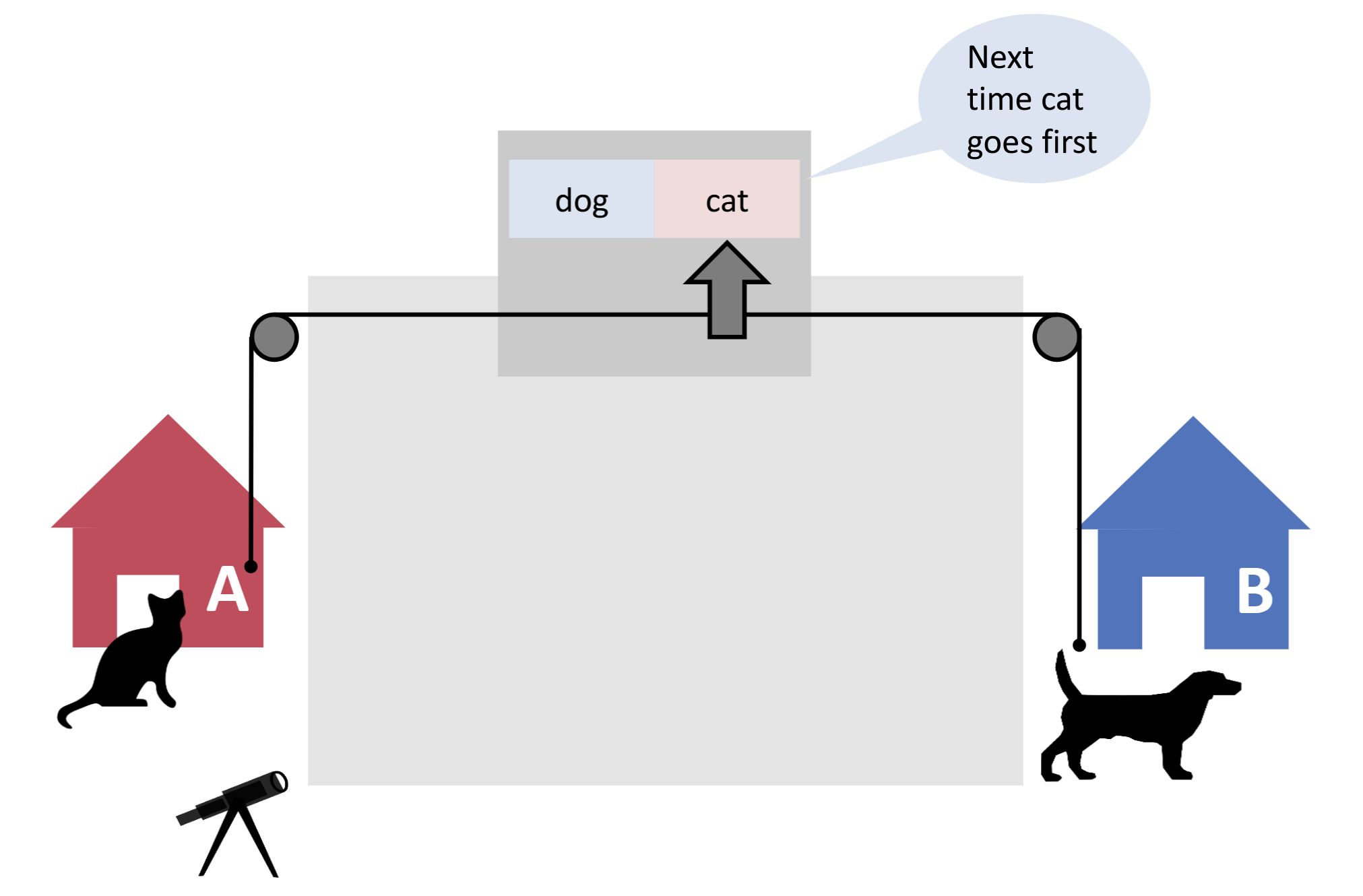

| Front |

How can we solve starvation in the cat and dog problem? |

|

| Back |

Combine flags with a turn-based determinator!<br><br><img src="paste-a9b6f4d9dcd618ad0a26b4482f5356d49d20cd09.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 37: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: PL8n[tJah;

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

In order to perform optimally, the JVM often needs warm-up time to 'learn' what kind of code is typically being executed.

Back

ETH::2._Semester::PProg::Terminology

In order to perform optimally, the JVM often needs warm-up time to 'learn' what kind of code is typically being executed.

This applies especially to the ForkJoin framework, which needs some time to optimally distribute tasks on threads.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

In order to perform optimally, the JVM often needs {{c1::warm-up}} time to {{c2::'learn' what kind of code is typically being executed}}. |

|

| Extra |

This applies especially to the ForkJoin framework, which needs some time to optimally distribute tasks on threads. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 38: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: Qj|fR)B

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Latency is an evaluation metric for pipelines that measures the time a pipeline needs to process a given work item.

Back

ETH::2._Semester::PProg::Terminology

Latency is an evaluation metric for pipelines that measures the time a pipeline needs to process a given work item.

e.g. a CPU instruction

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Latency}} is an evaluation metric for pipelines that measures {{c2::the time a pipeline needs to process a given work item}}. |

|

| Extra |

e.g. a CPU instruction |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 39: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: bD]7})PU$-

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Parallelism means doing multiple things at the same time.

Back

ETH::2._Semester::PProg::01._Introduction ETH::2._Semester::PProg::Terminology

Parallelism means doing multiple things at the same time.

(As opposed to concurrency: dealing with multiple things at the same time).

Performing computations simultaneously; either actually, if sufficient computation units are available, or virtually, via some form of alternation.

Often used interchangeably with concurrency.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Parallelism}} means {{c2::doing multiple things at the same time}}. |

|

| Extra |

(As opposed to concurrency: dealing with multiple things at the same time). <br><br>Performing computations simultaneously; either actually, if sufficient computation units are available, or virtually, via some form of alternation. <br><br>Often used interchangeably with concurrency. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

ETH::2._Semester::PProg::Terminology

Note 40: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: bI(*ZHmWA6

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

In terms of context switch, CPU needs to store/save the local data, program pointer etc. of the current thread/process, and load the local data, program pointer etc. of the next thread/process to execute.

Back

ETH::2._Semester::PProg::Terminology

In terms of context switch, CPU needs to store/save the local data, program pointer etc. of the current thread/process, and load the local data, program pointer etc. of the next thread/process to execute.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

During a context switch, the CPU needs to {{c1::store/save the local data, program counter etc. of the current thread/process}}, and {{c2::load the local data, program counter etc. of the next thread/process to execute}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 41: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: buqWa{Q2-l

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

What is the tradeoff between busy-waiting and blocking?

Back

ETH::2._Semester::PProg::Terminology

What is the tradeoff between busy-waiting and blocking?

busy waiting uses up CPU time, whereas blocking may cause additional context switches
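
The tradeoff can be made concrete with a minimal Java sketch. The class and method names (`WaitingDemo`, `busyWait`, `blockingWait`) are hypothetical and not from the course material; the first variant spins on a flag, the second sleeps on a `CountDownLatch` until it is woken up.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.atomic.AtomicBoolean;

class WaitingDemo {
    // Busy waiting: the waiter spins on a flag and uses CPU time until it is set.
    static int busyWait() {
        AtomicBoolean ready = new AtomicBoolean(false);
        int[] result = new int[1];
        Thread producer = new Thread(() -> {
            result[0] = 42;   // write the data first
            ready.set(true);  // then publish it via the flag
        });
        producer.start();
        while (!ready.get()) {
            Thread.onSpinWait(); // spin hint, available since Java 9
        }
        return result[0];
    }

    // Blocking: the waiter sleeps inside await() and is woken up by countDown().
    static int blockingWait() {
        CountDownLatch done = new CountDownLatch(1);
        int[] result = new int[1];
        Thread producer = new Thread(() -> {
            result[0] = 42;
            done.countDown();
        });
        producer.start();
        try {
            done.await(); // the thread is descheduled here, which may cost context switches
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return result[0];
    }

    public static void main(String[] args) {
        System.out.println(busyWait());
        System.out.println(blockingWait());
    }
}
```

Spinning tends to pay off only when the expected wait is very short; for longer waits, giving up the CPU is usually the better choice.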

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What is the tradeoff between busy-waiting and blocking? |

|

| Back |

busy waiting uses up CPU time, whereas blocking may cause additional context switches |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 42: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: b{kg%0Ane{

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Work partitioning is the split-up of a program into smaller tasks that can be executed in parallel.

Back

ETH::2._Semester::PProg::Terminology

Work partitioning is the split-up of a program into smaller tasks that can be executed in parallel.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Work partitioning}} is the {{c2::split-up of a program}} into smaller tasks that can be executed in {{c3::parallel}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 43: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: b~/=`@M)vU

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

In green threading, the JVM maps several threads to a single operating system thread.

Back

ETH::2._Semester::PProg::Terminology

In green threading, the JVM maps several threads to a single operating system thread.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

In {{c1::green threading}}, the JVM maps {{c2::several threads to a single operating system thread}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 44: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: d?(F&:%xxs

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

synchronized is a Java keyword, enforcing mutual exclusion for a critical section via some object's intrinsic lock.

Back

ETH::2._Semester::PProg::Terminology

synchronized is a Java keyword, enforcing mutual exclusion for a critical section via some object's intrinsic lock.
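
A minimal sketch of the keyword in use, assuming a hypothetical `SyncCounter` class: both the synchronized method and the synchronized block acquire the intrinsic lock of the same object (`this`), so the increments of the two threads cannot interleave.

```java
class SyncCounter {
    private int value = 0;

    // A synchronized instance method acquires the intrinsic lock of `this`.
    synchronized void increment() {
        value++; // critical section: read, add, write
    }

    // Equivalent form: a synchronized block that names the lock object explicitly.
    int get() {
        synchronized (this) {
            return value;
        }
    }

    // Runs two threads that each increment the same counter perThread times.
    static int countWithTwoThreads(int perThread) {
        SyncCounter counter = new SyncCounter();
        Runnable work = () -> {
            for (int i = 0; i < perThread; i++) {
                counter.increment();
            }
        };
        Thread a = new Thread(work);
        Thread b = new Thread(work);
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return counter.get();
    }

    public static void main(String[] args) {
        System.out.println(countWithTwoThreads(10_000));
    }
}
```

Without `synchronized` on `increment`, the two threads could lose updates and the final count could be lower than expected.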

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::synchronized}} is a Java keyword, enforcing {{c2::mutual exclusion}} for a critical section via some object's {{c3::intrinsic lock}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 45: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: e@.#qnN#(e

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Multiprocessing (or multitasking) is the concurrent execution of multiple tasks/processes, typically referring to parallelism on the operating system level.

Back

ETH::2._Semester::PProg::Terminology

Multiprocessing (or multitasking) is the concurrent execution of multiple tasks/processes, typically referring to parallelism on the operating system level.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Multiprocessing}} (or {{c2::multitasking}}) is the concurrent execution of {{c3::multiple tasks/processes}}, typically referring to parallelism on the {{c4::operating system level}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 46: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: eIYu#!KzX_

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction

\({{c1::\text{speedup (using p processors)} }} = \frac{ {{c2::\text{execution time (using 1 processor)} }} }{ {{c3::\text{execution time (using p processors)} }} }\)

Back

ETH::2._Semester::PProg::01._Introduction

\({{c1::\text{speedup (using p processors)} }} = \frac{ {{c2::\text{execution time (using 1 processor)} }} }{ {{c3::\text{execution time (using p processors)} }} }\)
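
As a worked example, with timings that are assumed purely for illustration and writing \(T_p\) for the execution time on \(p\) processors:

```latex
S_p = \frac{T_1}{T_p}, \qquad
T_1 = 12\,\text{s},\; T_4 = 4\,\text{s}
\;\Longrightarrow\;
S_4 = \frac{12\,\text{s}}{4\,\text{s}} = 3
```

In this made-up case, four processors give a speedup of 3, which is below the ideal value of 4.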

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

\({{c1::\text{speedup (using p processors)} }} = \frac{ {{c2::\text{execution time (using 1 processor)} }} }{ {{c3::\text{execution time (using p processors)} }} }\) |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

Note 47: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: e]#wx>XL,0

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Thread mapping describes how a Java/JVM thread is related to an operating system thread.

Back

ETH::2._Semester::PProg::Terminology

Thread mapping describes how a Java/JVM thread is related to an operating system thread.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Thread mapping}} describes how a Java/JVM thread is related to an operating system thread. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 48: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: fcsPFI,=e0

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Locality has several meanings in parallel programming:

- Locally reason about one thread at a time (thread modularity) - simplifies correctness arguments.

- Data locality: related memory locations are accessed shortly after each other - improves cache usage

- Code locality: straight-line code increases opportunities for instruction level parallelism.

Back

ETH::2._Semester::PProg::Terminology

Locality has several meanings in parallel programming:

- Locally reason about one thread at a time (thread modularity) - simplifies correctness arguments.

- Data locality: related memory locations are accessed shortly after each other - improves cache usage

- Code locality: straight-line code increases opportunities for instruction level parallelism.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Locality}} has several meanings in parallel programming: <br><br><ol><li>{{c2::Locally reason about one thread at a time}} (thread modularity) - simplifies correctness arguments.</li><li>{{c3::Data locality}}: related memory locations are accessed shortly after each other - improves cache usage</li><li>{{c4::Code locality}}: straight-line code increases opportunities for instruction level parallelism.</li></ol><br> |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 49: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: fn|<.%5[Cr

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A liveness property is a property of a system: "something good eventually happens". It can only be violated in infinite time. Infinite loops and starvation are typical violations of liveness properties.

Back

ETH::2._Semester::PProg::Terminology

A liveness property is a property of a system: "something good eventually happens". It can only be violated in infinite time. Infinite loops and starvation are typical violations of liveness properties.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::liveness property}} is a property of a system: {{c2::"something good eventually happens"}}. It can only be violated in {{c3::infinite time}}. {{c4::Infinite loops and starvation}} are typical violations of {{c1::liveness properties}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 50: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: g+Va^lhr7q

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

The scheduler interrupts/pauses/sends to sleep the currently running process (or thread), performs a context switch, and selects the next process (or thread) to run.

Back

ETH::2._Semester::PProg::Terminology

The scheduler interrupts/pauses/sends to sleep the currently running process (or thread), performs a context switch, and selects the next process (or thread) to run.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

The scheduler {{c1::interrupts/pauses/sends to sleep the currently running process (or thread)}}, {{c2::performs a context switch}}, and {{c3::selects the next process (or thread) to run}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 51: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: g9lF(k!=m>

deleted

Deleted Note

Front

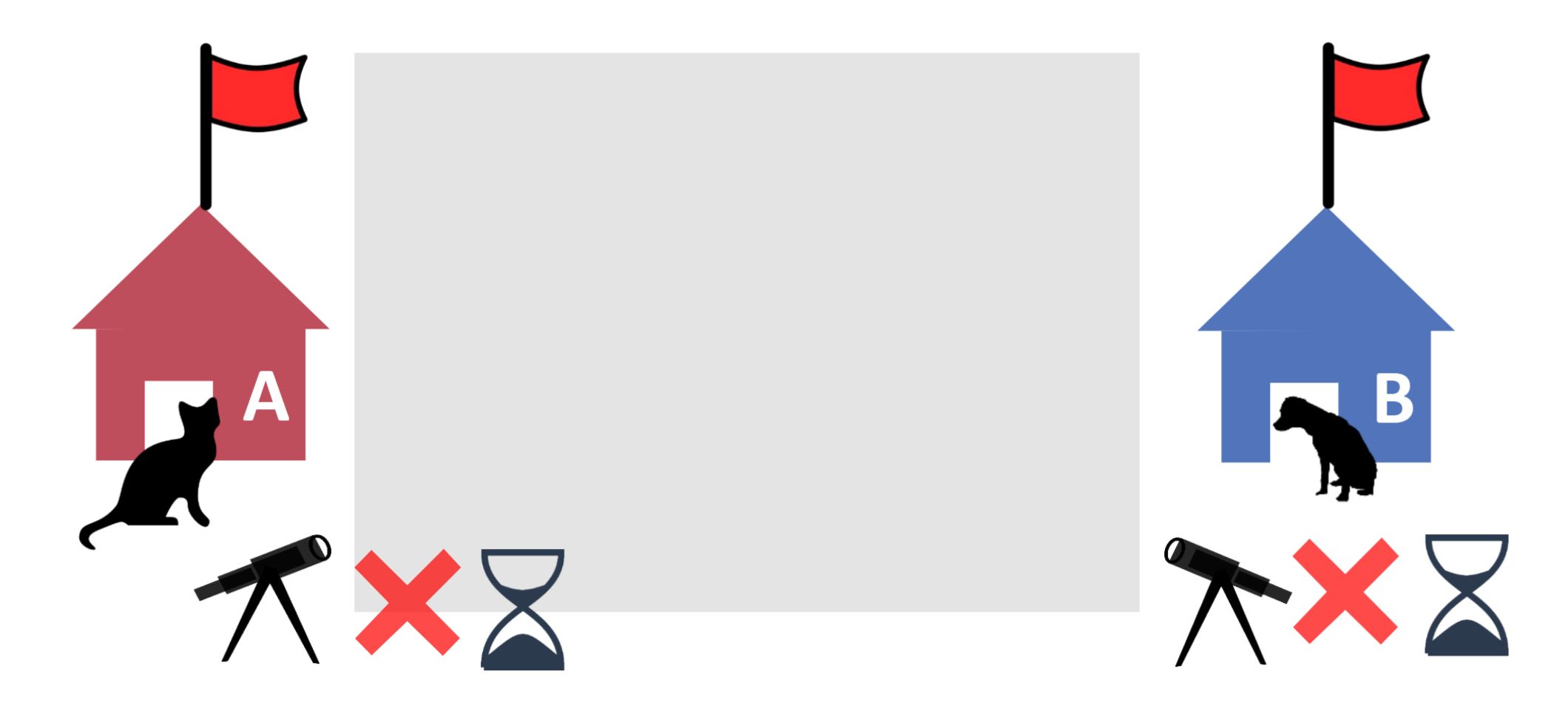

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Which problem remains here?

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Which problem remains here?

We still have the general problem of waiting!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

Which problem remains here?<br><br><img src="paste-6e904e009756ec14d14e931788f4b772eea35049.jpg"> |

|

| Back |

We still have the general problem of waiting!<br><br><img src="paste-bdfff43e8373bcf39038c2c2d9a30b7aa110d962.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 52: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: g?g2.P+F4z

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction::2._Producer-Consumer

What's one caveat of the Producer-Consumer pattern?

Back

ETH::2._Semester::PProg::01._Introduction::2._Producer-Consumer

What's one caveat of the Producer-Consumer pattern?

The order is not guaranteed!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What's one caveat of the Producer-Consumer pattern?<br><br><img src="paste-4aead4902f587a2bf7b8cbffb3972fca3d3a596b.jpg"> |

|

| Back |

The order is not guaranteed!<br><br><img src="paste-dbec8fb5ba0fa1863e35ee06d39eb8a6b41c6cd8.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::2._Producer-Consumer

Note 53: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: gX9o]qJfQD

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Code can be vectorised automatically, by compilers, or manually, by using intrinsics libraries provided by hardware vendors.

Back

ETH::2._Semester::PProg::Terminology

Code can be vectorised automatically, by compilers, or manually, by using intrinsics libraries provided by hardware vendors.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Code can be vectorised {{c1::automatically, by compilers}}, or {{c2::manually, by using intrinsics libraries}} provided by hardware vendors. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 54: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: g[MGo:#TN&

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Efficiency is heavily limited by the sequential part of a program.

Back

ETH::2._Semester::PProg::Terminology

Efficiency is heavily limited by the sequential part of a program.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Efficiency is heavily limited by {{c1::the sequential part of a program}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 55: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: glVh:^#cYn

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A statement is abstractly atomic if it appears atomic at a certain level of abstraction, but may take several steps at a lower level.

Back

ETH::2._Semester::PProg::Terminology

A statement is abstractly atomic if it appears atomic at a certain level of abstraction, but may take several steps at a lower level.

E.g. synchronized append(x) appears as one step to the caller, but not to the queue itself.
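
The `append` example could look roughly like the following sketch. `SimpleQueue` and its fixed-capacity array are assumptions made for illustration, not the course's queue. Callers see `append` as a single step, while the body takes two.

```java
class SimpleQueue {
    private final int[] items;
    private int size = 0;

    SimpleQueue(int capacity) {
        items = new int[capacity];
    }

    // To callers this is one atomic step; internally it consists of two steps.
    synchronized void append(int x) {
        items[size] = x;  // step 1: store the element
        size = size + 1;  // step 2: make it visible by advancing the size
    }

    synchronized int size() {
        return size;
    }

    // Two threads append perThread elements each to the same queue.
    static int appendFromTwoThreads(int perThread) {
        SimpleQueue queue = new SimpleQueue(2 * perThread);
        Runnable work = () -> {
            for (int i = 0; i < perThread; i++) {
                queue.append(i);
            }
        };
        Thread a = new Thread(work);
        Thread b = new Thread(work);
        a.start();
        b.start();
        try {
            a.join();
            b.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return queue.size();
    }

    public static void main(String[] args) {
        System.out.println(appendFromTwoThreads(1_000));
    }
}
```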

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A statement is {{c1::abstractly atomic}} if it {{c2::appears atomic at a certain level of abstraction}}, but {{c3::may take several steps at a lower level}}. |

|

| Extra |

E.g. <code>synchronized append(x)</code> appears as one step to the caller, but not to the queue itself. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 56: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: h?Bu3]o^d>

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Cilk-style programming is a parallel programming idiom: To compute a program, execute code and spawn new tasks if required. Before returning, wait for all spawned tasks to complete.

Back

ETH::2._Semester::PProg::Terminology

Cilk-style programming is a parallel programming idiom: To compute a program, execute code and spawn new tasks if required. Before returning, wait for all spawned tasks to complete.

The system manages the eventual execution of the spawned tasks potentially in parallel.
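
In Java, this idiom maps fairly directly onto the fork/join framework. The sketch below is one possible illustration, with a hypothetical `FibTask` class: `fork()` plays the role of spawn, and `join()` is the wait before returning. It deliberately uses no sequential cutoff, so it is meant for reading rather than for performance.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

class FibTask extends RecursiveTask<Long> {
    private final int n;

    FibTask(int n) {
        this.n = n;
    }

    @Override
    protected Long compute() {
        if (n < 2) {
            return (long) n; // base case: no tasks spawned
        }
        FibTask left = new FibTask(n - 1);
        left.fork();                                // spawn a new task
        long right = new FibTask(n - 2).compute();  // keep working in the current task
        return left.join() + right;                 // wait for the spawned task before returning
    }

    // The pool decides when and where the spawned tasks actually run.
    static long fib(int n) {
        return ForkJoinPool.commonPool().invoke(new FibTask(n));
    }

    public static void main(String[] args) {
        System.out.println(fib(15));
    }
}
```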

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Cilk-style programming}} is a parallel programming idiom: To compute a program, {{c2::execute code and spawn new tasks if required}}. Before returning, {{c3::wait for all spawned tasks to complete}}. |

|

| Extra |

The system manages the eventual execution of the spawned tasks potentially in parallel. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 57: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: h_KTBYg,v.

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A program has a data race if, during any possible execution, a memory location could be written from one thread, while concurrently being read or written from another thread.

Back

ETH::2._Semester::PProg::Terminology

A program has a data race if, during any possible execution, a memory location could be written from one thread, while concurrently being read or written from another thread.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A program has a {{c1::data race}} if, during any possible execution, a memory location could be {{c2::written from one thread}}, while concurrently being {{c3::read or written from another thread}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 58: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: hto57dfFuB

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

In comparison to threads, processes are more heavy-weight (since each is a whole program) and are encapsulated in memory.

Back

ETH::2._Semester::PProg::Terminology

In comparison to threads, processes are more heavy-weight (since each is a whole program) and are encapsulated in memory.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

In comparison to threads, processes are more {{c1::heavy-weight}} (since each is a whole program) and are {{c2::encapsulated in memory}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 59: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: hxi*,8Kfw7

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A thread is an independent (i.e. capable of running in parallel) unit of computation that executes a piece of code.

Back

ETH::2._Semester::PProg::Terminology

A thread is an independent (i.e. capable of running in parallel) unit of computation that executes a piece of code.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::thread}} is an {{c2::independent (i.e. capable of running in parallel) unit of computation}} that executes a piece of code. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 60: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: i&!tHL^UOI

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Absence of exceptions, absence of deadlocks, and mutual exclusion are typical safety properties.

Back

ETH::2._Semester::PProg::Terminology

Absence of exceptions, absence of deadlocks, and mutual exclusion are typical safety properties.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Absence of exceptions, absence of deadlocks, and mutual exclusion}} are typical {{c2::safety properties}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 61: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: iKAC-UBBQ1

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Cache coherence protocols are hardware protocols that ensure consistency across caches, typically by tracking which locations are cached, and synchronising them if necessary.

Back

ETH::2._Semester::PProg::Terminology

Cache coherence protocols are hardware protocols that ensure consistency across caches, typically by tracking which locations are cached, and synchronising them if necessary.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Cache coherence protocols}} are hardware protocols that {{c2::ensure consistency across caches}}, typically by {{c3::tracking which locations are cached, and synchronising them if necessary}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 62: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: iU_BOo;(GS

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

The problem size of a sequential cutoff should be significantly larger than the scheduling overhead to maintain efficiency.

Back

ETH::2._Semester::PProg::Terminology

The problem size of a sequential cutoff should be significantly larger than the scheduling overhead to maintain efficiency.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

The problem size of a sequential cutoff should be {{c1::significantly larger than the scheduling overhead}} to maintain efficiency. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 63: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: j_M%FT*ye3

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction

Parallel programming refers to dividing a problem into smaller tasks, which are processed at the same time on different computing resources to make the solution faster and more efficient.

Back

ETH::2._Semester::PProg::01._Introduction

Parallel programming refers to dividing a problem into smaller tasks, which are processed at the same time on different computing resources to make the solution faster and more efficient.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Parallel programming}} refers to dividing a problem into smaller tasks, which are processed at the same time on different computing resources to make the solution faster and more efficient. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction

Note 64: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: jxx{VYsKxH

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Divide and conquer style parallelism (also called recursive splitting) means: solve a problem by recursively solving smaller sub-problems and combining their results. Solve the sub-problems in separate threads to gain a speedup.

Back

ETH::2._Semester::PProg::Terminology

Divide and conquer style parallelism (also called recursive splitting) means: solve a problem by recursively solving smaller sub-problems and combining their results. Solve the sub-problems in separate threads to gain a speedup.
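
A minimal sketch of recursive splitting with plain Java threads, summing an array. The class name `ParallelSum` and the cutoff value of 1000 elements are assumptions chosen for illustration and are not tuned; below the cutoff the range is summed sequentially so that thread creation does not dominate the runtime.

```java
class ParallelSum {
    // Assumed sequential cutoff: ranges at most this long are summed without new threads.
    static final int CUTOFF = 1_000;

    // Sums a[lo..hi) by splitting the range in half recursively.
    static long sum(int[] a, int lo, int hi) {
        if (hi - lo <= CUTOFF) {
            long s = 0;
            for (int i = lo; i < hi; i++) {
                s += a[i];
            }
            return s;
        }
        int mid = lo + (hi - lo) / 2;
        long[] left = new long[1];
        // Solve the left half in a separate thread ...
        Thread t = new Thread(() -> left[0] = sum(a, lo, mid));
        t.start();
        // ... and the right half in the current thread.
        long right = sum(a, mid, hi);
        try {
            t.join(); // wait for the left half before combining
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return left[0] + right;
    }

    // Helper for the demo: sums the numbers 1..n stored in an array.
    static long sumOfOneTo(int n) {
        int[] a = new int[n];
        for (int i = 0; i < n; i++) {
            a[i] = i + 1;
        }
        return sum(a, 0, n);
    }

    public static void main(String[] args) {
        System.out.println(sumOfOneTo(10_000));
    }
}
```

Creating one thread per split is expensive; a task framework such as Java's fork/join pool is the more common choice in practice.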

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Divide and conquer style parallelism}} (also called {{c2::recursive splitting}}) means: solve a problem by {{c3::recursively solving smaller sub-problems and combining their results}}. Solve the sub-problems in {{c4::separate threads}} to gain a speedup. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 65: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: j}G/eI.jy%

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A context switch typically refers to switching between processes, but can also refer to switching between threads.

Back

ETH::2._Semester::PProg::Terminology

A context switch typically refers to switching between processes, but can also refer to switching between threads.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A context switch typically refers to {{c1::switching between processes}}, but can also refer {{c1::to switching between threads}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 66: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: k+S4n^4+Tx

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

What's the problem with alternation?

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

What's the problem with alternation?

Starvation!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

What's the problem with alternation?<br><br><img src="paste-ad8f32e8d8a4867f60aff4aeab512e322c67fe76.jpg"> |

|

| Back |

Starvation!<br><br><img src="paste-ee8a99e1b3f8ca1b947a3f128452c2831a7ce5ef.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 67: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: k.f)rxiy8y

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Threads of the same process live in the same memory space and can communicate with each other.

Back

ETH::2._Semester::PProg::Terminology

Threads of the same process live in the same memory space and can communicate with each other.
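
As an illustrative sketch (the class name `SharedMemoryDemo` is hypothetical), two threads can write into the same array because they share one memory space. Each thread writes a disjoint half, and the main thread reads the result only after `join()`.

```java
class SharedMemoryDemo {
    // Two threads fill disjoint halves of one shared array with squares.
    static int[] squaresFromTwoThreads() {
        int[] shared = new int[4];
        Thread lower = new Thread(() -> {
            for (int i = 0; i < 2; i++) {
                shared[i] = i * i;
            }
        });
        Thread upper = new Thread(() -> {
            for (int i = 2; i < 4; i++) {
                shared[i] = i * i;
            }
        });
        lower.start();
        upper.start();
        try {
            // join() guarantees that the writes are visible to the caller afterwards.
            lower.join();
            upper.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return shared;
    }

    public static void main(String[] args) {
        System.out.println(java.util.Arrays.toString(squaresFromTwoThreads()));
    }
}
```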

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Threads of the same process {{c1::live in the same memory space and can communicate with each other}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 68: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: kaCb;PSfJ_

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A safety property is a property of a system: "nothing bad ever happens". Can be violated in finite time.

Back

ETH::2._Semester::PProg::Terminology

A safety property is a property of a system: "nothing bad ever happens". Can be violated in finite time.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::safety property}} is a property of a system: {{c2::"nothing bad ever happens"}}. Can be violated in {{c3::finite time}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 69: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: lL_$?<@pF+

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

S_p means speedup with p processors

Back

ETH::2._Semester::PProg::Terminology

S_p means speedup with p processors

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::S_p}} means {{c2::speedup}} with p processors |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 70: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: l{B3CynHyc

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Process context is all state associated with a process

Back

ETH::2._Semester::PProg::Terminology

Process context is all state associated with a process

this includes CPU state (registers, program counter), program state (stack, heap, resource handles) and additional management information

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Process context}} is all state associated with a process |

|

| Extra |

this includes CPU state (registers, program counter), program state (stack, heap, resource handles) and additional management information |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 71: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: m$HzW2de@M

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

CISC stands for Complex Instruction Set Computer. A fundamental CPU architecture model with complex, feature-rich instructions.

Back

ETH::2._Semester::PProg::Terminology

CISC stands for Complex Instruction Set Computer. A fundamental CPU architecture model with complex, feature-rich instructions.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::CISC}} stands for {{c2::Complex Instruction Set Computer}}. A fundamental CPU architecture model with {{c3::complex, feature-rich instructions}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 72: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: m7?!UWmaST

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Throughput is an evaluation metric for pipelines that measures the amount of work that can be done by a pipeline in a given period of time.

Back

ETH::2._Semester::PProg::Terminology

Throughput is an evaluation metric for pipelines that measures the amount of work that can be done by a pipeline in a given period of time.

e.g. CPU instructions

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Throughput}} is an evaluation metric for pipelines that measures {{c2::the amount of work}} that can be done by a pipeline in a {{c3::given period of time}}. |

|

| Extra |

e.g. CPU instructions |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 73: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: mhRUoqJ}u<

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Speedup is an absolute value.

Back

ETH::2._Semester::PProg::Terminology

Speedup is an absolute value.

e.g. the amount of time saved

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Speedup is an {{c1::absolute value}}. |

|

| Extra |

e.g. the amount of time saved |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 74: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: n*pnVv+nib

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Access to shared resources needs synchronization.

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Access to shared resources needs synchronization.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Access to shared resources needs {{c1::synchronization}}. |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 75: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: nYv?sVRA/U

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Concurrency means dealing with multiple things at the same time. Involves managing shared resources and their interactions.

Back

ETH::2._Semester::PProg::Terminology

Concurrency means dealing with multiple things at the same time. Involves managing shared resources and their interactions.

Often used interchangeably with parallelism.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Concurrency}} means {{c2::dealing with multiple things at the same time}}. Involves {{c3::managing shared resources and their interactions}}. |

|

| Extra |

Often used interchangeably with parallelism. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 76: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: o?%~4t?Q]?

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Span is the critical path (height) of the task graph. It corresponds to T_∞.

Back

ETH::2._Semester::PProg::Terminology

Span is the critical path (height) of the task graph. It corresponds to T_∞.
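
A short worked bound, assuming the usual work/span model in which \(T_1\) denotes the total work (execution time on one processor) and \(T_p\) the time on \(p\) processors. The numbers are made up for illustration.

```latex
T_p \ge T_\infty
\quad\Longrightarrow\quad
S_p = \frac{T_1}{T_p} \le \frac{T_1}{T_\infty},
\qquad
T_1 = 7,\; T_\infty = 3
\;\Longrightarrow\;
S_p \le \frac{7}{3} \approx 2.33
```

Under these assumptions, no number of processors can push the speedup beyond the ratio of work to span.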

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Span}} is the {{c2::critical path (height)}} of the task graph. It corresponds to {{c3::T_∞}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 77: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: oG)e}QN;XP

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A program has a race condition if, during any possible execution with the same inputs, its observable behaviour (results, output, ...) may change if events happen in a different order.

Back

ETH::2._Semester::PProg::Terminology

A program has a race condition if, during any possible execution with the same inputs, its observable behaviour (results, output, ...) may change if events happen in a different order.

events: e.g. scheduler interactions causing different interleavings, changing network latency

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A program has a {{c1::race condition}} if, during any possible execution with the same inputs, its observable behaviour (results, output, ...) may change if {{c2::events happen in a different order}}. |

|

| Extra |

events: e.g. scheduler interactions causing different interleavings, changing network latency |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 78: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: oc}?&fK}O8

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

RISC stands for Reduced Instruction Set Computer. A fundamental CPU architecture model. Classical RISC is simpler: RISC instructions can only work on registers, and reading/writing memory are separate instructions.

Back

ETH::2._Semester::PProg::Terminology

RISC stands for Reduced Instruction Set Computer. A fundamental CPU architecture model. Classical RISC is simpler: RISC instructions can only work on registers, and reading/writing memory are separate instructions.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::RISC}} stands for {{c2::Reduced Instruction Set Computer}}. A fundamental CPU architecture model. Classical RISC is simpler: RISC instructions can only work on {{c3::registers}}, and reading/writing memory are {{c4::separate instructions}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 79: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: orPo?8l!zc

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

A task graph is a graph (DAG) created by drawing nodes (tasks) and edges (spawns, joins).

Back

ETH::2._Semester::PProg::Terminology

A task graph is a graph (DAG) created by drawing nodes (tasks) and edges (spawns, joins).

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

A {{c1::task graph}} is a graph ({{c2::DAG}}) created by drawing {{c3::nodes (tasks)}} and {{c4::edges (spawns, joins)}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology
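
To make the spawn and join edges concrete, here is a small sketch using Java's fork/join framework. The class `SumTask`, the helper `sum`, and the cutoff of 1,000 elements are assumptions made for this example. Each `SumTask` instance corresponds to a node of the task graph, `fork()` to a spawn edge, and `join()` to a join edge; since a task only joins tasks it spawned earlier, the resulting graph is acyclic.

```java
import java.util.concurrent.ForkJoinPool;
import java.util.concurrent.RecursiveTask;

// Illustrative sketch only; names and the cutoff value are assumptions.
final class SumTask extends RecursiveTask<Long> {
    private static final int CUTOFF = 1_000; // below this size, sum sequentially
    private final int[] data;
    private final int lo;
    private final int hi;

    SumTask(int[] data, int lo, int hi) {
        this.data = data;
        this.lo = lo;
        this.hi = hi;
    }

    @Override
    protected Long compute() {
        if (hi - lo <= CUTOFF) {            // leaf node of the task graph
            long s = 0;
            for (int i = lo; i < hi; i++) s += data[i];
            return s;
        }
        int mid = lo + (hi - lo) / 2;
        SumTask left = new SumTask(data, lo, mid);
        SumTask right = new SumTask(data, mid, hi);
        left.fork();                         // spawn edge: left may run in parallel
        long rightSum = right.compute();     // right half runs in the current task
        return left.join() + rightSum;       // join edge: wait for the spawned task
    }

    /** Sums the array by submitting the root task to the common pool. */
    static long sum(int[] data) {
        return ForkJoinPool.commonPool().invoke(new SumTask(data, 0, data.length));
    }
}
```

A call such as `SumTask.sum(array)` builds a binary tree of spawns on the way down and a matching tree of joins on the way up.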

Note 80: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: o~aP685V>p

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Depending on the size of the context, a context switch might be computationally expensive.

Back

ETH::2._Semester::PProg::Terminology

Depending on the size of the context, a context switch might be computationally expensive.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

Depending on the size of the context, a context switch might be {{c1::computationally expensive}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 81: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: pO7Qm0Zc[}

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Busy waiting occurs when a thread busily (actively) waits, e.g. by spinning in a loop, for a condition to become true.

Back

ETH::2._Semester::PProg::Terminology

Busy waiting occurs when a thread busily (actively) waits, e.g. by spinning in a loop, for a condition to become true.

In the opposite scenario, the thread sleeps (i.e. is blocked) until the condition becomes true.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Busy waiting}} occurs when a thread {{c2::busily (actively) waits}}, e.g. by spinning in a loop, for a condition to become true. |

|

| Extra |

In the opposite scenario, the thread sleeps (i.e. is blocked) until the condition becomes true. |

|

Tags:

ETH::2._Semester::PProg::Terminology
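
The contrast between busy waiting and blocking can be sketched in Java as follows. The class `WaitingSketch` and its method names are hypothetical, and `CountDownLatch` is just one of several ways to block until a condition holds. In the first method the waiting thread spins on a `volatile` flag; in the second it sleeps inside `await()` until another thread signals it.

```java
import java.util.concurrent.CountDownLatch;

// Illustrative sketch only; names are assumptions, not taken from the course material.
final class WaitingSketch {
    // volatile so that the spinning thread is guaranteed to observe the write
    private static volatile boolean ready;

    /** Busy waiting: the caller keeps a core busy until the flag flips. */
    static boolean spinUntilReady() {
        ready = false;
        Thread setter = new Thread(() -> ready = true);
        setter.start();
        while (!ready) {
            Thread.onSpinWait(); // spin hint, available since Java 9
        }
        return ready;
    }

    /** Blocking: the caller sleeps inside await() until countDown() is called. */
    static long blockUntilReady() {
        CountDownLatch latch = new CountDownLatch(1);
        Thread setter = new Thread(latch::countDown);
        setter.start();
        try {
            latch.await();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
        return latch.getCount(); // 0 once the condition has become true
    }

    public static void main(String[] args) {
        System.out.println("spin saw flag: " + spinUntilReady());
        System.out.println("latch count after await: " + blockUntilReady());
    }
}
```

Spinning can react quickly when the wait is very short, but it occupies a core the whole time; blocking frees the core at the cost of involving the scheduler.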

Note 82: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID: q#;#HVzA)B

deleted

Deleted Note

Front

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Why does checking flags twice not work in the notification system?

Back

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Why does checking flags twice not work in the notification system?

We could reach a deadlock!

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Front |

Why does checking flags twice not work in the notification system?<br><br><img src="paste-11ce087816d909219d34a0506969277618d007da.jpg"> |

|

| Back |

We could reach a deadlock!<br><br><img src="paste-1efbb84fb9f56ed0de39e234d255f35cc07e9cf8.jpg"> |

|

Tags:

ETH::2._Semester::PProg::01._Introduction::1._Mutual_Exclusion

Note 83: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: q5sPBXAN&z

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Instruction level parallelism (ILP) is CPU-internal parallelisation of independent instructions, with the goal of improving performance by increasing utilisation of a CPU's functional units.

Back

ETH::2._Semester::PProg::Terminology

Instruction level parallelism (ILP) is CPU-internal parallelisation of independent instructions, with the goal of improving performance by increasing utilisation of a CPU's functional units.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Instruction level parallelism (ILP)}} is {{c2::CPU-internal parallelisation}} of independent instructions, with the goal of improving performance by {{c3::increasing utilisation of a CPU's functional units}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 84: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: qEgTjb0lpA

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

The sequential part is the part of a given program that can't be executed in parallel. It limits the maximum speedup.

Back

ETH::2._Semester::PProg::Terminology

The sequential part is the part of a given program that can't be executed in parallel. It limits the maximum speedup.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

The {{c1::sequential part}} is the part of a given program that {{c2::can't be executed in parallel}}. It limits the {{c3::maximum speedup}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology
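
How the sequential part limits speedup can be made concrete with Amdahl's law, which this card does not name explicitly. A minimal sketch, assuming a sequential fraction `f` of the total work and `p` processors, with hypothetical class and method names: the speedup is at most 1 / (f + (1 - f) / p), which approaches 1 / f as p grows.

```java
// Illustrative sketch only; names and the example fraction are assumptions.
final class AmdahlSketch {
    /** Upper bound on speedup for sequential fraction f (0 < f <= 1) on p processors. */
    static double speedup(double f, int p) {
        return 1.0 / (f + (1.0 - f) / p);
    }

    /** Limit of speedup(f, p) as p grows without bound. */
    static double maxSpeedup(double f) {
        return 1.0 / f;
    }

    public static void main(String[] args) {
        double f = 0.2; // assumed: 20% of the work is sequential
        for (int p : new int[] {1, 2, 8, 64, 1024}) {
            System.out.printf("p=%d speedup=%.2f%n", p, speedup(f, p));
        }
        System.out.printf("limit=%.2f%n", maxSpeedup(f));
    }
}
```

With a sequential part of 20%, no number of processors yields a speedup above 5.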

Note 85: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: qMbl>8{=0E

deleted

Deleted Note

Front

ETH::2._Semester::PProg::Terminology

Data race is often used interchangeably with race condition.

Back

ETH::2._Semester::PProg::Terminology

Data race is often used interchangeably with race condition.

Current

Note has been deleted

Field-by-field Comparison

| Field |

Before |

After |

| Text |

{{c1::Data race}} is often used interchangeably with {{c2::race condition}}. |

|

Tags:

ETH::2._Semester::PProg::Terminology

Note 86: ETH::2. Semester::PProg

Deck: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID: qWB`2aaYqS

deleted

Deleted Note

Front