Runtime of Johnson's Algorithm?

Note 1: ETH::A&D

Deck: ETH::A&D

Note Type: Algorithms

GUID:

modified

Note Type: Algorithms

GUID:

DeJ!2ph{Al

Before

Front

Back

Runtime of Johnson's Algorithm?

\(O(|V| \cdot (|V| + |E|) \log |V|)\) (running dijkstra's n times, but allows negatives)

After

Front

Runtime of Johnson's Algorithm?

Back

Runtime of Johnson's Algorithm?

\(O(|V| \cdot (|V| + |E|) \log |V|)\) (running dijkstra's n times, but allows negatives)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

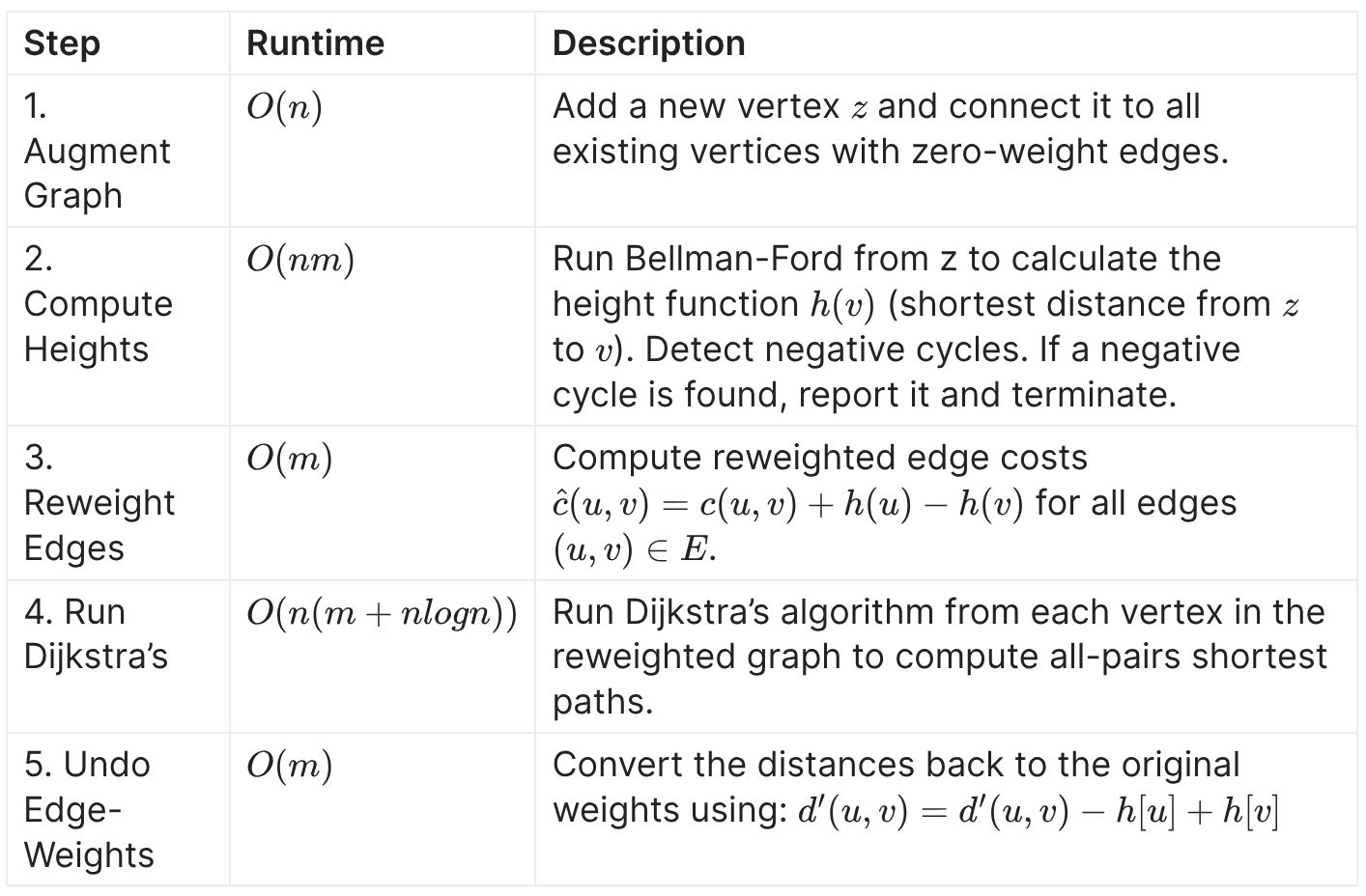

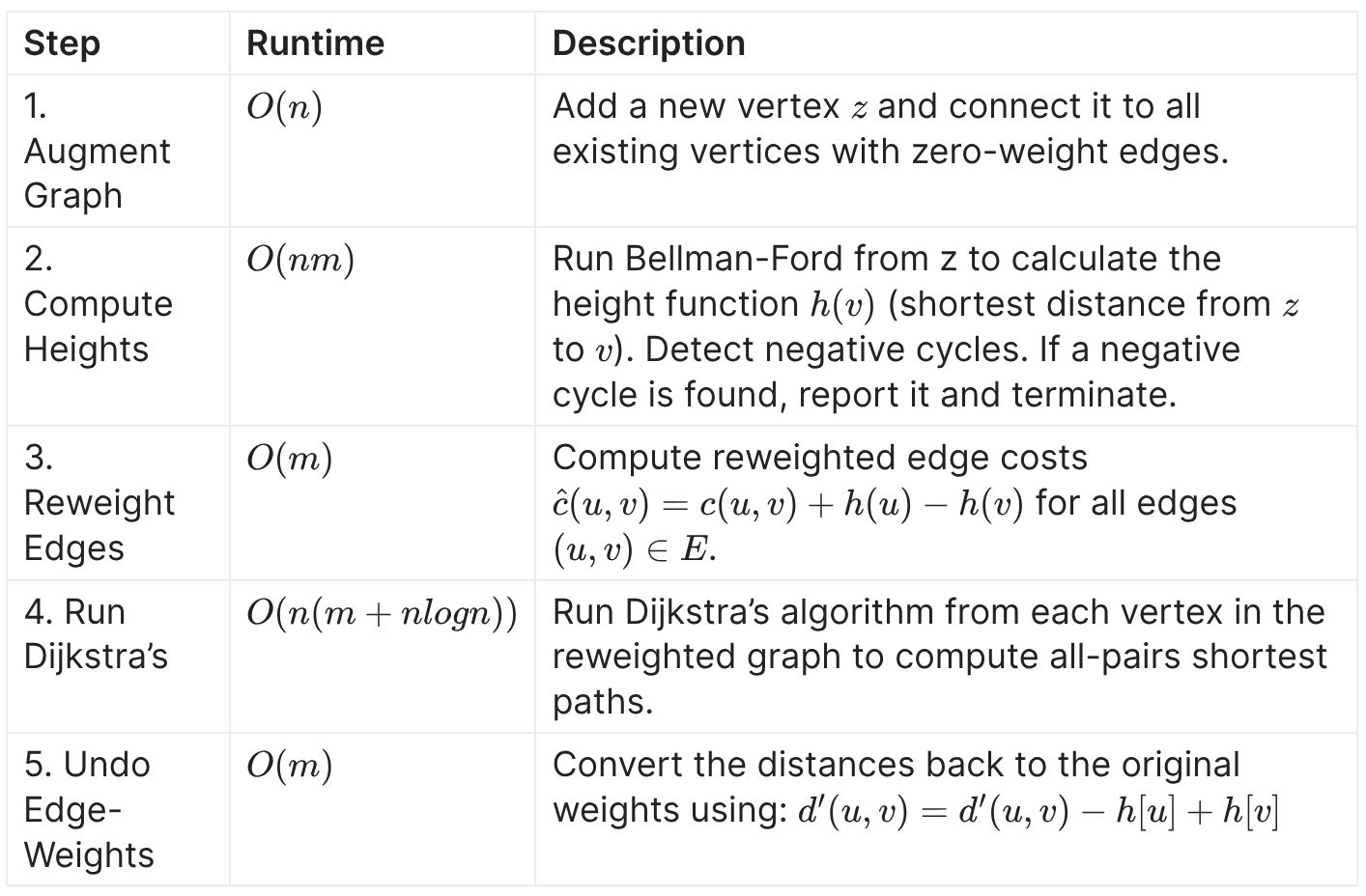

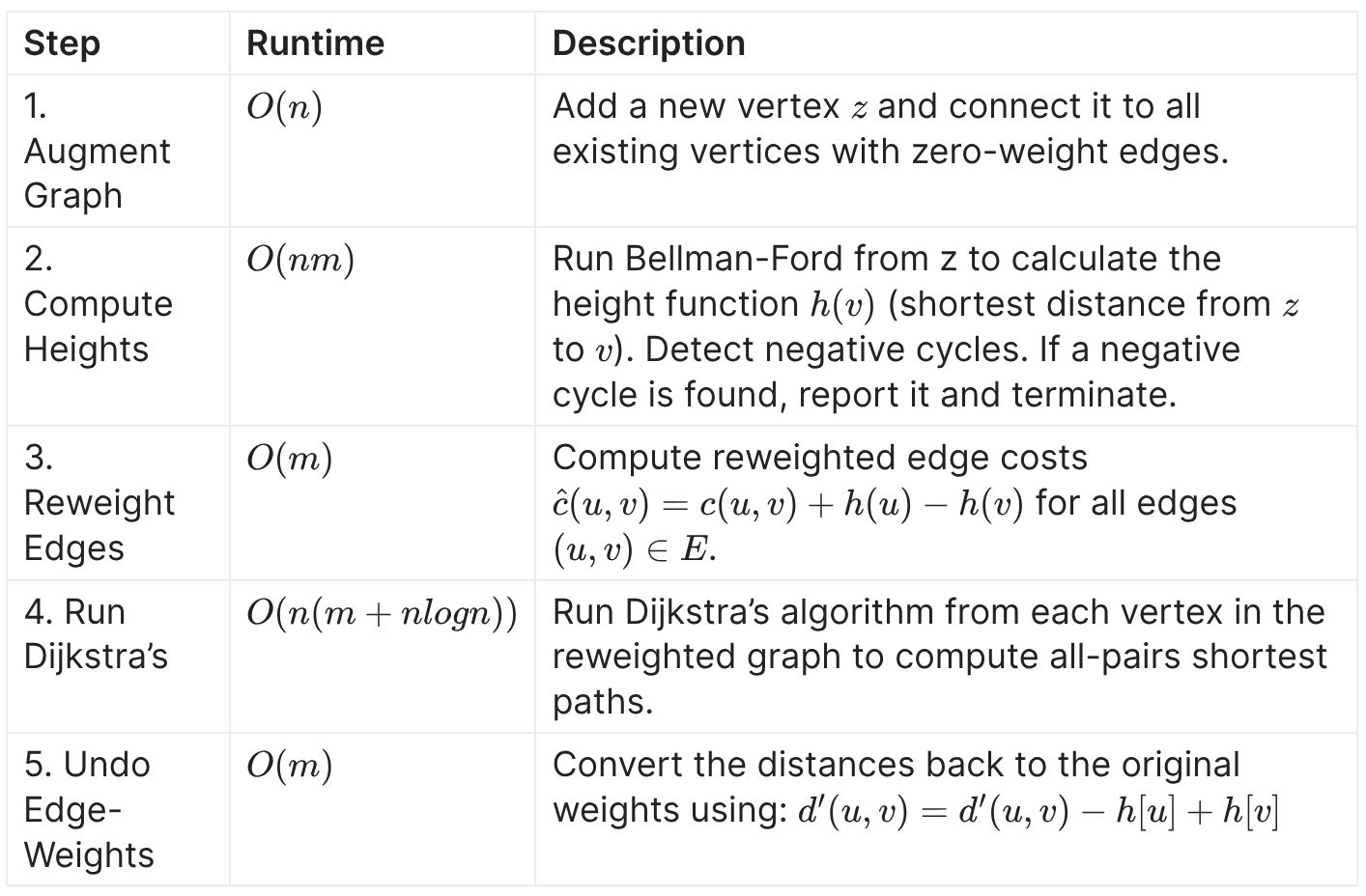

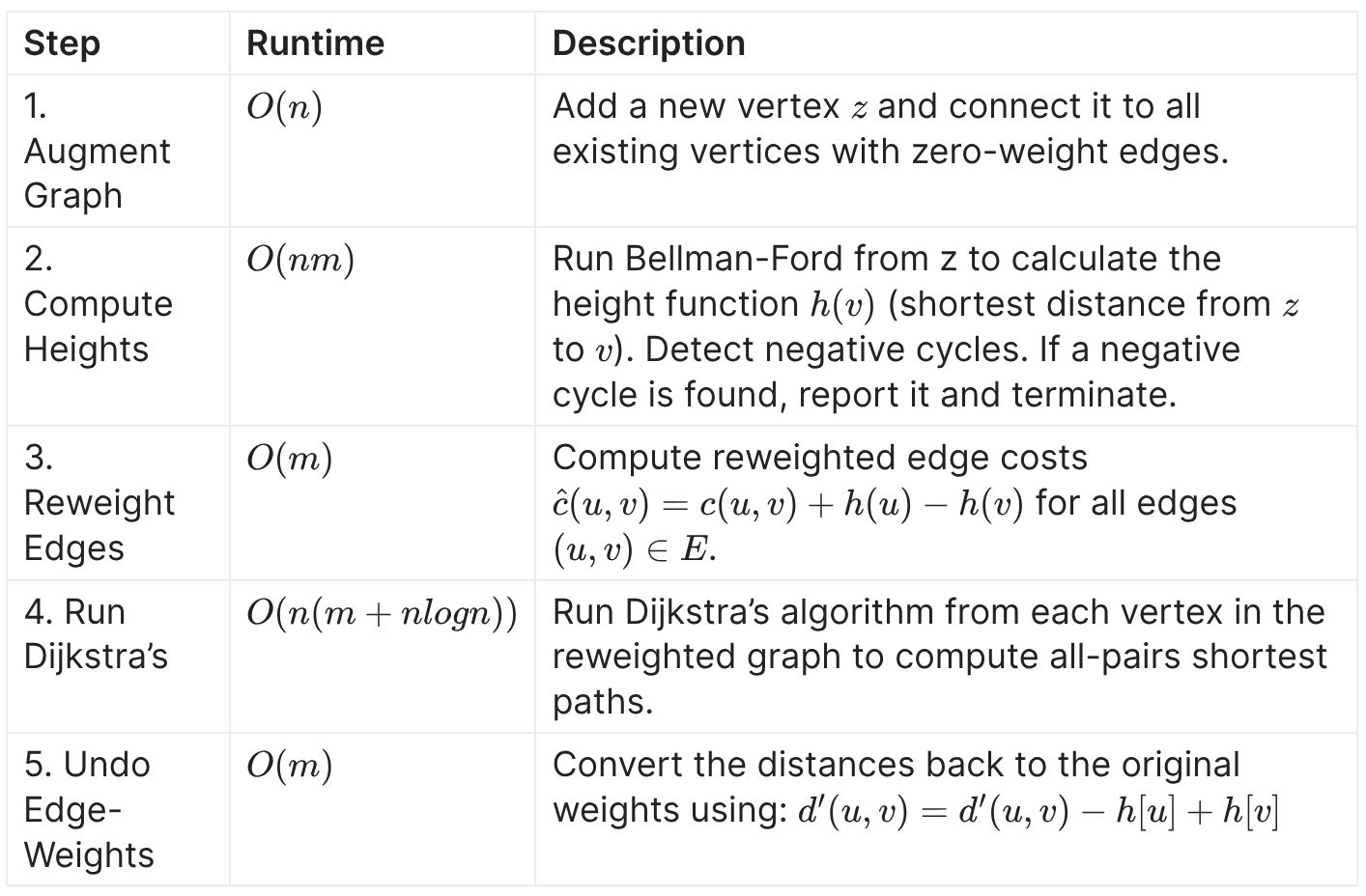

| Approach | <ol><li><b>Add a New Vertex:</b><ul><li>Add a new vertex s to the graph and connect it to all vertices with zero-weight edges. </li>

</ul></li><li><b>Run Bellman-Ford</b>:<ul><li>Use the Bellman-Ford algorithm starting from s to compute the shortest distance h[v] from s to each vertex v.</li><li>If a negative-weight cycle is detected, stop.</li></ul></li><li><b>Reweight Edges</b>: <ul><li>For each edge u → v with weight w(u, v), reweight it as: w′(u, v) = w(u, v) + h[u] − h[v]</li><li>This ensures all edge weights are non-negative.</li>

</ul> </li><li><b>Run Dijkstra’s Algorithm:</b><ul><li>For each vertex v, use Dijkstra’s algorithm to compute the shortest paths to all other vertices.</li>

</ul></li><li><b>Adjust Back</b>:<ul><li>Convert the distances back to the original weights using: d′(u, v) = d′(u, v) − h[u] + h[v]</li>

</ul></li><li><b>End:</b></li><ul><li>The resulting shortest path distances between all pairs of vertices are valid.</li></ul></ol><div>The overall higher cost allows us to run pre-computation steps like B |

<ol><li><b>Add a New Vertex:</b><ul><li>Add a new vertex s to the graph and connect it to all vertices with zero-weight edges. </li> </ul></li><li><b>Run Bellman-Ford</b>:<ul><li>Use the Bellman-Ford algorithm starting from s to compute the shortest distance h[v] from s to each vertex v.</li><li>If a negative-weight cycle is detected, stop.</li></ul></li><li><b>Reweight Edges</b>: <ul><li>For each edge u → v with weight w(u, v), reweight it as: w′(u, v) = w(u, v) + h[u] − h[v]</li><li>This ensures all edge weights are non-negative.</li> </ul> </li><li><b>Run Dijkstra’s Algorithm:</b><ul><li>For each vertex v, use Dijkstra’s algorithm to compute the shortest paths to all other vertices.</li> </ul></li><li><b>Adjust Back</b>:<ul><li>Convert the distances back to the original weights using: d′(u, v) = d′(u, v) − h[u] + h[v]</li> </ul></li><li><b>End:</b></li><ul><li>The resulting shortest path distances between all pairs of vertices are valid.</li></ul></ol><div>The overall higher cost allows us to run pre-computation steps like Bellman-Ford for "free".</div> |

Note 2: ETH::A&D

Deck: ETH::A&D

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

hsaD+h{~n8

Before

Front

Reweighting in Johnson's algorithm:

- We add a vertex \(s\) and add a 0 cost edge from it to all vertices.

- We then run B-F which determines the height of each vertex by the d[v] from start vertex \(s\)

Back

Reweighting in Johnson's algorithm:

- We add a vertex \(s\) and add a 0 cost edge from it to all vertices.

- We then run B-F which determines the height of each vertex by the d[v] from start vertex \(s\)

After

Front

Reweighting in Johnson's algorithm:

- We add a vertex \(s\) and add a 0 cost edge from it to all vertices.

- We then run Bellman-Ford which determines the height of each vertex by the d[v] from start vertex \(s\)

Back

Reweighting in Johnson's algorithm:

- We add a vertex \(s\) and add a 0 cost edge from it to all vertices.

- We then run Bellman-Ford which determines the height of each vertex by the d[v] from start vertex \(s\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Reweighting in Johnson's algorithm:<br><ol><li>We {{c1::add a vertex \(s\)}} and {{c1::add a 0 cost edge from it to all vertices}}.</li><li>We then {{c2::run B |

Reweighting in Johnson's algorithm:<br><ol><li>We {{c1::add a vertex \(s\)}} and {{c1::add a 0 cost edge from it to all vertices}}.</li><li>We then {{c2::run Bellman-Ford which determines the height of each vertex by the d[v] from start vertex \(s\)}} </li></ol> |

Note 3: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

G9![k&wZRU

Before

Front

Given a symmetric matrix \(A \in \mathbb{R}^{n \times n}\) the Rayleigh Quotient defined for \(x \in \mathbb{R}^n \setminus {0}\), as \[ R(x) = {{c1::\frac{x^\top Ax}{x^\top x} }}\]attains it’s

- maximum at \(R(v_{\text{max) = \lambda_{\text{max}}\)}}

- minimum at \(R(v_{\text{min) = \lambda_{\text{min}}\)}}

where \(\lambda_{\text{max\) and \(\lambda_{\text{min}}\) are respectively the largest and smallest eigenvalues of \(A\) and \(v_{\text{max}}\) and \(v_{\text{min}}\) their associated eigenvectors}}.

Back

Given a symmetric matrix \(A \in \mathbb{R}^{n \times n}\) the Rayleigh Quotient defined for \(x \in \mathbb{R}^n \setminus {0}\), as \[ R(x) = {{c1::\frac{x^\top Ax}{x^\top x} }}\]attains it’s

- maximum at \(R(v_{\text{max) = \lambda_{\text{max}}\)}}

- minimum at \(R(v_{\text{min) = \lambda_{\text{min}}\)}}

where \(\lambda_{\text{max\) and \(\lambda_{\text{min}}\) are respectively the largest and smallest eigenvalues of \(A\) and \(v_{\text{max}}\) and \(v_{\text{min}}\) their associated eigenvectors}}.

After

Front

Given a symmetric matrix \(A \in \mathbb{R}^{n \times n}\) the Rayleigh Quotient defined for \(x \in \mathbb{R}^n \setminus {0}\), as \[ R(x) = {{c1::\frac{x^\top Ax}{x^\top x} }}\]attains it’s

- maximum at {{c2::\(R(v_{\text{max} }) = \lambda_{\text{max} }\)}}

- minimum at {{c2::\(R(v_{\text{min} }) = \lambda_{\text{min} } \)}}

where {{c2::\(\lambda_{\text{max} }\) and \(\lambda_{\text{min} }\) are respectively the largest and smallest eigenvalues of \(A\) and \(v_{\text{max} }\) and \(v_{\text{min} }\) their associated eigenvectors}}.

Back

Given a symmetric matrix \(A \in \mathbb{R}^{n \times n}\) the Rayleigh Quotient defined for \(x \in \mathbb{R}^n \setminus {0}\), as \[ R(x) = {{c1::\frac{x^\top Ax}{x^\top x} }}\]attains it’s

- maximum at {{c2::\(R(v_{\text{max} }) = \lambda_{\text{max} }\)}}

- minimum at {{c2::\(R(v_{\text{min} }) = \lambda_{\text{min} } \)}}

where {{c2::\(\lambda_{\text{max} }\) and \(\lambda_{\text{min} }\) are respectively the largest and smallest eigenvalues of \(A\) and \(v_{\text{max} }\) and \(v_{\text{min} }\) their associated eigenvectors}}.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <div>Given a symmetric matrix \(A \in \mathbb{R}^{n \times n}\) the Rayleigh Quotient defined for \(x \in \mathbb{R}^n \setminus {0}\), as \[ R(x) = {{c1::\frac{x^\top Ax}{x^\top x} }}\]attains it’s</div><div><ul><li> |

<div>Given a symmetric matrix \(A \in \mathbb{R}^{n \times n}\) the Rayleigh Quotient defined for \(x \in \mathbb{R}^n \setminus {0}\), as \[ R(x) = {{c1::\frac{x^\top Ax}{x^\top x} }}\]attains it’s</div><div><ul><li>maximum at {{c2::\(R(v_{\text{max} }) = \lambda_{\text{max} }\)}}</li><li>minimum at {{c2::\(R(v_{\text{min} }) = \lambda_{\text{min} } \)}}</li></ul><div>where {{c2::\(\lambda_{\text{max} }\) and \(\lambda_{\text{min} }\) are respectively the largest and smallest eigenvalues of \(A\) and \(v_{\text{max} }\) and \(v_{\text{min} }\) their associated eigenvectors}}.</div></div><blockquote><ul> </ul></blockquote> |

Note 4: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

M;:>m2mzC#

Before

Front

Let \(A \in \mathbb{R}^{m \times n}\). Then \(N(A) = N(A^\top A)\). Proof Included

Back

Let \(A \in \mathbb{R}^{m \times n}\). Then \(N(A) = N(A^\top A)\). Proof Included

\(N(A) = N(A^\top A)\) holds because:

- if \(x \in N(A)\) then \(Ax = 0 \implies A^\top Ax = A \cdot 0 \implies A^\top A x = 0\).

- if \(x \in N(A^\top A)\) then \(A^\top A x = 0\), which means \[ 0 = x^\top 0 = x^\top A^\top Ax = (Ax)^\top(Ax) = ||Ax||^2 \implies Ax = 0 \]

After

Front

Let \(A \in \mathbb{R}^{m \times n}\). Then \(N(A) = {{c1::N(A^\top A)::\text{another nullspace} }}\). Proof Included

Back

Let \(A \in \mathbb{R}^{m \times n}\). Then \(N(A) = {{c1::N(A^\top A)::\text{another nullspace} }}\). Proof Included

\(N(A) = N(A^\top A)\) holds because:

- if \(x \in N(A)\) then \(Ax = 0 \implies A^\top Ax = A \cdot 0 \implies A^\top A x = 0\).

- if \(x \in N(A^\top A)\) then \(A^\top A x = 0\), which means \[ 0 = x^\top 0 = x^\top A^\top Ax = (Ax)^\top(Ax) = ||Ax||^2 \implies Ax = 0 \]

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Let \(A \in \mathbb{R}^{m \times n}\). Then \(N(A) = {{c1::N(A^\top A)}}\). <i>Proof Included</i> | Let \(A \in \mathbb{R}^{m \times n}\). Then \(N(A) = {{c1::N(A^\top A)::\text{another nullspace} }}\). <i>Proof Included</i> |

Note 5: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

OYJ^1jnB1-

Before

Front

Real antisymmetric matrices always have imaginary (or zero) eigenvalues.

Back

Real antisymmetric matrices always have imaginary (or zero) eigenvalues.

After

Front

Real antisymmetric matrices always have imaginary (or zero) eigenvalues.

Back

Real antisymmetric matrices always have imaginary (or zero) eigenvalues.

Antisymmetric means \(A^T=-A\).

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Extra | Antisymmetric means \(A^T=-A\). |

Note 6: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

Pa)fnn7&WJ

Before

Front

\(A \in \mathbb{R}^{n \times n}\) arbitrary non-symmetric has rank \(n - \dim(N(A))\) so it's \(n\) minus the geometric multiplicity of \(\lambda = 0\) .

Back

\(A \in \mathbb{R}^{n \times n}\) arbitrary non-symmetric has rank \(n - \dim(N(A))\) so it's \(n\) minus the geometric multiplicity of \(\lambda = 0\) .

After

Front

\(A \in \mathbb{R}^{n \times n}\) arbitrary non-symmetric has rank \(n - \dim(N(A))\) so it's \(n\) minus the geometric multiplicity of \(\lambda = 0\) .

Back

\(A \in \mathbb{R}^{n \times n}\) arbitrary non-symmetric has rank \(n - \dim(N(A))\) so it's \(n\) minus the geometric multiplicity of \(\lambda = 0\) .

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | \(A \in \mathbb{R}^{n \times n}\) arbitrary non-symmetric has rank {{c1:: |

\(A \in \mathbb{R}^{n \times n}\) arbitrary non-symmetric has rank {{c1:: \(n - \dim(N(A))\) so it's \(n\) minus the geometric multiplicity of \(\lambda = 0\) ::in terms of multiplicities}}. |

Note 7: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

d9#?3c)V#_

Before

Front

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda \in \mathbb{C}\) be an eigenvalue of \(A\), then {{c1::\(\lambda \in \mathbb{R}\):: property of the EW}}. Proof Included

Back

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda \in \mathbb{C}\) be an eigenvalue of \(A\), then {{c1::\(\lambda \in \mathbb{R}\):: property of the EW}}. Proof Included

Proof \(v \in \mathbb{C}^n\) be EV of \(\lambda\). Thus we have \(Av = \lambda v\). Since \(A\) is symmetric we have \(A^ = A\). \[\begin{align} \overline{\lambda}||v||^2 &= \overline{\lambda} v^*v \\ &= (\lambda v)^*v \\ &= (Av)^*v = v^*A^*v \\ &= v^* Av \text{ (uses } A^* = A \text{) } \\ &= v^*\lambda v \\ &= \lambda ||v||^2 \end{align}\]Since \(v \neq 0\), then \(||v|| \neq 0\) and so \(\lambda = \overline{\lambda}\) thus \(\lambda \in \mathbb{R}\).

After

Front

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda \in \mathbb{C}\) be an eigenvalue of \(A\), then {{c1::\(\lambda \in \mathbb{R}\)::property of the EW}}. Proof Included

Back

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda \in \mathbb{C}\) be an eigenvalue of \(A\), then {{c1::\(\lambda \in \mathbb{R}\)::property of the EW}}. Proof Included

Proof \(v \in \mathbb{C}^n\) be EV of \(\lambda\). Thus we have \(Av = \lambda v\). Since \(A\) is symmetric we have \(A^ = A\). \[\begin{align} \overline{\lambda}||v||^2 &= \overline{\lambda} v^*v \\ &= (\lambda v)^*v \\ &= (Av)^*v = v^*A^*v \\ &= v^* Av \text{ (uses } A^* = A \text{) } \\ &= v^*\lambda v \\ &= \lambda ||v||^2 \end{align}\]Since \(v \neq 0\), then \(||v|| \neq 0\) and so \(\lambda = \overline{\lambda}\) thus \(\lambda \in \mathbb{R}\).

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda \in \mathbb{C}\) be an eigenvalue of \(A\), then {{c1::\(\lambda \in \mathbb{R}\):: |

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda \in \mathbb{C}\) be an eigenvalue of \(A\), then {{c1::\(\lambda \in \mathbb{R}\)::property of the EW}}. <i>Proof Included</i> |

Note 8: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

dZ)aTr>2eb

Before

Front

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\). Proof Included

Back

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\). Proof Included

Shared EWs: For \((A\top A)v_k = \lambda_k v_k\) we get \(AA^\top A v_k = \lambda_k Av_k\) and thus \(Av_k\) EV and \(\lambda_k\) is an EW of \(AA^\top\).

Orthogonality: For \(j \neq k\) we have \((Av_j)^\top (Av_k) = v_j^\top A^\top Av_k = v_j^\top \lambda_k v_k = \lambda_k v_j^\top v_k = 0\)

Orthogonality: For \(j \neq k\) we have \((Av_j)^\top (Av_k) = v_j^\top A^\top Av_k = v_j^\top \lambda_k v_k = \lambda_k v_j^\top v_k = 0\)

After

Front

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\). Proof Included

Back

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\). Proof Included

Shared EWs: For \((A^\top A)v_k = \lambda_k v_k\) we get \(AA^\top A v_k = \lambda_k Av_k\) and thus \(Av_k\) EV and \(\lambda_k\) is an EW of \(AA^\top\).

Orthogonality: For \(j \neq k\) we have \((Av_j)^\top (Av_k) = v_j^\top A^\top Av_k = v_j^\top \lambda_k v_k = \lambda_k v_j^\top v_k = 0\)

Orthogonality: For \(j \neq k\) we have \((Av_j)^\top (Av_k) = v_j^\top A^\top Av_k = v_j^\top \lambda_k v_k = \lambda_k v_j^\top v_k = 0\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Extra | <b>Shared EWs:</b> For \((A\top A)v_k = \lambda_k v_k\) we get \(AA^\top A v_k = \lambda_k Av_k\) and thus \(Av_k\) EV and \(\lambda_k\) is an EW of \(AA^\top\).<br><br><b>Orthogonality:</b> For \(j \neq k\) we have \((Av_j)^\top (Av_k) = v_j^\top A^\top Av_k = v_j^\top \lambda_k v_k = \lambda_k v_j^\top v_k = 0\)<div></div><div></div> | <b>Shared EWs:</b> For \((A^\top A)v_k = \lambda_k v_k\) we get \(AA^\top A v_k = \lambda_k Av_k\) and thus \(Av_k\) EV and \(\lambda_k\) is an EW of \(AA^\top\).<br><br><b>Orthogonality:</b> For \(j \neq k\) we have \((Av_j)^\top (Av_k) = v_j^\top A^\top Av_k = v_j^\top \lambda_k v_k = \lambda_k v_j^\top v_k = 0\)<div></div><div></div> |

Note 9: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

fILs=r`j+*

Before

Front

Spectral Theorem: Any symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has \(n\) real eigenvalues and {{c1::an orthonormal basis of \(\mathbb{R}^{n \times n}\) consisting of it's eigenvectors :: EV}}.

Back

Spectral Theorem: Any symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has \(n\) real eigenvalues and {{c1::an orthonormal basis of \(\mathbb{R}^{n \times n}\) consisting of it's eigenvectors :: EV}}.

After

Front

Spectral Theorem: Any symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has \(n\) real eigenvalues and {{c1::an orthonormal basis of \(\mathbb{R}^{n \times n}\) consisting of it's eigenvectors::EV}}.

Back

Spectral Theorem: Any symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has \(n\) real eigenvalues and {{c1::an orthonormal basis of \(\mathbb{R}^{n \times n}\) consisting of it's eigenvectors::EV}}.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <b>Spectral Theorem: </b>Any symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has {{c1::\(n\) real eigenvalues |

<b>Spectral Theorem: </b>Any symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has {{c1::\(n\) real eigenvalues::EW}} and {{c1::an orthonormal basis of \(\mathbb{R}^{n \times n}\) consisting of it's eigenvectors::EV}}. |

Note 10: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

jn6

Before

Front

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda_1 \neq \lambda_2 \in \mathbb{R}\) be two distinct eigenvalues of \(A\) with corresponding eigenvectors \(v_1, v_2\): \(v_1\) and \(v_2\) are orthogonal. Proof Included

Back

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda_1 \neq \lambda_2 \in \mathbb{R}\) be two distinct eigenvalues of \(A\) with corresponding eigenvectors \(v_1, v_2\): \(v_1\) and \(v_2\) are orthogonal. Proof Included

Proof \(\lambda_1 v_1 ^\top v_2 = (Av_1)^\top v_2 = v_1^\top A ^\top v_2 = v_1^\top (Av_2) = \lambda_2 v_1^\top v_2\) Thus \(v_1^\top v_2\) must be \(0\).

After

Front

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda_1,\lambda_2 \in \mathbb{R}\) be two distinct eigenvalues of \(A\) with corresponding eigenvectors \(v_1, v_2\):

\(v_1\) and \(v_2\) are orthogonal. Proof Included

Back

Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda_1,\lambda_2 \in \mathbb{R}\) be two distinct eigenvalues of \(A\) with corresponding eigenvectors \(v_1, v_2\):

\(v_1\) and \(v_2\) are orthogonal. Proof Included

Proof \(\lambda_1 v_1 ^\top v_2 = (Av_1)^\top v_2 = v_1^\top A ^\top v_2 = v_1^\top (Av_2) = \lambda_2 v_1^\top v_2\) Thus \(v_1^\top v_2\) must be \(0\).

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <div>Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda_1 |

<div>Let \(A \in \mathbb{R}^{n \times n}\) be a symmetric matrix and \(\lambda_1,\lambda_2 \in \mathbb{R}\) be two {{c2::distinct}} eigenvalues of \(A\) with corresponding eigenvectors \(v_1, v_2\):</div><div><br></div><div>\(v_1\) and \(v_2\) are {{c1::orthogonal:: property}}. <i>Proof Included</i></div> |

Note 11: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

jo;...VEEI

Before

Front

A matrix has a complete set of real eigenvectors if all its eigenvalues are real and the geometric multiplicities are the same as the algebraic multiplicities of all it's eigenvalues .

Back

A matrix has a complete set of real eigenvectors if all its eigenvalues are real and the geometric multiplicities are the same as the algebraic multiplicities of all it's eigenvalues .

Example \(I\) has eigenvalue \(1\) with geometric multiplicity \(n\) (\(\dim(N(I - 1 \cdot I)) = n\)) and algebraic multiplicity \(n\) (As the characteristic polynomial of \(I\), \(P(z) = (z - 1)(z - 1) \dots (z - 1)\) with that repeated \(n\) times).

After

Front

A matrix has a complete set of real eigenvectors if all its eigenvalues are real and the geometric multiplicities are the same as the algebraic multiplicities of all it's eigenvalues.

Back

A matrix has a complete set of real eigenvectors if all its eigenvalues are real and the geometric multiplicities are the same as the algebraic multiplicities of all it's eigenvalues.

Example \(I\) has eigenvalue \(1\) with geometric multiplicity \(n\) (\(\dim(N(I - 1 \cdot I)) = n\)) and algebraic multiplicity \(n\) (As the characteristic polynomial of \(I\), \(P(z) = (z - 1)(z - 1) \dots (z - 1)\) with that repeated \(n\) times).

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | A matrix has a <b>complete set of real eigenvectors</b> if {{c1::all its eigenvalues are real and the geometric multiplicities are the same as the algebraic multiplicities of all it's eigenvalues |

A matrix has a <b>complete set of real eigenvectors</b> if {{c1::all its eigenvalues are real and the geometric multiplicities are the same as the algebraic multiplicities of all it's eigenvalues::in terms of multiplicities}}. |

Note 12: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

qn2vol8}8V

Before

Front

Every symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has a real eigenvalue \(\lambda\).

Back

Every symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has a real eigenvalue \(\lambda\).

After

Front

Every symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has a real eigenvalue \(\lambda\).

Back

Every symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has a real eigenvalue \(\lambda\).

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Every symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has {{c1::a real eigenvalue \(\lambda\):: |

Every symmetric matrix \(A \in \mathbb{R}^{n \times n}\) has {{c1::a real eigenvalue \(\lambda\)::existence}}. |

Note 13: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

qr+Ln*lsd_

Before

Front

Any symmetric matrix has only real eigenvalues.

Back

Any symmetric matrix has only real eigenvalues.

After

Front

Any symmetric matrix has only real eigenvalues.

Back

Any symmetric matrix has only real eigenvalues.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Any symmetric matrix has {{c1:: |

Any symmetric matrix has {{c1::only real eigenvalues::fact about the EWs}}. |

Note 14: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

qs3_-P{w4Q

Before

Front

Similar matrices \(A\) and \(B = S^{-1}AS\) have the same eigenvalues .

Back

Similar matrices \(A\) and \(B = S^{-1}AS\) have the same eigenvalues .

After

Front

Similar matrices \(A\) and \(B = S^{-1}AS\) have the same eigenvalues.

Back

Similar matrices \(A\) and \(B = S^{-1}AS\) have the same eigenvalues.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Similar matrices \(A\) and \(B = S^{-1}AS\) have {{c1::the same eigenvalues |

Similar matrices \(A\) and \(B = S^{-1}AS\) have {{c1::the same eigenvalues::shared property}}. |

Note 15: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

s,(|c|R^[@

Before

Front

- If \(m = n\) (\(A\) is square), the system \(Ax = b\) is called square. Typically solvable.

- If \(m < n\) (A is a wide matrix), the system \(Ax = b\) is called underdetermined. These are typically solvable.

- If \(m > n\) (A is a tall matrix) the system \(Ax = b\) is called overdetermined. Typically not solvable.

Back

- If \(m = n\) (\(A\) is square), the system \(Ax = b\) is called square. Typically solvable.

- If \(m < n\) (A is a wide matrix), the system \(Ax = b\) is called underdetermined. These are typically solvable.

- If \(m > n\) (A is a tall matrix) the system \(Ax = b\) is called overdetermined. Typically not solvable.

(Undetermined because there are more variables than equations.)

After

Front

- If \(m = n\) (\(A\) is square), the system \(Ax = b\) is called square. Typically solvable.

- If \(m < n\) (A is a wide matrix), the system \(Ax = b\) is called underdetermined. These are typically solvable.

- If \(m > n\) (A is a tall matrix) the system \(Ax = b\) is called overdetermined. Typically not solvable.

Back

- If \(m = n\) (\(A\) is square), the system \(Ax = b\) is called square. Typically solvable.

- If \(m < n\) (A is a wide matrix), the system \(Ax = b\) is called underdetermined. These are typically solvable.

- If \(m > n\) (A is a tall matrix) the system \(Ax = b\) is called overdetermined. Typically not solvable.

(Undetermined because there are more variables than equations.)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <ol><li>If \(m = n\) (\(A\) is square), the system \(Ax = b\) is called square. Typically solvable. </li><li>If \(m < n\) (A is a wide matrix), the system \(Ax = b\) is called {{c1::underdetermined}}. These are {{c1::typically solvable:: |

<ol><li>If \(m = n\) (\(A\) is square), the system \(Ax = b\) is called square. Typically solvable. </li><li>If \(m < n\) (A is a wide matrix), the system \(Ax = b\) is called {{c1::underdetermined}}. These are {{c1::typically solvable::solvability}}. </li><li>If \(m > n\) (A is a tall matrix) the system \(Ax = b\) is called {{c2::overdetermined}}. Typically {{c2::not solvable::solvability}}.</li></ol> |

Note 16: ETH::LinAlg

Deck: ETH::LinAlg

Note Type: Horvath Cloze

GUID:

modified

Note Type: Horvath Cloze

GUID:

tQtZJZ|Ls+

Before

Front

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\).

Both matrices are symmetric and PSD.

Proof Included

Back

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\).

Both matrices are symmetric and PSD.

Proof Included

Proof \(G = AA^\top\) and \(G = A^\top A\) are PSD.

- \(x^\top G x = x^\top (A^\top A ) x = (Ax)^\top (Ax) = ||Ax||^2 \geq 0\)

- \(x^\top G x = x^\top AA^\top x = (A^\top x)^\top (A^\top x) = ||A^\top x||^2 \geq 0\)

After

Front

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones as of \(AA^\top\).

Both matrices are symmetric and PSD.

Proof Included

Back

Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones as of \(AA^\top\).

Both matrices are symmetric and PSD.

Proof Included

Proof \(G = AA^\top\) and \(G = A^\top A\) are PSD.

- \(x^\top G x = x^\top (A^\top A ) x = (Ax)^\top (Ax) = ||Ax||^2 \geq 0\)

- \(x^\top G x = x^\top AA^\top x = (A^\top x)^\top (A^\top x) = ||A^\top x||^2 \geq 0\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <div>Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones of \(AA^\top\).</div><div>Both matrices are {{c3::<em>symmetric</em> and <i>PSD</i>}}.</div><div><i>Proof Included</i><br></div> | <div>Given a real matrix \(A \in \mathbb{R}^{n \times n}\), the non-zero eigenvalues of \(A^\top A\) are the same ones as of \(AA^\top\).</div><div><br></div><div>Both matrices are {{c3::<em>symmetric</em> and <i>PSD</i>}}.</div><div><i>Proof Included</i><br></div> |