Die bedingte Wahrscheinlichkeit \(\Pr[A|B]\) von \(A\) gegeben \(B\) ist definiert durch \[\Pr[A|B] := {{c1::\frac{\Pr[A \cap B]}{\Pr[B]} }}.\]

Note 1: ETH::2. Semester::A&W

Note Type: Horvath Cloze

GUID:

A-7$#*Ia_p

Previous

Note did not exist

New Note

Front

Die bedingte Wahrscheinlichkeit \(\Pr[A|B]\) von \(A\) gegeben \(B\) ist definiert durch \[\Pr[A|B] := {{c1::\frac{\Pr[A \cap B]}{\Pr[B]} }}.\]

Back

Die bedingte Wahrscheinlichkeit \(\Pr[A|B]\) von \(A\) gegeben \(B\) ist definiert durch \[\Pr[A|B] := {{c1::\frac{\Pr[A \cap B]}{\Pr[B]} }}.\]

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Seien \(A\) und \(B\) Ereignisse mit \(\Pr[B] > 0\). <br><br> Die bedingte Wahrscheinlichkeit \(\Pr[A|B]\) von \(A\) gegeben \(B\) ist definiert durch \[\Pr[A|B] := {{c1::\frac{\Pr[A \cap B]}{\Pr[B]} }}.\] |

Note 2: ETH::2. Semester::A&W

Note Type: Horvath Cloze

GUID:

I(y+n3Ez0R

Previous

Note did not exist

New Note

Front

Dann gilt: \[\Pr[B] = {{c1::\sum_{i=1}^{n} \Pr[B|A_i] \cdot \Pr[A_i]}}.\]

Back

Dann gilt: \[\Pr[B] = {{c1::\sum_{i=1}^{n} \Pr[B|A_i] \cdot \Pr[A_i]}}.\]

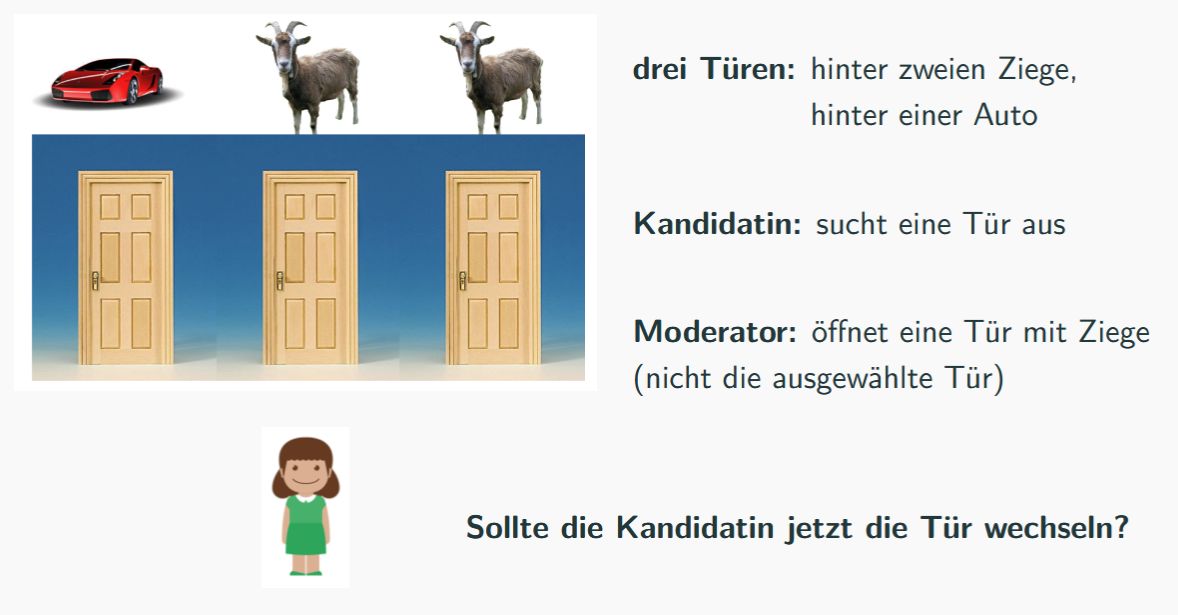

Beispiel: Ziegenproblem

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Seien \(A_1, \ldots, A_n\) paarweise disjunkte Ereignisse und sei \(B \subseteq A_1 \cup \cdots \cup A_n\). <br><br> Dann gilt: \[\Pr[B] = {{c1::\sum_{i=1}^{n} \Pr[B|A_i] \cdot \Pr[A_i]}}.\] | |

| Extra | Satz von der totalen Wahrscheinlichkeit<br><br><b>Beispiel: Ziegenproblem</b><br><img src="paste-fe1bd74214a82f2008614d31f66a80c281f1f024.jpg"> |

Note 3: ETH::2. Semester::A&W

Note Type: Horvath Cloze

GUID:

htE!5%59G$

Previous

Note did not exist

New Note

Front

Falls \(\Pr[A_1 \cap \cdots \cap A_n] > 0\), so gilt: \[\Pr[A_1 \cap \cdots \cap A_n] = {{c1::\Pr[A_1] \cdot \Pr[A_2|A_1] \cdot \Pr[A_3|A_1 \cap A_2] \cdots \Pr[A_n|A_1 \cap \cdots \cap A_{n-1}]}}.\]

Back

Falls \(\Pr[A_1 \cap \cdots \cap A_n] > 0\), so gilt: \[\Pr[A_1 \cap \cdots \cap A_n] = {{c1::\Pr[A_1] \cdot \Pr[A_2|A_1] \cdot \Pr[A_3|A_1 \cap A_2] \cdots \Pr[A_n|A_1 \cap \cdots \cap A_{n-1}]}}.\]

Beispiel: Geburtstagsproblem

Betrachte einen Raum mit \(m\) Personen.

Was ist die Wahrscheinlichkeit, dass zwei Personen am gleichen Tag Geburtstag haben?

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Seien \(A_1, \ldots, A_n\) Ereignisse. <br><br> Falls \(\Pr[A_1 \cap \cdots \cap A_n] > 0\), so gilt: \[\Pr[A_1 \cap \cdots \cap A_n] = {{c1::\Pr[A_1] \cdot \Pr[A_2|A_1] \cdot \Pr[A_3|A_1 \cap A_2] \cdots \Pr[A_n|A_1 \cap \cdots \cap A_{n-1}]}}.\] | |

| Extra | Multiplikationssatz<br><br><b>Beispiel: Geburtstagsproblem</b><br>Betrachte einen Raum mit \(m\) Personen.<br>Was ist die Wahrscheinlichkeit, dass zwei Personen am gleichen Tag Geburtstag haben? |

Note 4: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

Am

Previous

Note did not exist

New Note

Front

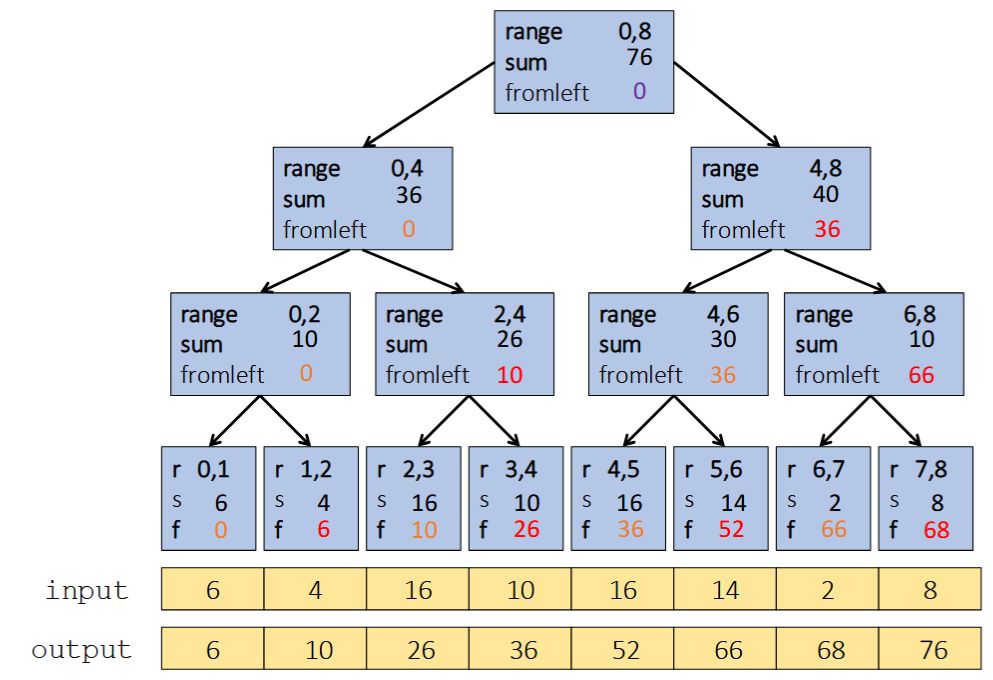

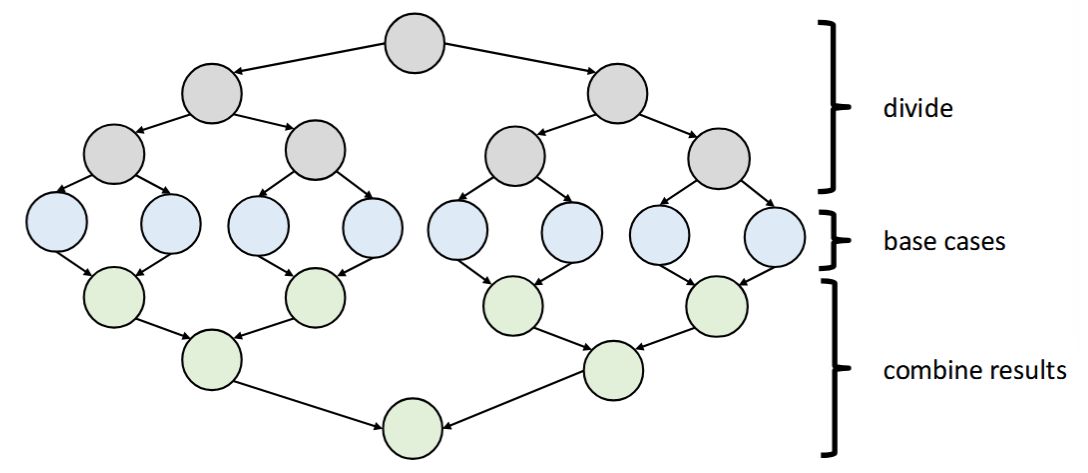

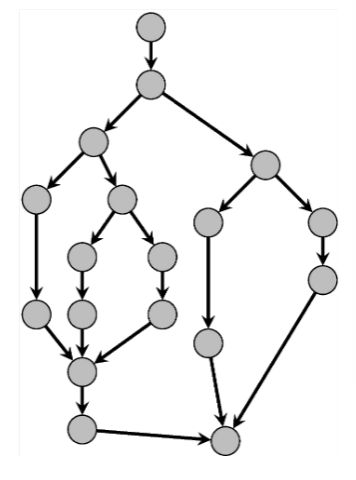

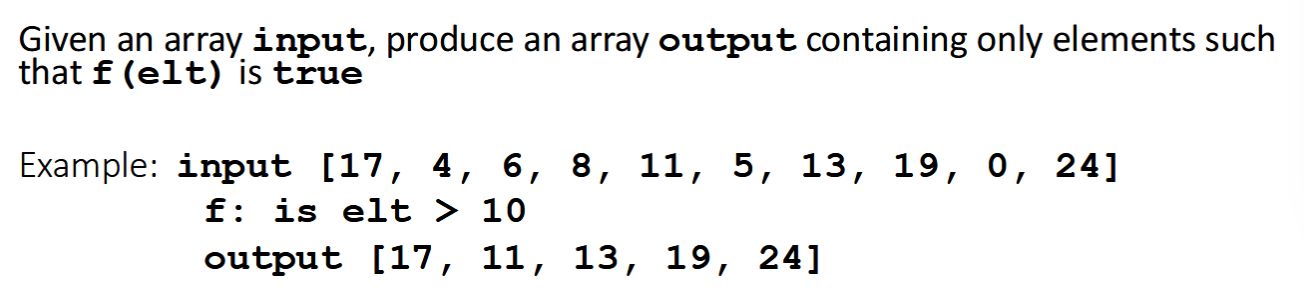

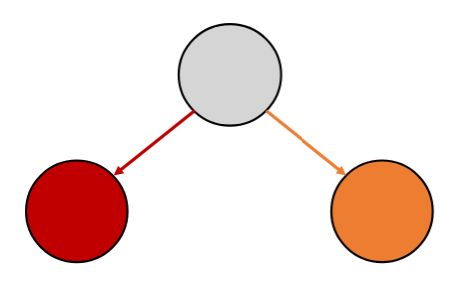

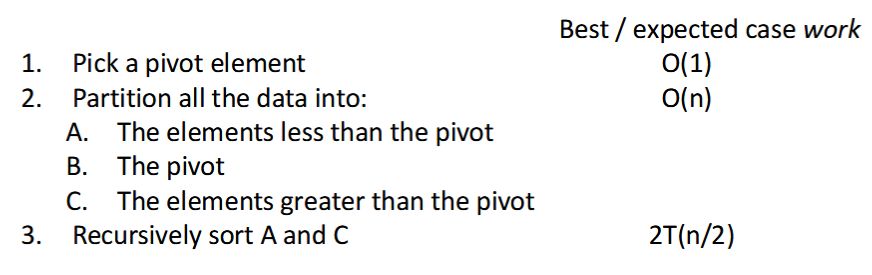

The parallel-prefix algorithm does two passes:

- First pass builds a tree bottom-up: the "up" pass

- Second pass traverses the tree top-down: the "down" pass

Back

The parallel-prefix algorithm does two passes:

- First pass builds a tree bottom-up: the "up" pass

- Second pass traverses the tree top-down: the "down" pass

So in total there is \(O(n)\) work and \(O(\log n)\) span

So like with array summing, parallelism is \(n / \log n\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <b>Parallel prefix-sum</b> <br><br> The parallel-prefix algorithm does two passes:<br><ol><li> First pass {{c1::builds a tree bottom-up: the "up" pass}}</li><li> Second pass {{c1::traverses the tree top-down: the "down" pass}}</li></ol> | |

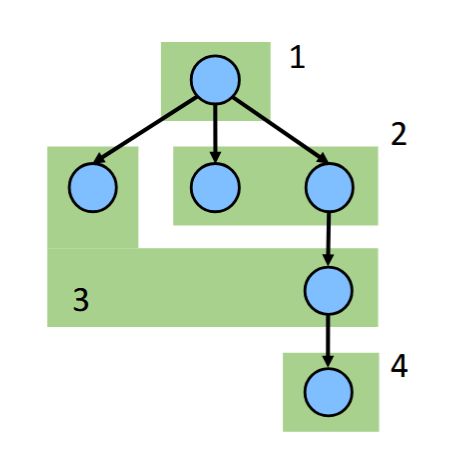

| Extra | Each pass has \(O(n)\) work and \(O(\log n)\) span<br>So in total there is \(O(n)\) work and \(O(\log n)\) span<br>So like with array summing, parallelism is \(n / \log n\)<br><br><div><img src="paste-c29833901d0bef4bf829b08f15583acc2ac94c68.jpg"><br></div> |

Note 5: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

DFp@RgOWZ7

Previous

Note did not exist

New Note

Front

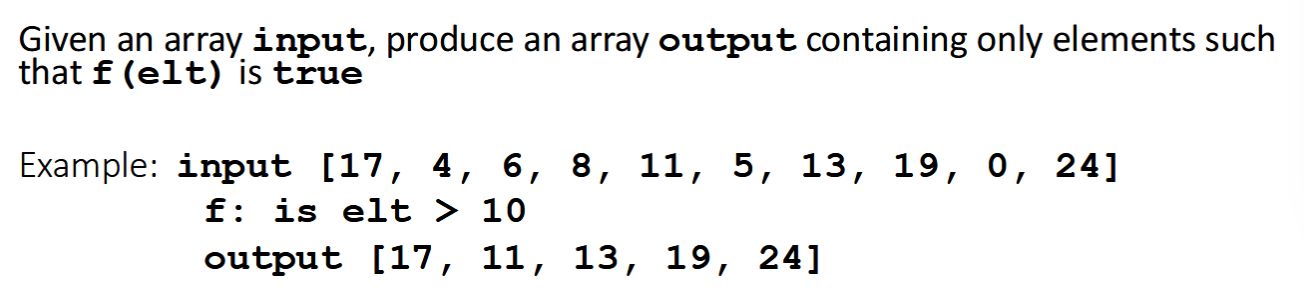

- How much parallelism exist in the task graph

- Gives an upper bound on how much speedup we can get

Back

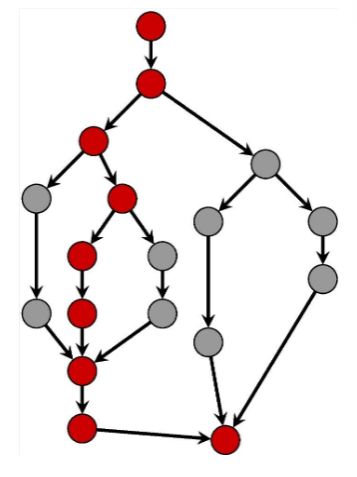

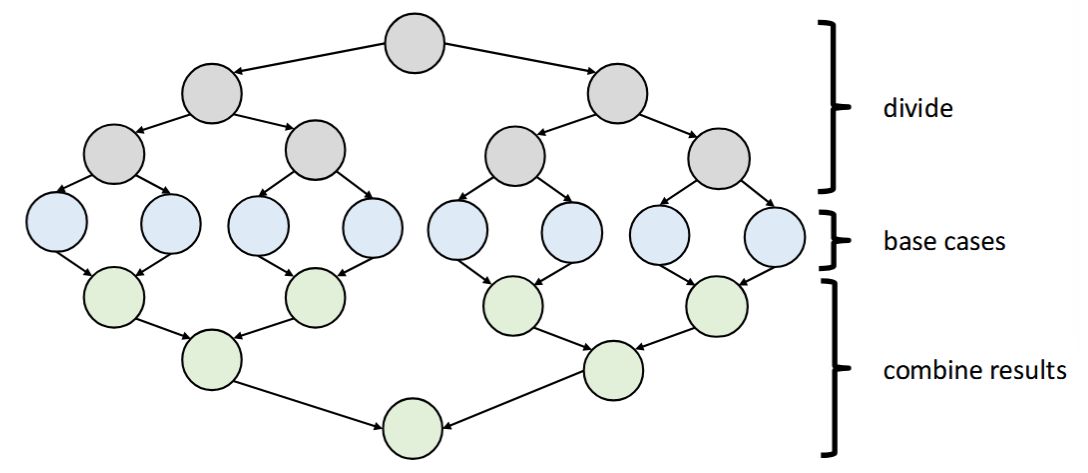

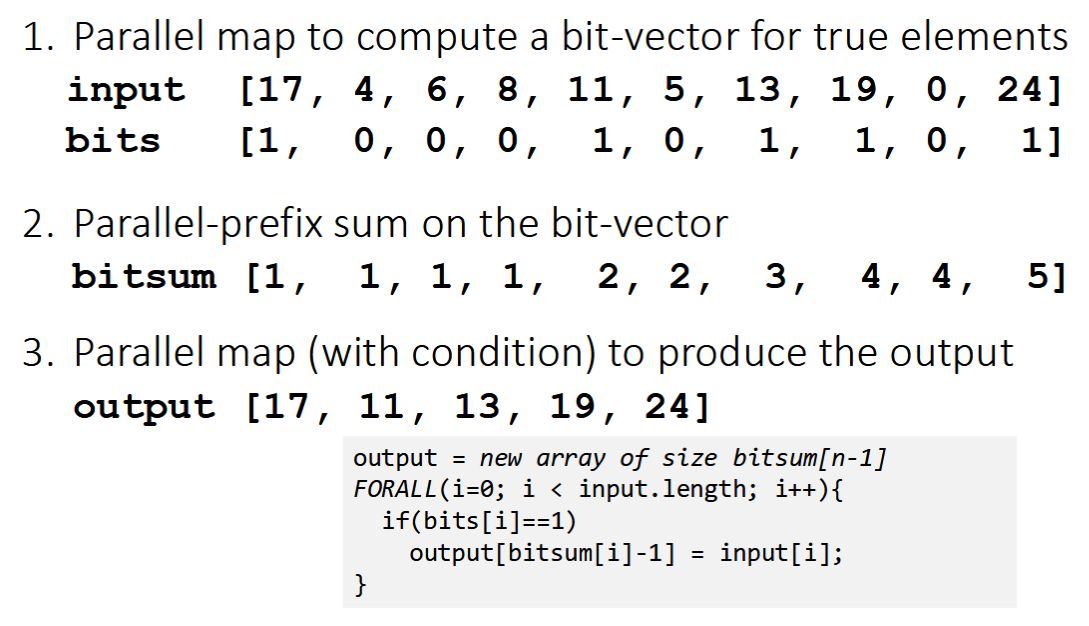

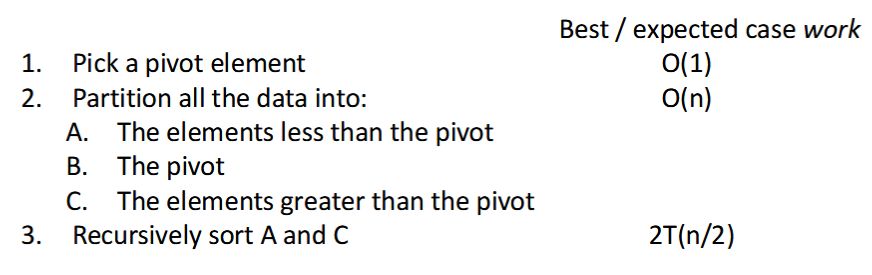

- How much parallelism exist in the task graph

- Gives an upper bound on how much speedup we can get

On this graph: \(T_1 / T_\infty = 18 / 9 = 2\)

On this graph: \(T_1 / T_\infty = 18 / 9 = 2\)

This means that even with infinite cores and threads, the best possible speedup is 2.

Bottleneck is the span – DAG structure does not allow more parallelism.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

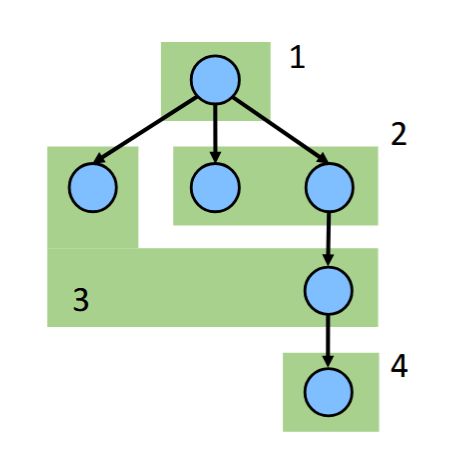

| Text | Parallelism in DAG = \({{c1::T_1 / T_\infty}}\) <ul> <li>How much parallelism exist in the task graph</li> <li>Gives an upper bound on how much speedup we can get</li> </ul> | |

| Extra | <img src="paste-ec818a1d799c2a19ea6cad709291b848ccaa132c.jpg" style="float: right;">On this graph: \(T_1 / T_\infty = 18 / 9 = 2\) <br><br> This means that even with infinite cores and threads, the best possible speedup is 2.<br><br> Bottleneck is the span – DAG structure does not allow more parallelism. |

Note 6: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

I>a

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Reduction always achieve \(O({{c1::\log n}})\) parallel time. |

Note 7: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

IExk};lu~_

Before

Front

Back

After

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | A {{c1::map}} operates on {{c2::each element of a collection independently}} to create |

A {{c1::map}} operates on {{c2::each element of a collection independently}} to create {{c2::a new collection of the same size}}. |

| Extra | For instance vector addition that computes the sum of a collection of tuples (containing the nth element of both vectors). | For instance vector addition that computes the sum of a collection of tuples (containing the n-th element of both vectors). |

Note 8: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

IU~eZh[{|t

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

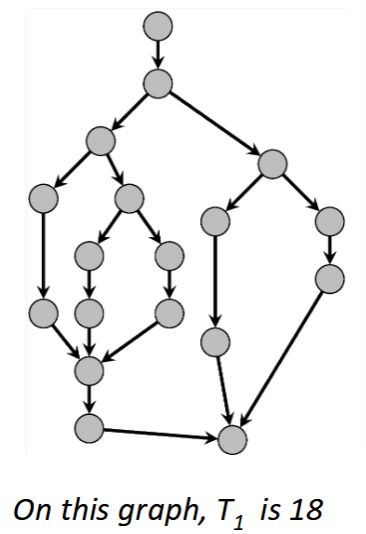

| Text | \(T_1\) in DAG: <strong>work</strong> (total amount of work) = {{c1::The sum of the time cost of all nodes in graph}} | |

| Extra | (As if we executed graph sequentially (\(p=1\)).)<br><br><img src="paste-cc5039f2b639a958b8652ea182decb023fb2b3fa.jpg"> |

Note 9: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

MOPb-=%nM*

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | The ForkJoin Framework gives an expected-time guarantee of {{c1::asymptotically optimal}}! |

Note 10: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID:

N&-I&(}AW,

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Front | What is \(T_2\) for this graph?<br><br><img src="paste-efb4078bb29155e5f01b879ea926a36846ce956f.jpg"> | |

| Back | \(T_2\) will be 4 with this scheduling (we have 4 time steps) |

Note 11: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

N]|Ip8DGl(

Previous

Note did not exist

New Note

Front

Back

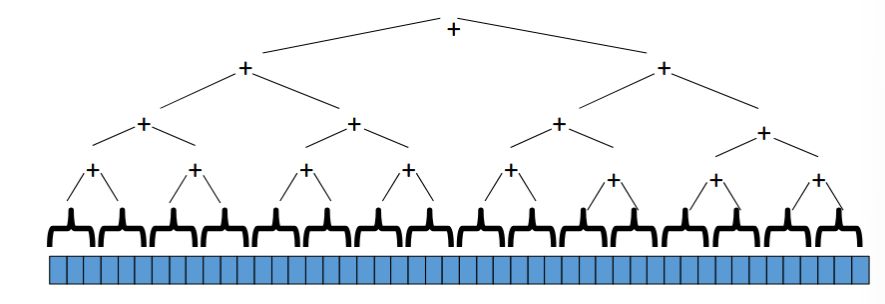

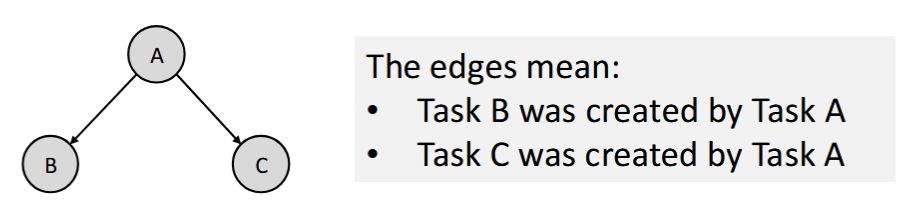

Nodes: Units of work

Nodes: Units of work- FJ: tasks; Cilk: finer-grained strands

- Source must finish before destination starts (logical completion, not immediate termination)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

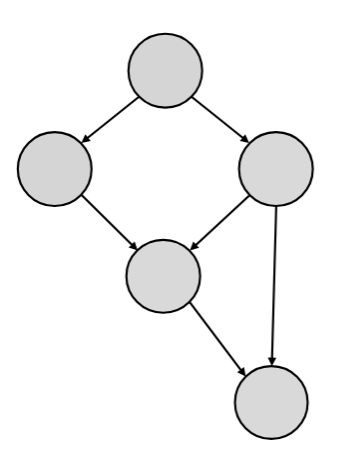

| Text | A program execution using fork and join can be seen as a {{c1::DAG}}. | |

| Extra | <img src="paste-5f03284709dc83297ef59e92187f035b0919be20.jpg" style="float: right;">Nodes: Units of work<br><ul><li>FJ: tasks; Cilk: finer-grained strands</li></ul>Edges: Dependencies<br><ul><li>Source must finish before destination starts (logical completion, not immediate termination)</li></ul><div>The DAG does not depict "who is running when", but rather "who must finish before what"<br></div> |

Note 12: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

O8.pdZ.QQ~

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Maps achieve \(O({{c1::\log n}})\) | |

| Extra | via Divide & Conquer |

Note 13: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

PNcAc+sAUB

Previous

Note did not exist

New Note

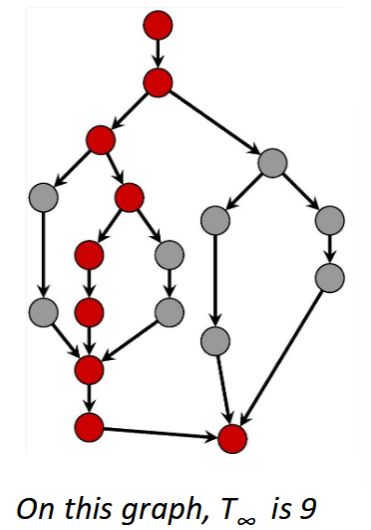

Front

- One from the forked task that just finished

- One from the continuation of the original task (which was waiting for the forked task to complete)

Back

- One from the forked task that just finished

- One from the continuation of the original task (which was waiting for the forked task to complete)

The continuation does not execute until both branches leading into the join node are complete.

Field-by-field Comparison

| Field | Before | After |

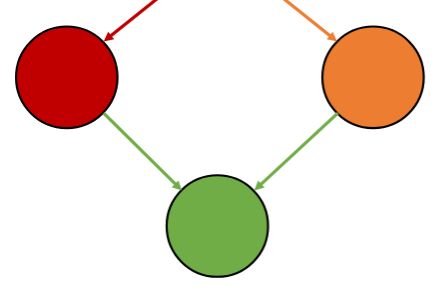

|---|---|---|

| Text | A join after a fork creates a node with two incoming edges:<br><ul><li>One from {{c1::the forked task that just finished}}</li><li>One from {{c2::the continuation of the original task (which was waiting for the forked task to complete)}}</li></ul> | |

| Extra | <img src="paste-1ce0eec3f1632895953635ebfd77d792ac16328e.jpg"><br><br>The continuation does not execute until both branches leading into the join node are complete. |

Note 14: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID:

RiW}{]O2lc

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Front | What is this?<br><br><img src="paste-3f79db399d4701fc72b0abef0f7f3c31514ad444.jpg"> | |

| Back | Fork-Join. |

Note 15: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

bHemC#>81}

Previous

Note did not exist

New Note

Front

- A wide task graph → higher potential parallelism (shorter \(T_\infty\))

- A deep task graph → more sequential dependencies (longer \(T_\infty\), less speedup)

Back

- A wide task graph → higher potential parallelism (shorter \(T_\infty\))

- A deep task graph → more sequential dependencies (longer \(T_\infty\), less speedup)

Parallelism is limited by dependencies.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Intuition from the DAG:<br><ul><li>A wide task graph → {{c1::higher potential parallelism (shorter \(T_\infty\))}}</li><li> A deep task graph → {{c1::more sequential dependencies (longer \(T_\infty\), less speedup)}}</li></ul> | |

| Extra | Speedup is limited by the ratio \(T_1 / T_\infty\) (work versus critical path → Amdahl's law).<br><br> Parallelism is limited by dependencies. |

Note 16: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

cl@Oy)/+yX

Previous

Note did not exist

New Note

Front

Back

- Amdahl's Law describes the limit of speedup due to sequential parts of a program

- \(T_\infty\) (span) in the DAG is the practical representation of the "sequential fraction" in Amdahl's Law

- \(T_\infty\) is the fundamental cause of the speedup limit - it represents the longest sequential dependency

- If we reduce \(T_\infty\), we get closer to ideal speedup

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Designing parallel algorithms is about {{c1::decreasing span without increasing work too much}}. | |

| Extra | <ol><li>Amdahl's Law describes the limit of speedup due to sequential parts of a program</li> <li>\(T_\infty\) (span) in the DAG is the practical representation of the "sequential fraction" in Amdahl's Law</li> <li>\(T_\infty\) is the fundamental cause of the speedup limit - it represents the longest sequential dependency</li> <li>If we reduce \(T_\infty\), we get closer to ideal speedup</li></ol> |

Note 17: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

ho0vHdt>P=

Previous

Note did not exist

New Note

Front

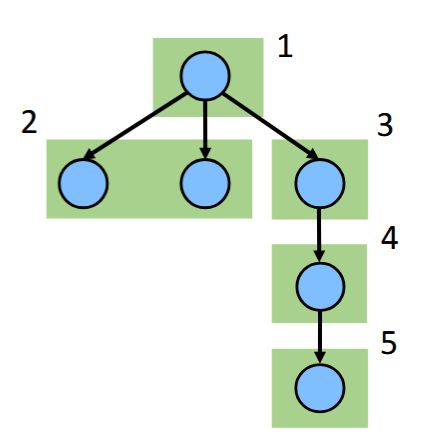

- Sequential: \(O(n)\) (= \(T_1\))

- Parallel: \(O(\log n)\) (= \(T_\infty\), span)

- Parallelism: \(O(n / \log n)\)

Back

- Sequential: \(O(n)\) (= \(T_1\))

- Parallel: \(O(\log n)\) (= \(T_\infty\), span)

- Parallelism: \(O(n / \log n)\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Summing an array: <ul> <li>Sequential: \(O(n)\) (= \(T_1\))</li> <li>Parallel: \(O(\log n)\) (= \(T_\infty\), span)</li> <li>Parallelism: \(O({{c1::n / \log n}})\)</li> </ul> | |

| Extra | <img src="paste-8fcca64d16a4056baa4d178ffd68b0a3f33dc2a2.jpg"> |

Note 18: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID:

hysV}fw8F.

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Front | What is \(T_2\) for this graph?<br><br><img src="paste-954e8266e90e2ada1c35e6797835e04782c95786.jpg"> | |

| Back | \(T_2\) will be 5 with this scheduling (we have 5 time steps) |

Note 19: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

m+e%70x&jD

Previous

Note did not exist

New Note

Front

- We can control p by configuring the thread pool (

ForkJoinPool(p)) - We cannot control \(T_p\) - it depends on:

- Task dependencies in the DAG

- Work distribution and load balancing

- Thread contention and scheduling by the JVM/OS

Back

- We can control p by configuring the thread pool (

ForkJoinPool(p)) - We cannot control \(T_p\) - it depends on:

- Task dependencies in the DAG

- Work distribution and load balancing

- Thread contention and scheduling by the JVM/OS

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | \(T_p\) in DAG: execution time on p threads <ul> <li>We can control p by {{c1::configuring the thread pool (<code>ForkJoinPool(p)</code>)}}</li> <li>We cannot control \(T_p\) - it depends on: <ul> <li>{{c2::Task dependencies in the DAG}}</li> <li>{{c2::Work distribution and load balancing}}</li> <li>{{c2::Thread contention and scheduling by the JVM/OS}}</li> </ul> </li> </ul> | |

| Extra | <img src="paste-125fa8fd92151f941d37430303d0eece6fee7355.jpg"> |

Note 20: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

n1QrJ:]#vj

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | FJ work stealing scheduler: \(T_p = {{c1::O(T_1 / p + T_\infty)}}\) |

Note 21: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

nZp7%Bxh_j

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | For parallelism, balanced trees are generally {{c1::better::better/worse}} than lists so that {{c1::we can get to all the data exponentially faster (\(O(\log n)\) vs. \(O(n)\))}}. | |

| Extra | Trees have the same flexibility as lists compared to arrays. |

Note 22: ETH::2. Semester::PProg

Note Type: Horvath Occlusio

GUID:

n^z!&uCe{+

Previous

Note did not exist

New Note

Front

Back

- Just using a different base case for the prefix sum

- No effect on asymptotic complexity

- Again no effect on asymptotic complexity

- 2 or 3 passes, but 3 is a constant

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

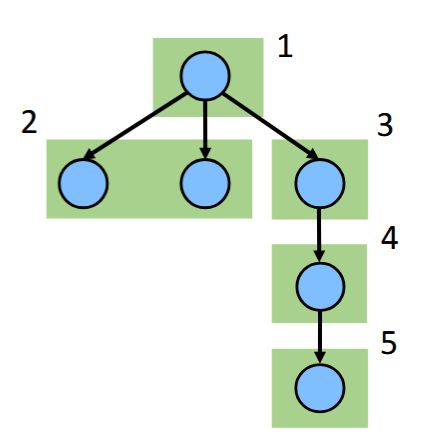

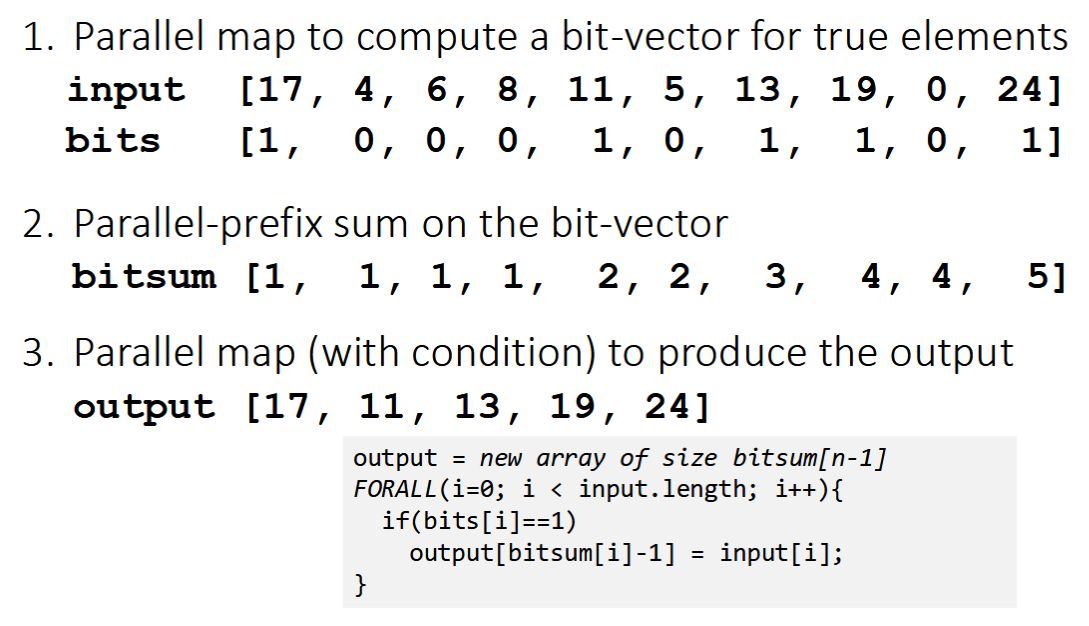

| Occlusion | {{c1::image-occlusion:rect:left=.057:top=.0000:width=.9345:height=.9956}}<br>{{c3::image-occlusion:rect:left=.057:top=.515:width=.9323:height=.4768}}<br>{{c2::image-occlusion:rect:left=.057:top=.309:width=.9345:height=.6905}}<br> | |

| Image | <img src="paste-72833918aa508ef6829e1acb81a9c42f64683e8e.jpg"> | |

| Header | <b>Pack<br></b><img src="paste-73d6ad67e165e0912c727f94b284851f7906443d.jpg"><b><br></b> | |

| Back Extra | First two steps can be combined into one pass <ul> <li>Just using a different base case for the prefix sum</li> <li>No effect on asymptotic complexity</li> </ul> Can also combine third step into the down pass of the prefix sum <ul> <li>Again no effect on asymptotic complexity</li> </ul> Analysis: \(O(n)\) work, \(O(\log n)\) span <ul> <li>2 or 3 passes, but 3 is a constant</li> </ul> |

Note 23: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

o3b/S}:de9

Previous

Note did not exist

New Note

Front

Lower bound:

- Work law: \(T_p \geq T_1 / p\)

- Span law: \(T_p \geq T_\infty\)

- \(T_p \geq \max(T_1 / p,\ T_\infty)\) -> no scheduler can do better than this

Back

Lower bound:

- Work law: \(T_p \geq T_1 / p\)

- Span law: \(T_p \geq T_\infty\)

- \(T_p \geq \max(T_1 / p,\ T_\infty)\) -> no scheduler can do better than this

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <b>DAG bounds to execution time</b> <br><br> Lower bound: <ul> <li>Work law: \(T_p \geq {{c1::T_1 / p}}\)</li> <li>Span law: \(T_p \geq{{c2:: T_\infty}}\)</li> <li>\(T_p \geq {{c3::\max(T_1 / p,\ T_\infty)}}\) -> no scheduler can do better than this</li> </ul> |

Note 24: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

p%2wWlRz`X

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | \(T_\infty\) in DAG: span, critical path length ={{c1::Longest chain of dependent operations in the DAG}} | |

| Extra | Best possible time using infinite cores.<br><br><img src="paste-0aec79257628a646eb122ec687c1502a3a7b665e.jpg"> |

Note 25: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID:

ph|&WP$lM$

Previous

Note did not exist

New Note

Front

- The elements less than the pivot

- The pivot

- The elements greater than the pivot

Back

- The elements less than the pivot

- The pivot

- The elements greater than the pivot

- We know a pack is \(O(n)\) work, \(O(\log n)\) span

- Pack elements less than pivot into left side of

auxarray - Pack elements greater than pivot into right side of

auxarray - Put pivot between them and recursively sort

- With a little more cleverness, can do both packs at once but no effect on asymptotic complexity

Parallelism is \(O(n \log n) / O(\log^2 n) = O(n / \log n)\).

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Front | For Quicksort, we partition all the data into: <ol type="A"> <li>The elements less than the pivot</li> <li>The pivot</li> <li>The elements greater than the pivot</li> </ol><div>How can this be parallelized?</div> | |

| Back | This is just two packs! <ul> <li>We know a pack is \(O(n)\) work, \(O(\log n)\) span</li> <li>Pack elements less than pivot into left side of <code>aux</code> array</li> <li>Pack elements greater than pivot into right side of <code>aux</code> array</li> <li>Put pivot between them and recursively sort</li> <li>With a little more cleverness, can do both packs at once but no effect on asymptotic complexity</li> </ul> With \(O(\log n)\) span for partition, the total span for quicksort is \(T(n) = O(\log n) + 1T(n/2) = O(\log^2 n)\).<br> Parallelism is \(O(n \log n) / O(\log^2 n) = O(n / \log n)\). |

Note 26: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

q>;I#@SD]P

Previous

Note did not exist

New Note

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | The DAG captures task dependencies and potential parallelism but does not tell us {{c1::actual execution time}}. |

Note 27: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

s!p!];uFf;

Previous

Note did not exist

New Note

Front

Upper bound:

- Parallelizable work: \(T_1 / p\)

- DAG dependencies: \(O(T_\infty)\)

- \(T_p \leq T_1 / p + O(T_\infty)\)

Back

Upper bound:

- Parallelizable work: \(T_1 / p\)

- DAG dependencies: \(O(T_\infty)\)

- \(T_p \leq T_1 / p + O(T_\infty)\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | <b>DAG bounds to execution time<br></b><br>Upper bound: <ul> <li>Parallelizable work: \({{c1::T_1 / p}}\)</li> <li>DAG dependencies: \({{c2::O(T_\infty)}}\)</li> <li>\(T_p \leq {{c3::T_1 / p + O(T_\infty)}}\)</li> </ul> |

Note 28: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

v[!T>xgF*P

Previous

Note did not exist

New Note

Front

- One to a new forked task

- One to the continuation of the current task

Back

- One to a new forked task

- One to the continuation of the current task

May execute in parallel if worker thread is available.

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | A fork ends a node and creates two outgoing edges:<br><ul><li>One to {{c1::a new forked task}}</li><li>One to {{c1::the continuation of the current task}}</li></ul> | |

| Extra | <img src="paste-cea411eff84adb49b099f7aa3bad8e51a3f80d02.jpg"><br><br>May execute in parallel if worker thread is available. |

Note 29: ETH::2. Semester::PProg

Note Type: Horvath Classic

GUID:

v{zd#AU<}W

Previous

Note did not exist

New Note

Front

Back

- Work: unchanged of course \(O(n \log n)\)

- Span: now \(T(n) = O(n) + 1T(n/2) = O(n)\)

- So parallelism (i.e., work / span) is \(O(\log n)\)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Front | How can we parallelize Quicksort?<br><br><img src="paste-2e1c682cea6540c1e39b1511df2d33cbcc697698.jpg"> | |

| Back | Easy: Do the two recursive calls in parallel <ul> <li>Work: unchanged of course \(O(n \log n)\)</li> <li>Span: now \(T(n) = O(n) + 1T(n/2) = O(n)\)</li> <li>So parallelism (i.e., work / span) is \(O(\log n)\)</li> </ul> |

Note 30: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

wCX~7qsg+^

Previous

Note did not exist

New Note

Front

Back

Maps & Reductions

1971, colorized

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Maps and reductions are the "{{c1::work horses}}" of parallel programming. | |

| Extra | <img src="ang6c4.jpg"><br>Maps & Reductions<br>1971, colorized |

Note 31: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

xKm4/Le-}J

Before

Front

Back

After

Front

Back

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | {{c1::Reductions}} produce |

{{c1::Reductions}} produce {{c2::a single answer from a collection via an associative operator}}. |

| Extra | Examples: max, count, rightmost, sum. |

Note 32: ETH::2. Semester::PProg

Note Type: Horvath Cloze

GUID:

xV0+_,SfYQ

Previous

Note did not exist

New Note

Front

- Execute code

- Spawn other tasks (= start in JT, submit in ExS, fork in FJ)

- Wait for results from other tasks (= join in JT and FJ, get in ExS)

Back

- Execute code

- Spawn other tasks (= start in JT, submit in ExS, fork in FJ)

- Wait for results from other tasks (= join in JT and FJ, get in ExS)

Field-by-field Comparison

| Field | Before | After |

|---|---|---|

| Text | Tasks:<br><ol><li>Execute code</li><li>{{c1::Spawn other tasks (= start in JT, submit in ExS, fork in FJ)}}</li><li>{{c2::Wait for results from other tasks (= join in JT and FJ, get in ExS)}}</li></ol> | |

| Extra | A graph is formed based on spawning tasks:<br><br><img src="paste-49c881948e5bf3f307be6ad2f1a2946538f4da1a.jpg"> |